A 1998 encyclical by Pope John Paul II was titled “Faith and Reason.” It actually condemned pure faith as the basis for religious belief, claiming such belief is instead supported by reason and science. How pretty to think that.

Mark Twain defined faith as “believing what you know ain’t so.” But what does the word “know” there mean? How knowledge and belief work in one’s brain can be tricky. Nobody “believes” something they “know” is false. But some things people resist knowing. Many believe, or think they believe, they’ll go to paradise after death. Yet they aren’t keen to depart. How do we unpack that “belief”?

Occam’s Razor (named for 14th century thinker William of Occam, or Ockham) tells us that among competing explanations, the simplest, with the fewest assumptions or moving parts, is the likeliest. The Daily Show has aptly called it Occam’s Giant Fucking Machete. Because it’s so powerful.

At a gathering of friends, one (an atheist) touted an alien abduction tale he thought compellingly persuasive — witnessed, indeed, by a UN Secretary-General! Wow! Well, there were two basic alternatives:

1) The story was true (despite violating laws of physics in its details, as well as ones making interstellar travel itself virtually impossible); or

2) The story’s “facts” were false.

Applying Occam’s razor, the latter was the simplest explanation. After all, we know people make stuff up all the time. A little googling quickly confirmed this.

Another friend, advocating for Christianity, once gave me a book he felt sure must convince me, titled Who Moved the Stone? It relates Jesus’s entombment and resurrection, answering all conceivable objections to that narrative.

Except one. That the events in question, described (inconsistently!) in the gospels, simply never happened. This flummoxed my friend. As with that alien abduction story, the commonest application of Occam’s razor is to question whether asserted facts are true in the first place, their falsity often being the simplest explanation for some seemingly puzzling phenomenon.

Occam also debunks your typical conspiracy theory — Sandy Hook, 9/11, the JFK assassination, Roswell, the Moon landing. All predicated upon legions of people in on the conspiracy and able to conceal it over decades. Utterly implausible.

Back to faith. Mark Twain’s take is, again, an oversimplification. What does “faith” really mean? We have faith and trust in how others will generally behave. But of course that sort of faith is grounded in a lifetime of experience supporting its validity. And when we lack such experiential basis, we deploy Reagan’s “trust but verify.”

All this is using our reason; it’s not faith in the religious sense, whose very concept eschews any idea of supportive evidence. The whole point is to believe in disregard thereof. Transcending such grubby worldliness and ascending to some holier plane. Or something like that.

Convenient if you’re trying to sell people doctrines that flout actual experience and knowledge. Like the con artist saying, “Who ya gonna believe, me or your lying eyes?”

And yet, much as they persuade themselves into this “faith” paradigm innocent of evidence, religious folks nevertheless grab onto bits of evidence they can somehow construe as corroborating that faith. To assuage that part of the brain unable to make itself wholly abjure reason and go all-in with faith. It’s contradictory, schizophrenic even.

Steven Pinker has said, “I don’t believe in anything you have to believe in.” Scientists are sometimes asked if they really “believe” in evolution. But it’s not something they “believe in” — it’s something they believe. A crucial difference. Belief not from choice, but because facts compel it. Reason means forming beliefs from facts; faith means overriding them.

Some young earth creationists wave off geology and fossils as concocted by God just to trick us and test our faith. Talk about conspiracy theories! Not merely renouncing evidence, but torturing it. Takes an awful lot of work to keep those faith balls in the air.

I apply Occam’s razor regarding religion, with two basic possibilities: 1) It’s true. (Well, Christianity, though not of course Hinduism or those thousand other faiths.) Despite a world looking exactly as we should expect were there no god. Or — 2) It was all made up by fallible credulous people based on primitive superstitions.

A no-brainer.

Betraying Enlightenment hopes of rising rationalism, humanity still flounders in a quicksand of the supernatural and paranormal. Why? Believing whatever you like may seem a form of self-empowerment. Then there’s falling trust in institutions generally — mainstream science prominently among them. Yet many who vaunt skepticism abandon it for sketchy hucksters who veritably scream untrustworthiness. Fauci versus Alex Jones? Use Occam’s Razor, for God’s sake. Or for Darwin’s.

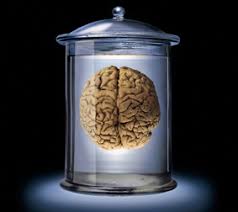

Stephen Hawking had a horrible illness and was given only a few years to live. All he had was his brain. But what a brain.

Which, undaunted, he employed to get on with his life and his calling. (And why would God create ALS?)
