Combination of Concerns

If you are interested in more than two of these …

… you’ll surely enjoy this:

Hands-on introduction to Object Teams

| See you at |  |

Null annotations: prototyping without the pain

So, I’ve been working on annotations @NonNull and @Nullable so that the Eclipse Java compiler can statically detect potential NullPointerExceptions at compile time (see also bug 186342).

By now it’s clear this new feature will not be shipped as part of Eclipse 3.7, but that needn’t stop you from trying it, as I have uploaded the thing as an OT/Equinox plugin.

Behind the scenes: Object Teams

Today’s post shall focus on how I built that plugin using Object Teams, because it nicely demonstrates three advantages of this technology:

- easier maintenance

- easier deployment

- easier development

Before I go into details, let me express a warm invitation to our EclipseCon tutorial on Thursday morning. We’ll be happy to guide your first steps in using OT/J for your most modular, most flexible and most maintainable code.

Maintenance without the pain

It was suggested that I should create a CVS branch for the null annotation support. This is a natural choice, of course. I chose differently because I’m tired of double maintenance: I don’t want to spend my time applying patches from one branch to the other and mending merge conflicts. So I avoid it wherever possible. You don’t think this kind of compiler enhancement can be developed outside the HEAD stream of the compiler without incurring double maintenance? Yes it can. With OT/J we have the tight integration that is needed for implementing the feature while keeping the sources well separated.

The code for the annotation support even lives in a different source repository, but the runtime effect is the same as if all this already were an integral part of the JDT/Core. I should add that for this particular task the integration using OT/J causes a somewhat noticeable performance penalty. The compiler does an awful lot of work, and hooking into this busy machine comes at a price. So yes, at the end of the day this should be re-integrated into the JDT/Core. But for the time being the OT/J solution serves its purpose well (in most other situations you won’t even notice any impact on performance, and we already have further performance improvements for the OT/J runtime in our development pipeline).

Independent deployment

Had I created a branch, the only way to get this to you early adopters would have been via a patch feature. I do have some routine in deploying patch features but they have one big drawback: they create a tight dependency to the exact version of the feature which you are patching. That means, if you have the habit of always updating to the latest I-build of Eclipse I would have to provide a new patch feature for each individual I-build released at Eclipse!

Not so for OT/Equinox plug-ins: in this particular case I have a lower bound: the JDT/Core must be from a build ≥ 20110226. Other than that, the same OT/J-based plug-in seamlessly integrates with any Eclipse build. You may wonder how I can be so sure. There could be changes in the JDT/Core that break the integration. Theoretically: yes. Actually, as a JDT/Core committer I’ll be the first to know about those changes. But most importantly: from many years’ experience of using this technology I know such breakage is very rare, and should a problem occur it can be fixed in the blink of an eye.

As a special treat the OT/J-based plug-in can even be enabled/disabled dynamically at runtime. The OT/Equinox runtime ships with the following introspection view:

Simply unchecking the second item dynamically disables all annotation based null analysis, consistently.

Enjoyable development

The Java compiler is a complex beast. And it’s not exactly small: over 5 MB of source spread over 323 classes in 13 packages. The central package of these (ast) comprises no fewer than 109 classes. To add insult to injury: each line of this code could easily get you puzzling for a day or two. It ain’t easy.

If you are a wizard of the patches feel free to look at the latest patch from the bug. Does that look like something you’d like to work on? Not after you’ve seen how nice & clean things can be, I suppose.

First level: overview

Instead of unfolding the package explorer until it shows all relevant classes (at which point the scrollbar will probably be too small to grasp), a quick look into the outline suffices to see everything relevant:

Here we see one top-level class, actually a team class. The class encapsulates a number of role classes containing the actual implementation.

Navigation to the details

Each of those role classes is bound to an existing class of the compiler, like:

protected class MessageSend playedBy MessageSend { ...

(The first MessageSend denotes a role class in the current team, whereas the second MessageSend refers to an existing base class imported from some other package.)

Ctrl-click on the right-hand class name takes you to that base class (the packages containing those base classes are indicated in the above screenshot). This way the team serves as the single point of reference from which each affected location in the base code can be reached with a single mouse click – no matter how widely scattered those locations are.

When drilling down into details a typical role looks like this:

The 3 top items are “callout” method bindings providing access to fields or methods of the base object. The bottom item is a regular method implementing the new analysis for this particular AST node, and the item above it defines a “callin” binding which causes the new method to be executed after each execution of the corresponding base method.

Locality of new information flows

Since all these roles define almost normal classes and objects, additional state can easily be introduced as fields in role classes. In fact some relevant information flows of the implementation make use of role fields for passing analysis results directly from one role to another, i.e., the new analysis mostly happens by interaction among the roles of this team.

Selective views, e.g., on inheritance structures

As a final example consider the inheritance hierarchy of class Statement: In the original this is a rather large tree:

Way too large actually to be fully unfolded in a single screenshot. But for the implementation at hand most of these classes are irrelevant. So at the role layer we’re happy to work with this reduced view:

This view is not obtained by any filtering in the IDE, but that’s indeed the real full inheritance tree of the role class Statement. This is just one little example of how OT/J supports the implementation of selective views. As a result, when developing the annotation based null analysis, the code immediately provides a focused view of everything that is relevant, where relevance is directly defined by design intention.

A tale from the real world

I hope I could give an impression of a real world application of OT/J. I couldn’t think of a nicer structure for a feature of this complexity based on an existing code base of this size and complexity. It’s actually fun to work with such powerful concepts.

Did I already say? Don’t miss our EclipseCon tutorial 🙂

Hands-on introduction to Object Teams


Bye, bye, NPE

As I announced earlier most of my time for the JDT currently goes into static analysis, more specifically into analyzing various issues relating to null references.

Since the compiler can’t read our minds on whether we intend to allow null for a method parameter, a method return etc. we should give it a little help: annotate types in method signatures with either @Nullable or @NonNull, thus creating a contract between a method and its callers.

With such contracts it is possible to write code that is provably free of NPEs — wouldn’t that be great?
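To make the idea of a contract a bit more concrete, here is a small sketch in plain Java. The two annotation types are declared locally as hypothetical stand-ins (the prototype lets you configure which qualified names it recognizes), and the Greeter class with its methods is invented purely for illustration. A standard compiler ignores these annotations; the comments mark what a contract-aware compiler could check.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;

// Hypothetical stand-ins for the real annotation types.
@Retention(RetentionPolicy.CLASS)
@Target({ElementType.METHOD, ElementType.PARAMETER, ElementType.LOCAL_VARIABLE})
@interface NonNull {}

@Retention(RetentionPolicy.CLASS)
@Target({ElementType.METHOD, ElementType.PARAMETER, ElementType.LOCAL_VARIABLE})
@interface Nullable {}

class Greeter {
    // Contract: callers must pass a non-null name and may rely on a non-null result.
    @NonNull String greet(@NonNull String name) {
        return "Hello, " + name.trim(); // safe: name is promised to be non-null
    }

    // Contract: the result may be null, so callers have to check it.
    @Nullable String nickname(@NonNull String name) {
        return name.length() > 3 ? name.substring(0, 3) : null;
    }

    String describe(@NonNull String name) {
        @Nullable String nick = nickname(name);
        // Passing nick to greet() without this check would violate greet's contract.
        return nick == null ? greet(name) : greet(nick);
    }
}

class NullContractDemo {
    public static void main(String[] args) {
        Greeter g = new Greeter();
        System.out.println(g.describe("Stephan"));
        System.out.println(g.describe("Al"));
    }
}
```

The point of the sketch is only the division of labour: the signature states who is responsible for null, and each side of the call can then be checked separately.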

Let null contracts become mainstream

The theory and some tools for checking null contracts have been around for a while. I think it is time for the Eclipse Java compiler to make this feature directly available within your favorite IDE.

Towards this goal work in bug 186342 has made good progress, but some issues still need discussing and experimenting before this feature can be released into the JDT.

First prototype available

The current prototype is available for download — see these steps for installing into a fresh Eclipse SDK 3.7 M5.

What the prototype supports:

- @NonNull and @Nullable annotations for method parameters, method return and local variables, seamlessly integrated with the existing flow analysis of the JDT compiler.

- Inheritance of null contracts with the option of compatible refinement in subtypes.

- Configuration of a global default (either @NonNull or @Nullable) to reduce the number of required annotations.

- Configuration of specific qualified names for these annotation types to support compatibility with (some) other tools.

- A few quickfixes for adding obviously missing annotations.
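What “compatible refinement” could look like is sketched below, assuming the usual design-by-contract reading: an overriding method may accept more (a @NonNull parameter becomes @Nullable) and promise more (a @Nullable return becomes @NonNull), but not the other way round. The annotation types are again local stand-ins and the Repository classes are hypothetical.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Target;

// Hypothetical stand-ins for the real annotation types.
@Target({ElementType.METHOD, ElementType.PARAMETER})
@interface NonNull {}

@Target({ElementType.METHOD, ElementType.PARAMETER})
@interface Nullable {}

class Repository {
    // Base contract: the key must not be null, the result may be null.
    @Nullable String find(@NonNull String key) {
        return key.isEmpty() ? null : "value-of-" + key;
    }
}

class DefaultingRepository extends Repository {
    // Refinement: tolerates a null key and never returns null.
    // Every caller written against Repository's contract remains correct.
    @Override
    @NonNull String find(@Nullable String key) {
        if (key == null || key.isEmpty()) {
            return "default";
        }
        return "value-of-" + key;
    }
}

class RefinementDemo {
    public static void main(String[] args) {
        Repository repo = new DefaultingRepository();
        System.out.println(repo.find("a"));
        System.out.println(new DefaultingRepository().find(null));
    }
}
```

The reverse direction (demanding @NonNull where the supertype allowed null, or returning null where the supertype promised a value) would break clients of the supertype, which is why it would not count as compatible under this reading.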

If you’d like to play with these existing features you’re highly welcome to install the plugin and give feedback in the bug.

If you want to see the full story you may want to wait for some more items from my agenda:

- Null annotations for fields

- Nullity profiles for libraries

- Configurability of the default annotation per package and per type.

- More quickfixes

Behind the scenes

There’s a second piece of good news in this development: as long as this feature has not been approved for committing into JDT head, I am faced with the usual choice: either evolve the feature outside CVS or create a branch. Since I didn’t like the effort incurred by either approach – regarding maintenance as well as deployment – I chose the third option: Object Teams.

I transformed my recent patch from the bug into a regular OT/Equinox plug-in. The Object Teams based solution not only avoids the double maintenance associated with branching but also makes the specific code for this feature much easier to read because it is localized in exactly one module. Also, the plug-in is easier to consume because it can be packaged and deployed independently of the concurrent evolution of the JDT.

By the way: those interested in these and other cool things that can be done with Object Teams: don’t miss our EclipseCon tutorial:

Hands-on introduction to Object Teams


A Short Train Ride

Less than a week ago I happily announced that Object Teams is on the Indigo Train.

Much water has gone under the bridge since then and the above statement is history.

Events were triggered by what was actually a little bug in the b3 aggregator. Only by way of this bug did some people notice that there was a patch feature inside the repository, i.e., a feature (“Object Teams Patch for JDT/Core”) that replaces the jdt.core plugin with a variant.

One part of me is very happy this bug occurred because finally an issue got the attention I had tried to raise at various occasions before. The lesson is:

(see this post, e.g.).

The other part of me got very worried because during that debate some harsh statements occurred that would effectively amount to excluding Object Teams from Eclipse.org. That’s a little more attention than I had intended. As in any heated debate some of the arguments sounded to me more like ideology than anything that could possibly be discussed open-mindedly.

I had mixed feelings regarding the technical scope: the outcry only banned one specific technology: patch features. I don’t see how the goal to protect a project’s bits and bytes against influence from other projects can be achieved without also discussing: access to internal, byte-code weaving and – worst of all, I believe – reflection. To be perfectly open: Object Teams uses all these techniques except for reflection. Personally, I would even argue for banning projects that do use setAccessible(true), but that’s not a realistic option because then quite likely the whole Train would dissolve into a mist.
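For readers who haven’t seen it in action, the following sketch shows why setAccessible(true) belongs in this discussion: a few lines of ordinary reflection are enough to overwrite a private field of somebody else’s class. The Vault class and its field are made up for the example; only the java.lang.reflect calls are real API. It assumes both classes live in the same (unnamed) module, since on recent Java versions strong encapsulation restricts this across module boundaries.

```java
import java.lang.reflect.Field;

// Hypothetical victim: a class that believes its state is private.
class Vault {
    private String secret = "original";

    String reveal() {
        return secret;
    }
}

class ReflectionDemo {
    // Overwrites Vault's private field from the outside.
    static void tamper(Vault vault) {
        try {
            Field field = Vault.class.getDeclaredField("secret");
            field.setAccessible(true); // suppresses the language-level access check
            field.set(vault, "tampered");
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        Vault vault = new Vault();
        tamper(vault);
        System.out.println(vault.reveal());
    }
}
```

Nothing in the declaration of Vault reveals that this can happen, and no install-time report mentions it either.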

I am actually guilty of a technical simplification during this debate: I focused too much on the idea that a user would explicitly select features to install, not accounting for the possibility that the jdt.core plugin can well be pulled in invisibly due to dependencies among plugins. So, yes, if Object Teams were still in the repository, and if a user installed a package without the JDT, and if that user never selected the JDT for installation, and if that user selected another feature that implicitly requires the jdt.core plugin, then that user would unexpectedly install the OT variant of the jdt.core. I agree that this is not ideal. I personally would have been happy to take this risk because I know how thoroughly the OT variant is tested for compatibility. And for the remaining minuscule risk I would have been happy to promise same-day fixes. But risk assessment naturally depends on perspective and I understand that others come to different conclusions when weighing the issues.

From two days’ distance I can already laugh at one implication of the central argument, paraphrased as: the JDT/Core team must be protected against harmful actions from the OT team. When spelling this out in names, one of the sentences reads: “Stephan Herrmann must be protected against harmful actions by Stephan Herrmann”. I should really be careful, because I’ll never be able to escape him!

Where to go?

- As we’re banned from the Train, I will hurriedly book a plane ticket to Indigo. Make sure we come by a Graduation office on the way.

- I do hope that bug 316702 gets sufficient attention now. Seriously: if you are so firmly set against patch features, then the UI must report them. And if it reports this, it might as well report other techniques that have similar effects!

- I appreciate any offers for helping OT/J towards a solution that avoids replacing a plugin. As of today and after more than seven years of looking at this, I see no way how this can be done, but that doesn’t mean we shouldn’t try still harder.

Unfortunately, the debate consumed all the time and energy I had planned for preparing a presentation at the EclipseCon Audition. However, Lynn finally made my day by letting me know that my submission is the lucky #42. So I’m making progress towards my all-seasons Eclipse collection 🙂

Object Teams is on the Train to Indigo

Object Teams made the next step for even better integration in Eclipse: starting with today’s Indigo Milestone 3 we’re aboard! No further fiddling with URLs of update sites, simply select the Indigo site…

… and directly install the OTDT from there.

For those who haven’t tried Object Teams before, here are steps 1, 2 & 3:

- Walk through the Quick Start

- Read the basic concepts in our OT/J Primer

- Play with more Examples or browse some Object Teams Patterns

Improvements

The main concern of this milestone release was catching up with the Indigo train, so the list of bugs fixed is shorter than usual. But, hey, by upgrading to Eclipse SDK 3.7M3, the OTDT supports also some new JDT coolness, like, e.g., improved static analysis (I happen to have a finger in this pie, too 🙂 ).

Compatibility

With each new release it is good to test compatibility with older software. So I loaded my previous experiment (“project medal”) into the new milestone. Much as I expected: that little adaptation of the JDT still works as intended in the new version, which wasn’t possible with the original implementation. This again shows: in-place patching can never give you what OT/Equinox excels in: evolvable, modular adaptation.

Let’s talk straight with our users

Users of Eclipse trust in its quality. But what happens when they install additional plug-ins that are not part of the original download package? We tend to say that plug-ins only add to the installed system, but by saying so we’re actually spreading a myth:

“Adding plug-ins to an Eclipse install does not affect anything that is already installed.”

The simplest way to falsify this myth is by installing two plug-ins that together produce a deadlock. Each plug-in in isolation may work perfectly, but adding the other will cause Eclipse to freeze.
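How two individually harmless pieces of code can block each other is sketched below with two hypothetical “plug-ins” that acquire the same two locks in opposite order. This is an illustration only: the lock names are invented, and the sketch uses tryLock() plus a barrier so that it reports the conflict and terminates, whereas real code using lock() for the second lock would hang at that point.

```java
import java.util.concurrent.BrokenBarrierException;
import java.util.concurrent.CyclicBarrier;
import java.util.concurrent.locks.ReentrantLock;

class LockOrderDemo {
    // Two hypothetical shared resources.
    static final ReentrantLock LOCK_A = new ReentrantLock();
    static final ReentrantLock LOCK_B = new ReentrantLock();

    // Takes 'first', waits until all parties hold their first lock,
    // then probes 'second' without blocking. Returns whether 'second' was free.
    static boolean attempt(ReentrantLock first, ReentrantLock second, CyclicBarrier barrier) {
        first.lock();
        try {
            barrier.await();                     // everybody holds their first lock now
            boolean gotSecond = second.tryLock();
            if (gotSecond) {
                second.unlock();
            }
            barrier.await();                     // keep 'first' until all probes are done
            return gotSecond;
        } catch (InterruptedException | BrokenBarrierException e) {
            throw new IllegalStateException(e);
        } finally {
            first.unlock();
        }
    }

    // Runs "plug-in 1" (A then B) and "plug-in 2" (B then A) concurrently.
    // Returns true if each found its second lock taken by the other.
    static boolean bothBlocked() {
        CyclicBarrier barrier = new CyclicBarrier(2);
        boolean[] got = new boolean[2];
        Thread plugin1 = new Thread(() -> got[0] = attempt(LOCK_A, LOCK_B, barrier));
        Thread plugin2 = new Thread(() -> got[1] = attempt(LOCK_B, LOCK_A, barrier));
        plugin1.start();
        plugin2.start();
        try {
            plugin1.join();
            plugin2.join();
        } catch (InterruptedException e) {
            throw new IllegalStateException(e);
        }
        return !got[0] && !got[1];
    }

    public static void main(String[] args) {
        boolean alone = attempt(LOCK_A, LOCK_B, new CyclicBarrier(1));
        System.out.println("alone, second lock free: " + alone);
        System.out.println("together, both blocked: " + bothBlocked());
    }
}
```

Run alone, either party gets both locks. Only the combination produces the conflict, and neither party contains a bug that could be found by looking at it in isolation.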

No zero-risk software install

Sure, in the absence of any correctness proofs, we can never be sure that adding one more plug-in to an already complex system will not break anything. Concurrency is just one of the most cumbersome issues to get right. There are other reasons why installing more is not always better.

Install-time risk assessment

Actually, the p2 UI already distinguishes two kinds of installs: signed and unsigned artifacts, and warns the user when unsigned content is about to be installed. This is great, because now the user can decide whether s/he accepts unsigned content or not.

But this question is just the tip of the iceberg.

Behind the scenes of p2

When you tell p2 to install a given feature a number of things can happen that a normal user will not be aware of:

- Additional required software may be pulled in, possibly from different projects / vendors / repositories

- New features may replace existing plug-ins (patch features).

- Installation may trigger touchpoint actions that modify central parts of the system

- Installed plug-ins may access non-public classes and members of existing plug-ins

- New plug-ins may hook into the Equinox framework (adaptor hooks)

- New plug-ins can weave into the bytecode of existing plug-ins.

Is this bad?

Don’t get me wrong: I believe all these ways of influencing the installation are very useful capabilities for specific goals (and Object Teams utilizes several of those). However, we may want to acknowledge that users have different goals. If you need to be 100% sure that installing a plug-in does not delete anything on your hard disk you will not be happy to learn about the touchpoint instruction “remove”, while apparently others think this a cool feature.

Touchpoint actions have no ethical value, neither good nor bad.

Negotiation over rules

Drawing the line of how a plug-in may affect the rest of the system can be done up-front, by defining rules of conduct. Thou shalt not use internal classes; thou shalt only add, never modify etc. But that would impose a one-size-fits-all regime over all plug-ins to be published, and installations that can or cannot be created from published plug-ins. This regime would never equally suit the anxious and the daring, users with highest security constraints and users loving to play around with the newest and coolest.

Better than one size would be two sizes (conservative & bleeding edge), but then we still wouldn’t be on a par with our users, with all their shades of goals (and fears). Instead of imposing rules I suggest entering into negotiation with our users. By that I mean:

- Really tell them what’s in the boxes

- Let them decide which boxes to install

Proposing: Install capabilities

I could think of a concept of install capabilities at the core of a solution:

- Each artifact may declare a set of non-standard capabilities required for installing, which could include all items from the above list (and more)

- During install a security/risk report will be presented to the user mentioning (a summary of) all capability requests

- Based on that report a user can select which requests to accept, possibly blocking risky plug-ins from installing

- The runtime should enforce that no feature/plug-in uses any install capabilities which it didn’t declare
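The four steps above can be sketched as a tiny data model. Everything here is hypothetical: the capability names are simply derived from the list of things p2 can do behind the scenes, and none of the types exist in p2 or Equinox.

```java
import java.util.EnumSet;
import java.util.Set;

// Hypothetical capability names, one per item of the "behind the scenes" list.
enum InstallCapability {
    PULL_IN_REQUIREMENTS, REPLACE_PLUGIN, TOUCHPOINT_ACTION,
    ACCESS_INTERNALS, ADAPTOR_HOOK, BYTECODE_WEAVING
}

// Hypothetical description of an installable artifact and what it declares.
class Artifact {
    final String id;
    final Set<InstallCapability> declared;

    Artifact(String id, Set<InstallCapability> declared) {
        this.id = id;
        this.declared = declared;
    }
}

class InstallNegotiation {
    // The "risk report": every declared capability the user has not accepted.
    static Set<InstallCapability> openRequests(Artifact artifact, Set<InstallCapability> accepted) {
        Set<InstallCapability> open = EnumSet.noneOf(InstallCapability.class);
        open.addAll(artifact.declared);
        open.removeAll(accepted);
        return open;
    }

    // An artifact installs only if the user accepted all of its requests.
    static boolean mayInstall(Artifact artifact, Set<InstallCapability> accepted) {
        return openRequests(artifact, accepted).isEmpty();
    }

    // Runtime enforcement: using an undeclared capability is rejected.
    static void checkUse(Artifact artifact, InstallCapability used) {
        if (!artifact.declared.contains(used)) {
            throw new SecurityException(artifact.id + " did not declare " + used);
        }
    }
}
```

A cautious user would accept, say, only PULL_IN_REQUIREMENTS, in which case an artifact declaring REPLACE_PLUGIN is blocked and the report names exactly the request that caused it. Where and how the runtime check could actually be hooked in is the hard part and is not addressed by this sketch.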

In this context I previously coined the notion of Gradual Encapsulation including a more elaborate model of negotiation.

More specifically, I filed bug 316702, where I’d love to read some comments.

I strongly feel it’s time to talk straight to our users: adding new artifacts to an existing Eclipse install is not always purely additive. Installation can indeed modify existing parts. In an open-source community, we should tell our users what modifications are requested, what potential risks are associated with a given artifact. They are grown-up and able to decide for themselves.

New Honours, New Synergy

I’m flattered to see my name appear on one more Eclipse web-page:

Thanks to the JDT/Core team for accepting me as a new member!

Actually, I’ve been poking about the JDT source code since 2003 when we started implementing the Object Teams Development Tooling (OTDT) on top of the JDT. So it was only natural that I would stumble upon a bug in the JDT every once in a while (incl. one “greatbug” 🙂 ). In this specific situation, reporting bugs and trying to help find solutions was more than just good Eclipse citizenship: the discussions in bugzilla also helped me to better understand the JDT sources and thus helped me develop the OTDT. And that’s what this post is about: synergy!

Personal Goals

Actually, watching the JDT/Core bug inbox provides a constant stream of cool riddles: spooky Java examples producing unexpected compiler/runtime results. Sometimes those are real fun to crack. Which compiler is right, which one isn’t? What’s the programmer trying to do? I’d say there’s no better way to test your Java knowledge 🙂

Also, watching this stream helps me stay informed, because every patch will eventually need to be merged into the Object Teams branch of the JDT/Core.

But I also have some pet projects that I’d like to actively push forward. Currently I’m thinking about these two:

- Supporting inter-procedural null analysis

- Making the compiler match the OSGi semantics more closely

Inter-procedural null analysis

Naturally, the JDT/Core is a facilitator for tons of cool functionality in the IDE, but it hardly communicates directly with the user. But we have one channel where the compiler can actually talk to the developer and give advice that’s well worth your bucks: errors and warnings. Did you know that the compiler can immediately tell you what’s wrong with this piece of code:

    void foo(String in) {
        if (in == null)
            new IllegalArgumentException("Null is not allowed here");
        ... // real work done here

(Yep, that was bug 236385, see the New&Noteworthy on how to enable).
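In case the problem is easy to overlook: the exception object is allocated but never thrown, so the check has no effect. The following sketch contrasts the two variants in runnable form; the class and method names are invented for the example.

```java
class ArgumentCheckDemo {
    // Buggy variant: the exception is created and immediately dropped.
    static int buggyLength(String in) {
        if (in == null)
            new IllegalArgumentException("Null is not allowed here"); // never thrown
        return in.length(); // still reached with in == null
    }

    // Intended variant: the check actually rejects null.
    static int checkedLength(String in) {
        if (in == null)
            throw new IllegalArgumentException("Null is not allowed here");
        return in.length();
    }

    public static void main(String[] args) {
        try {
            buggyLength(null);
        } catch (NullPointerException e) {
            System.out.println("buggy variant: NullPointerException");
        }
        try {
            checkedLength(null);
        } catch (IllegalArgumentException e) {
            System.out.println("checked variant: " + e.getMessage());
        }
    }
}
```

The buggy variant compiles without error under a plain compiler, which is exactly why a warning about the unused allocation is valuable.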

Many Java developers share the experience that one of the most frequent problems is also one of the most mundane: NPE. Those who are aware of this easily produce the opposite problem: cluttering their code with meaningless null-checks (many of which have no better action than the above IllegalArgumentException, which isn’t much better than throwing the NPE in the first place). The point is, we’d want to know which values can actually be null (and why!) so we write only the necessary null-checks, and for those we should think really hard what would be a suitable action, right? (“This shouldn’t happen” is not an answer!).

Thus I’m happy I could get involved in some improvements of the compiler’s flow analysis. I also experienced that null-analysis combined with aggressive optimization is a delicate issue – remember SDK 3.7M2a? Sorry about that, but to my justification I might add that bug 325755 has always been dormantly present, I only woke’em up. I must admit for those 105 minutes my heart beat was a bit above average 😉 .

Anyway, the current null analysis is still fundamentally limited: it knows nothing about nullness of method arguments nor method call results. So it would be a real cool enhancement if we could feed such information into the compiler. Fortunately, the theory of how to do this (you may either think of improved type systems or of design by contract) is a well-explored subject. Actually, bug 186342 already has some patches in this direction. Since discussing in bugs with long history is a bit tedious I created this wiki page. Sadly, it’s not a technical difficulty that’s keeping us from immediately releasing that patch, but a stalled standardization process 😦 .

However, as outlined in the wiki, here comes another synergy: in case we can’t find a solution that will be sufficiently compatible with future standards, I can easily create an early-adopters release, so sorting out the details of implementation and usage can be done in parallel with the standardization process. How so? I’d simply ship the compiler enhancement as a separate OT/Equinox-enabled plug-in, ready to be tried by early adopters.

OSGi-aware compiler

Some of you may have noticed (here, or here, or here, or here or one of the many duplicates) that the compiler’s concept of a (single, linear) classpath is not a good match for compiling OSGi code. I will soon write another wiki page with my current thinking about that issue. Stay tuned …

Comments?

For both issues I’d appreciate any comments / feedback. I’ll be checking updates of the bugs I listed, or the wiki page or … talk to me during ESE! Let’s move something together.

See you all in Ludwigsburg!

Stephan

Get for free what Coin doesn’t buy you

Ralf Ebert recently blogged about how he extended Java to support a short-hand notation for throwing exceptions, like:

throw "this is wrong";

It’s exactly the kind of enhancement you’d expect from Project Coin, but neither do they have it, nor would you want to wait until they release a solution.

At this point I gave it a few minutes, adapted Ralf’s code, applied Olivier’s suggestion, wrapped it in a little plugin et voilà:

Install

Use this p2 repository, check two features…

…install and restart, and you’re ready to use your “Medal” IDE:

So that’s basically the same as what Ralf already showed except:

It’s a module!

In contrast to Ralf’s patch of the JDT/Core my little plugin can be easily deployed and installed into any Eclipse (≥3.6.0). It just requires another small feature called “Object Teams Equinox Integration” or “OT/Equinox” for short.

So we’re all going to use our own private dialects of Java? Hm, firstly, once compiled this is of course plain Java; you wouldn’t be able to tell that the sources looked “funny”.

And: here’s the Boss Key: when somebody sniffs about your monitor, a single click will make Eclipse behave “normal”:

In other words, you can dynamically enable/disable this feature at runtime. The OT/Equinox Monitor view in the snapshot shows all known Team instances in the currently running IDE, and the little check boxes simply send activate() / deactivate() messages to the selected instance.

I coined the name Medal as our own playground for Java extensions of this kind. Feel free to suggest/contribute more!

Implementation

For a quick introduction on how to set up an OT/Equinox project in Eclipse I’d suggest our Quick Start (let me know if anything is unclear). For this particular case the key is in defining one little extension:

which the package explorer will render as:

Drilling into the Team class ThrowString you’ll see:

The Team class contains two Role classes:

- Role DontReport binds to class ProblemReporter (not shown in the Outline), intercepts calls to ProblemReporter.cannotThrowType and, if the type in question is String, simply ignores the “error”

- Role Generate binds to class ThrowStatement to make sure the correct bytecodes for creating a RuntimeException are generated

Also, in the Outline you see both kinds of method bindings that are supported by Object Teams:

- getExceptionType/setExceptionType are getter/setter definitions for field ThrowStatement.exceptionType (callout-to-field in OT/J jargon)

- Things like “adjustType <- after resolve” establish method call interception (callin bindings in OT/J jargon – the “after” is symbolized by the specific icon)

The actual implementation is really simple, like (full listing of the first role):

    protected class DontReport playedBy ProblemReporter {
        cannotThrowType <- replace cannotThrowType;

        @SuppressWarnings("basecall")
        callin void cannotThrowType(ASTNode exception, TypeBinding exceptionType) {
            if (exceptionType.id != TypeIds.T_JavaLangString) // do the actual reporting only if it's not a string
                base.cannotThrowType(exception, exceptionType);
        }
    }

The base-call (base.cannotThrowType) delegates back to the original method, but only if the exception type is not String. The @SuppressWarnings annotation documents that not all control flows through this method will issue a base-call, a decision that deserves a second thought as it means the base plugin (here JDT/Core) does not perform its task fully as usual.
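If you don’t read OT/J yet, the control flow of this role can be approximated in plain Java by an overriding method that only conditionally calls super. This is an analogy, not how OT/J works internally: Reporter is a made-up stand-in for the real ProblemReporter, and unlike a subclass, a callin binding intercepts calls on the existing base objects without anybody having to instantiate a different class.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for the base class; not the real JDT ProblemReporter.
class Reporter {
    final List<String> problems = new ArrayList<>();

    void cannotThrowType(String typeName) {
        problems.add("Cannot throw type " + typeName);
    }
}

// Plain-Java approximation of a "replace" callin with a conditional base-call.
class LenientReporter extends Reporter {
    @Override
    void cannotThrowType(String typeName) {
        if (!"java.lang.String".equals(typeName)) {
            super.cannotThrowType(typeName); // plays the part of base.cannotThrowType(..)
        }
        // For String nothing is reported: the original behaviour is skipped.
    }
}

class ConditionalBaseCallDemo {
    public static void main(String[] args) {
        Reporter reporter = new LenientReporter();
        reporter.cannotThrowType("java.lang.String");
        reporter.cannotThrowType("java.lang.Integer");
        System.out.println(reporter.problems);
    }
}
```

The branch without the super call is the counterpart of what the "basecall" warning is about: on that path the original method never runs.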

Intercepting resolve has the purpose of replacing type String with RuntimeException so that other parts of the Compiler and the IDE see a well-typed structure.

The method that performs the actual work is generateCode. Since this method is essentially based on the original implementation, the best way to see the difference is (select either the callin method or the callin binding):

which gives you this compare editor:

This neatly shows the two code blocks I inserted, one for creating the RuntimeException instance, the other for invoking its constructor. Or, if you just want to read the full role method:

    /* This method is partly copied from the base method. */
    @SuppressWarnings({"basecall", "inferredcallout"})
    callin void generateCode(BlockScope currentScope, CodeStream codeStream) {
        if ((this.bits & ASTNode.IsReachable) == 0)
            return;
        int pc = codeStream.position;
        // create a new RuntimeException:
        ReferenceBinding runtimeExceptionBinding = (ReferenceBinding) this.exceptionType;
        codeStream.new_(runtimeExceptionBinding);
        codeStream.dup();
        // generate the code for the original String expression:
        this.exception.generateCode(currentScope, codeStream, true);
        // call the constructor RuntimeException(String):
        MethodBinding ctor = runtimeExceptionBinding.getExactConstructor(new TypeBinding[]{this.stringType});
        codeStream.invoke(Opcodes.OPC_invokespecial, ctor, runtimeExceptionBinding);
        // throw it:
        codeStream.athrow();
        codeStream.recordPositionsFrom(pc, this.sourceStart);
    }
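Read as source code, the generated instruction sequence (new, dup, the String expression, invokespecial on the constructor, athrow) is what a standard compiler emits for an ordinary throw of a freshly created RuntimeException. A plain-Java equivalent of the shorthand would therefore look roughly like this; the class and method names are made up for the example.

```java
class ThrowStringDemo {
    // Plain-Java counterpart of the shorthand "throw message;".
    static void fail(String message) {
        throw new RuntimeException(message);
    }

    public static void main(String[] args) {
        try {
            fail("this is wrong");
        } catch (RuntimeException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

This also illustrates the earlier remark that the compiled result is plain Java: nothing in the class file hints at the shorthand used in the source.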

You may also fetch the full sources of this little plug-in (plus a feature for easy deployment) to play around with and extend.

Next?

Ralf mentioned that he’d like to play with ways for also extending the syntax. For a starter on how this can be done with Object Teams I recommend my previous posts IDE for your own language embedded in Java? (part 1) and part 2.

New Refactoring for OT/J: Change Method Signature

IDE Innovation

Every now and then some folks report that the IDE they’re developing now supports this or that cool new feature. Sometimes I envy them for such progress – but more often than not I end up realizing that the OTDT already has that feature or something very similar. Is that just my personal bias (which certainly I have) or are we cheating in some way, or what?

There’s a little detail in the design of the OTDT that makes a difference such as can normally only be achieved by cheating: by the way the OTDT extends and adapts the JDT, it is like saying we’re starting the race not at the line saying “START” but at the other one saying “FINISH”, and running on from there. While many projects define “JDT-like user experience” as their long-term goal, the OTDT has basically had this since the first release. How come? The OTDT basically is the JDT, with adaptations.

There’s a fine point in the word basically. To tell the truth, not every feature that the JDT supports for Java development is automatically fully available in the OTDT for OT/J development. In fact most JDT features need adaptation to provide equal convenience for OT/J development. It’s just that all these adaptations can be brought into the system very very easily – thanks to the self-application of OT/Equinox. And now, here is actually an example of a JDT feature that lacked OT/J support – until yesterday:

Change Method Signature Refactoring

If you refactor mercilessly, the “Change Method Signature” refactoring is certainly one of your friends. Add/rename/remove/reshuffle parameters of a method without (too easily) breaking existing code, cool. It knows about the connections from method invocation to method declaration and about overriding. That’s good enough for Java, but not good enough for OT/J, since OT/J introduces method bindings (“callout” and “callin”) that create a wiring between methods of different objects. Obviously, if one of the methods being wired changes its signature, so must the method binding.

Technically, the JDT implementation of that refactoring bailed out when it asked a parameter/argument for its parent in the AST and found neither a method declaration nor a method invocation. The JDT refactoring does not know about OT/J method bindings, so it just failed to update those.

After a little coding, this is what happens now when you apply “Change Method Signature” on a piece of OT/J code. Assume you have a plain Java class:

    public class BaseClass {
        public void bm(int i, boolean b) { }
        void other() {
            bm(3, false);
        }
    }

and a TeamClass whose contained RoleClass is bound to BaseClass:

    public team class TeamClass {
        protected class RoleClass playedBy BaseClass {
            void rm(int i2, boolean b2) <- after void bm(int i, boolean b);
            private void rm(int i2, boolean b2) {
                System.out.println((b2?i2:-i2));
            }
        }
    }

Here the right-hand side of the callin binding (the line containing <- after) refers to the normal method bm(int,boolean) defined in BaseClass.

What happens if you start messing around with the signature of bm?

Like:

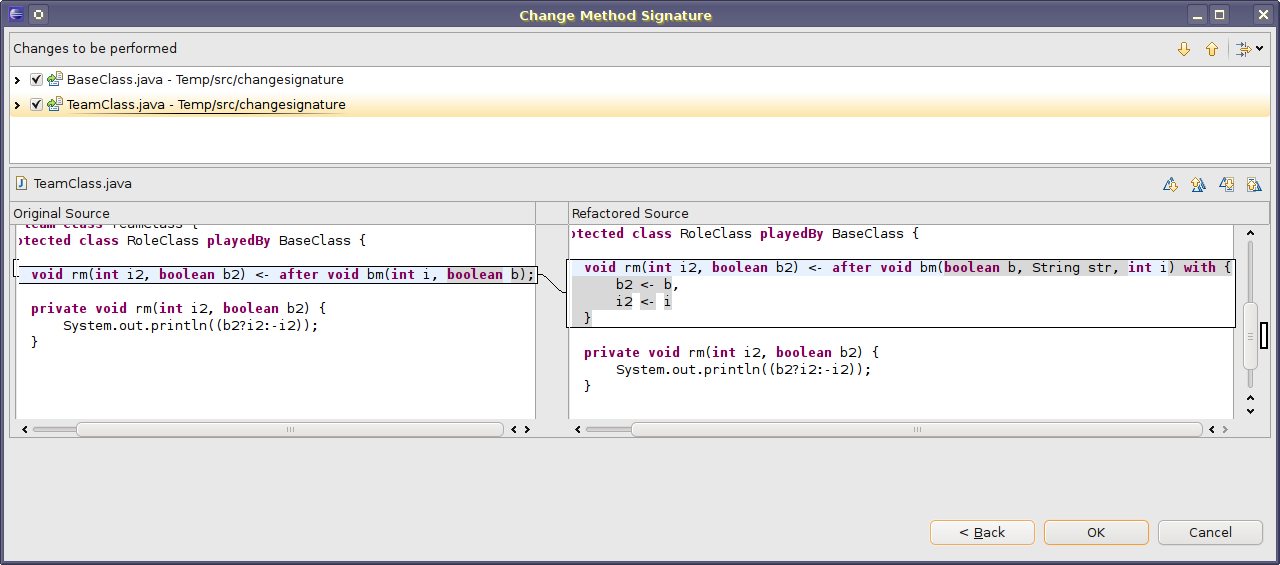

I.e., we are adding a parameter str and also change the order of parameters (from i,b to b,str,i). I don’t have to tell you what this refactoring does to BaseClass, but here’s the preview of those changes affecting TeamClass:

(sorry the screenshot is a bit wide, you may have to click to really see).

The preview shows that the refactoring will do three things:

- Update the right-hand side of the method binding.

- Add a parameter mapping (the part starting with “with”) to ensure that the role method rm receives the arguments it needs, the way it needs them.

- Do not update any part of the role implementation, because that’s what the parameter mapping is for: shield the role implementation from any outside changes.

Some words on these parameter mappings: each element like “b2 <- b” feeds a value from the right-hand side (representing things at the baseclass side) into the parameter at the left-hand side (representing the role). The list of parameter mappings is not ordered, which means further swapping of base side parameters requires no further action. And indeed the current implementation of the refactoring does not attempt to adjust an existing parameter mapping (which might be a quite complex task). If adjustments are required which the refactoring cannot perform automatically, it will inform the user that perhaps a parameter mapping may need manual adjustment.
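For readers who think in plain Java, the effect of a mapping such as “i2 <- i, b2 <- b” can be imitated by a small adapter. This is only an analogy under stated assumptions: OT/J weaves the binding declaratively, while the class ParameterMappingSketch and its method afterBm below are hypothetical names invented for this sketch and are not part of the OTDT.

```java
import java.util.ArrayList;
import java.util.List;

/** Plain-Java analogy of a callin binding with a parameter mapping (all names hypothetical). */
class ParameterMappingSketch {

    /** Collects what the role method would print, so the effect can be inspected. */
    static final List<String> log = new ArrayList<>();

    /** Role side: untouched by the refactoring, still takes (int, boolean). */
    static void rm(int i2, boolean b2) {
        log.add(String.valueOf(b2 ? i2 : -i2));
    }

    /** Base side after the refactoring: new order (b, str, i) including the added parameter str. */
    static void bm(boolean b, String str, int i) {
        // ... base behavior would go here ...
        afterBm(b, str, i); // stands in for the woven "after" callin
    }

    /**
     * Stands in for the mapping: with { i2 <- i, b2 <- b }.
     * Each role parameter is fed by name from a base parameter, so the order
     * of the base parameters does not matter and str is simply not used.
     */
    static void afterBm(boolean b, String str, int i) {
        rm(/* i2 <- */ i, /* b2 <- */ b);
    }

    public static void main(String[] args) {
        bm(false, "some text", 3);
        bm(true, "some text", 3);
        System.out.println(log); // the role sees the same values as before the refactoring
    }
}
```

Because the adapter picks base parameters by name, reshuffling the base signature again would only change the parameter list of afterBm, not the call to rm.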

The refactoring applies no AI to guess what the intended solution should look like, but it performs a number of obvious adaptations and gives note when these adaptations may not suffice and manual cleanup may be needed.

Implementation

Those who have read previous posts may (almost) know the kind of statistics that follows:

- 299 LOC implementation

- 315 LOC testcode

- 170 LOC testdata

I should really show one of these OT/J based implementations, one of these days. For this post I will just give you an Outline, literally:

Role class Processor is bound to the JDT’s ChangeSignatureProcessor, and the nested roles OccurrenceUpdate and MethodSpecUpdate are bound to two inner classes of ChangeSignatureProcessor, which shows how even class nesting at the base level can be mapped to the team & role level. Instances of the innermost roles will only ever come into being if a Processor role has detected that it needs to work in order to handle OT/J specific code. Inside each role – apart from regular fields and methods – you see those green arrow-things, denoting method bindings between a role and its base. The highlighted binding to createOccurrenceUpdate is actually the initial entry into the logic of this module. Further down you see how the base behavior reshuffleElements is intercepted to additionally add parameter mappings if needed (and yes, I’m hiding the details of role MethodSpecUpdate, but there are no secrets inside, just more methods and more method bindings).

Voilà, we indeed have a new refactoring for OT/J. I’ve been planning this one for a while but in the end it took me little more than a day 🙂

Object Teams rocks :)

During the last week or so I modernized a part of the Object Teams Development Tooling (OTDT) that had been developed some 5 years ago: the type hierarchy for OT/J. I’ll mention the basic requirements for this engine in a minute. While most of the OTDT succeeds in reusing functionality from the JDT, the type hierarchy was implemented as a full replacement of the original. This is a pretty involved little machine, which took weeks and months to get right. It provides its logic to components like Refactoring and the Type Hierarchy View.

On the one hand this engine worked well for most uses, but over so many years we did not succeed in solving two remaining issues:

- Give a faithful implementation for getSuperclass()

- This is tricky because a role class in OT/J can have more than one superclass. Failing to implement this method we could not support the “traditional” mode of the hierarchy view that shows both the tree of subclasses of a focus type plus the path of superclasses up to Object (this upwards path relies on getSuperclass).

- Support region based hierarchies

- Here the type hierarchy is not only computed for supertypes and subtypes of one given focus type, but full inheritance structure is computed for a set of types (a “region”). This strategy is used by many JDT Refactorings, and thus we could not precisely adapt some of these for OT/J.

In analyzing this situation I had to weigh these issues:

- In its current state the implementation strategy was a show stopper for one mode of the type hierarchy view and for precise analysis in several refactorings.

- Adding a region based variant of our hierarchy implementation would mean re-inventing lots of stuff, both from the JDT and from our own development.

- All this seemed to suggest discarding our own implementation and starting over from scratch.

Object Teams to the rescue: Let’s re-build Rome in ten days.

As mentioned in my previous post, the strength of Object Teams lies in building layers: each module sits in one layer, and integration between layers is given by declarative bindings:

Applying this to the issue at hand we now actually have three layers with quite different structures:

Java Model

The bottom layer is the Java model that implements the containment tree of Java elements: a project contains source folders, containing packages, containing compilation units, containing types, containing members. In this model each Java type is represented by an instance of IType.

Java Type Hierarchy

This engine from the JDT maintains the graph of inheritance information as a second way for navigating between ITypes. Interestingly, this module pretty closely simulates what Object Teams does natively; I may come back to that in a later post.

Object Teams Type Hierarchy

As an extension of Java, OT/J naturally supports the normal inheritance using extends, but there is a second way in which an inheritance link can be established: based on inheritance of the enclosing team:

    team class EcoSystem {
        protected class Project { }
        protected class IDEProject extends Project { }
    }
    team class Eclipse extends EcoSystem {
        @Override protected class Project { }
        @Override protected class IDEProject extends Project { }
    }

Here, Eclipse.Project is an implicit subclass of EcoSystem.Project simply because Eclipse is a subclass of EcoSystem and both classes have the same simple name Project. I will not go into motivation and consequences of this language design (that’ll be a separate post — which I actually promised many weeks ago).
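The rule “same simple name in the super team” can be spelled out as a tiny lookup. The following is a toy model in plain Java, not the way the OT/J compiler or the OTDT represents teams: the class ImplicitInheritanceSketch, its two tables and the method implicitSuperclass are hypothetical, and the sketch only looks at the direct super team.

```java
import java.util.Map;
import java.util.Optional;
import java.util.Set;

/** Toy model of OT/J implicit inheritance (all names hypothetical, not OTDT API). */
class ImplicitInheritanceSketch {

    /** Team -> its super team (only teams that have one are listed). */
    static final Map<String, String> SUPER_TEAM = Map.of("Eclipse", "EcoSystem");

    /** Team -> simple names of the roles it contains. */
    static final Map<String, Set<String>> ROLES = Map.of(
            "EcoSystem", Set.of("Project", "IDEProject"),
            "Eclipse", Set.of("Project", "IDEProject"));

    /**
     * A role Team.Name implicitly inherits from SuperTeam.Name
     * if the super team contains a role with the same simple name.
     */
    static Optional<String> implicitSuperclass(String team, String roleName) {
        String superTeam = SUPER_TEAM.get(team);
        if (superTeam == null || !ROLES.getOrDefault(superTeam, Set.of()).contains(roleName)) {
            return Optional.empty();
        }
        return Optional.of(superTeam + "." + roleName);
    }

    public static void main(String[] args) {
        System.out.println(implicitSuperclass("Eclipse", "Project"));   // Optional[EcoSystem.Project]
        System.out.println(implicitSuperclass("EcoSystem", "Project")); // Optional.empty
    }
}
```

Note that no extends clause between the two Project roles is consulted anywhere: the link follows purely from the team hierarchy plus the name match.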

Looking at the technical challenge we see that the implicit inheritance in OT/J adds a third layer, in which classes are connected in yet another graph.

Three Layers — Three Graphs

Looking at the IType representation of Eclipse.IDEProject we can ask three questions:

| Question | Code | Answer |
|---|---|---|
| What is your containing element? | type.getParent() | Eclipse |
| What is your superclass? | hierarchy.getSuperclass(type) | Eclipse.Project |
| What is your implicit superclass? | ?? | EcoSystem.IDEProject |

Each question is implemented in a different layer of the system. Things get a little complicated when asking a type for all its super types, which requires collecting the answers from both the JDT hierarchy layer and the OT hierarchy. Yet, the trickiest part was giving an implementation for getSuperclass().

An "Impossible" Requirement

There is a hidden assumption behind method getSuperclass() which is pervasive in large parts of the implementation, especially most refactorings:

When searching all methods that a type inherits from other types, looping over getSuperclass() until you reach Object will bring you to all the classes you need to consider, like so:

    IType currentType = /* some init */;
    while (currentType != null) {
        findMethods(currentType, /*some more arguments*/);
        currentType = hierarchy.getSuperclass(currentType);
    }

There are lots and lots of places implemented using this pattern. But, how do you do that if a class has multiple superclasses?? I cannot change all the existing code to use recursive functions rather than this single loop!

Looking at Eclipse.IDEProject we have two direct superclasses: Eclipse.Project (normal inheritance, “extends”) and EcoSystem.IDEProject (OT/J implicit inheritance), which cannot both be answered by a single call to getSuperclass(). The programming language theory behind OT/J, however, has a simple answer: linearization. Thus, the superclasses of Eclipse.IDEProject are:

- Eclipse.IDEProject → EcoSystem.IDEProject → Eclipse.Project → EcoSystem.Project

… in this order. And this is how this shall be rendered in the hierarchy view:

The final challenge is what this query should answer:

getSuperclass(ecoSystemIDEProject);

According to the above linearization we should answer: Eclipse.Project, but only if we are in the context of the superclass chain of Eclipse.IDEProject. Talking directly to EcoSystem.IDEProject we should get EcoSystem.Project! In other words: the function needs to be smarter than what it can derive from its arguments.
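The linearization quoted above can be reproduced with a few lines of plain Java. This is a simplified sketch, not the algorithm from the OT/J language definition or from the OTDT: it walks the implicit superclass before the explicit one and keeps only the last occurrence of each class, which happens to yield the expected order for this example. The class LinearizationSketch and its tables are hypothetical, and Object is left out of the chain.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;

/** Toy linearization of role superclasses (hypothetical names, simplified algorithm). */
class LinearizationSketch {

    /** Implicit superclass (same role name in the super team), if any. */
    static final Map<String, String> IMPLICIT = Map.of(
            "Eclipse.Project", "EcoSystem.Project",
            "Eclipse.IDEProject", "EcoSystem.IDEProject");

    /** Explicit superclass (declared with "extends"), if any. */
    static final Map<String, String> EXPLICIT = Map.of(
            "EcoSystem.IDEProject", "EcoSystem.Project",
            "Eclipse.IDEProject", "Eclipse.Project");

    /** Pre-order walk: the type itself, then its implicit branch, then its explicit branch. */
    static void walk(String type, List<String> out) {
        if (type == null) return;
        out.add(type);
        walk(IMPLICIT.get(type), out);
        walk(EXPLICIT.get(type), out);
    }

    /** Linearize by keeping only the last occurrence of every type found by the walk. */
    static List<String> linearize(String focus) {
        List<String> visited = new ArrayList<>();
        walk(focus, visited);
        LinkedHashSet<String> fromTheBack = new LinkedHashSet<>();
        for (int i = visited.size() - 1; i >= 0; i--) {
            fromTheBack.add(visited.get(i)); // first hit from the back is the last occurrence
        }
        List<String> result = new ArrayList<>(fromTheBack);
        Collections.reverse(result);
        return result;
    }

    public static void main(String[] args) {
        // [Eclipse.IDEProject, EcoSystem.IDEProject, Eclipse.Project, EcoSystem.Project]
        System.out.println(linearize("Eclipse.IDEProject"));
        // [EcoSystem.IDEProject, EcoSystem.Project]
        System.out.println(linearize("EcoSystem.IDEProject"));
    }
}
```

The two printed chains show the dilemma in data form: the element following EcoSystem.IDEProject is Eclipse.Project in the first chain but EcoSystem.Project in the second.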

Layer Instances for each Situation

Let’s go back to the layer thing:

At the bottom you see the Java model (as rendered by the package explorer). In the top layer you see the OT/J type hierarchy (let’s forget about the middle layer for now). Two essential concepts can be illustrated by this picture:

- Each layer is populated with objects and while each layer owns its objects, those objects connected with a red line between layers are almost the same, they represent the same concept.

- The top layer can be instantiated multiple times: for each focus type you create a new OT/J hierarchy instance, populated with a fresh set of objects.

It is the second bullet that resolves the “impossible” requirement: the objects within each layer instance are wired differently, implementing different traversals. Depending on the focus type, each layer may answer the getSuperclass(type) question differently, even for the same argument.
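In plain Java the idea of “one freshly wired instance per focus type” might look roughly like the sketch below. It is not the OTTypeHierarchies implementation: the class FocusHierarchySketch is hypothetical, types are represented by plain strings instead of IType, and each instance is handed its superclass chain ready-made instead of computing it.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** One hierarchy instance per focus type, each with its own wiring (hypothetical names). */
class FocusHierarchySketch {

    /** Maps each type known to this instance to the next type in the focus type's chain. */
    private final Map<String, String> next = new HashMap<>();

    /** chain: the linearized superclasses of the focus type, starting with the focus type itself. */
    FocusHierarchySketch(List<String> chain) {
        for (int i = 0; i + 1 < chain.size(); i++) {
            next.put(chain.get(i), chain.get(i + 1));
        }
    }

    /** Same signature in every instance, but the answer depends on how this instance is wired. */
    String getSuperclass(String type) {
        return next.get(type); // null once the end of the chain is reached
    }

    public static void main(String[] args) {
        FocusHierarchySketch forEclipseIDE = new FocusHierarchySketch(List.of(
                "Eclipse.IDEProject", "EcoSystem.IDEProject", "Eclipse.Project", "EcoSystem.Project"));
        FocusHierarchySketch forEcoSystemIDE = new FocusHierarchySketch(List.of(
                "EcoSystem.IDEProject", "EcoSystem.Project"));

        // Same argument, different layer instance, different answer:
        System.out.println(forEclipseIDE.getSuperclass("EcoSystem.IDEProject"));   // Eclipse.Project
        System.out.println(forEcoSystemIDE.getSuperclass("EcoSystem.IDEProject")); // EcoSystem.Project

        // The familiar single loop still visits every class of the chain:
        for (String t = "Eclipse.IDEProject"; t != null; t = forEclipseIDE.getSuperclass(t)) {
            System.out.println("visiting " + t);
        }
    }
}
```

Client code written against the single-loop pattern needs no change here; the context lives in the instance it talks to, not in the arguments it passes.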

The first bullet answers how these layers are integrated into a system: Conceptually we are speaking about the same Java model elements (IType), but in each layer these objects are connected in a specific way, as suits the task at hand. In other words, we superimpose a different graph structure depending on our current context.

Inside the hierarchy layer, we actually do not handle IType instances directly, but we have roles that represent one given IType each. Those roles contain all the inheritance links needed for answering the various questions about inheritance relations (direct/indirect, explicit/implicit, super/sub).

A cool thing about Object Teams is that having different sets of objects in different layers (teams) doesn’t make the program more complex, because I can pass an object from one layer into methods of another layer and the language will quite automagically translate it into the object that sits at the other end of that red line in the picture above. Although each layer has its own view, they “know” that they are basically talking about the same stuff (sounds like real life, doesn’t it?).
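What the language does automatically here (OT/J calls the two directions lifting and lowering) has to be done by hand in plain Java. The sketch below imitates it with a per-layer lookup table; the classes ModelType, TypeRole and HierarchyLayer are hypothetical stand-ins and do not mirror the real OTDT types.

```java
import java.util.IdentityHashMap;
import java.util.Map;

/** Plain-Java imitation of translating objects between layers (hypothetical names). */
class LayerTranslationSketch {

    /** Stands in for a Java model element such as an IType. */
    static final class ModelType {
        final String name;
        ModelType(String name) { this.name = name; }
    }

    /** Stands in for a role: the layer's own view of one model element. */
    static final class TypeRole {
        final ModelType base;   // the object at the other end of the "red line"
        TypeRole superRole;     // layer-specific wiring, unknown to the model
        TypeRole(ModelType base) { this.base = base; }
    }

    /** Stands in for a team instance: it owns its roles, one per model element. */
    static final class HierarchyLayer {
        private final Map<ModelType, TypeRole> roles = new IdentityHashMap<>();

        /** Model element to role of this layer: created on first use, reused afterwards. */
        TypeRole roleFor(ModelType type) {
            return roles.computeIfAbsent(type, TypeRole::new);
        }

        void connect(ModelType sub, ModelType sup) {
            roleFor(sub).superRole = roleFor(sup);
        }

        /** Clients pass and receive model elements; roles never leave the layer. */
        ModelType getSuperclass(ModelType type) {
            TypeRole sup = roleFor(type).superRole;
            return sup == null ? null : sup.base;
        }
    }

    public static void main(String[] args) {
        ModelType project = new ModelType("Eclipse.Project");
        ModelType ideProject = new ModelType("Eclipse.IDEProject");

        HierarchyLayer layer = new HierarchyLayer();
        layer.connect(ideProject, project);

        System.out.println(layer.getSuperclass(ideProject).name);              // Eclipse.Project
        System.out.println(layer.roleFor(project) == layer.roleFor(project));  // true: one role per element
    }
}
```

In OT/J the table and both translation steps disappear from the source code, which is why adding layers does not add this kind of bookkeeping.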

Summing up

OK, I haven’t shown any code of the new hierarchy implementation (yet), but here’s a sketch of before-vs.-after:

- Code Size

- The new implementation of the hierarchy engine has about half the size of the previous implementation (because it need not repeat anything that’s already implemented in the Java hierarchy).

- Integration

- The previous implementation had to be individually integrated into each client module that normally uses Java hierarchies and then should use an OT hierarchy instead. After the re-implementation, the OT hierarchy is transparently integrated such that no clients need to be adapted (accounting for even more code that could be discarded).

- Linearization

- Using the new implementation, getSuperclass() answers the correct, context sensitive linearization, as shown in the screenshot above, which the old implementation failed to solve.

- Region based hierarchies

- The old implementation was incompatible with building a hierarchy for a region. For the new implementation it doesn’t matter whether it’s built for a single focus type or for a region, so, many clients now work better without any additional efforts.

The previous implementation only scratched at the surface – literally worked around the actual issue (which is: the Java type hierarchy is not aware of OT/J implicit inheritance). The new solution solves the issue right at its core: the new team OTTypeHierarchies assists the original type hierarchy (such that its answers indeed respect OT/J’s implicit inheritance). By performing this adaptation at the issue’s core, the solution automatically radiates to all clients. So I expect that investing a few days in re-writing the implementation will pay off in no time. Especially, improving the (already strong) refactoring support for OT/J is now much, much easier.

Moving your solution into the core could easily result in a design where a few bloated and tangled core modules do all the work, mocking the very idea of modularity. This can be avoided by a technology that is based on some concept of perspectives and self-contained layers, as supported by teams in OT/J.

Need I say, how much fun this re-write was? 🙂