Try to keep config in the environment.

cat << EOF > /etc/default/marathon

MARATHON_MASTER=zk://127.0.0.1:2181/mesos

MARATHON_ZK=zk://127.0.0.1:2181/marathon

EOF

Remember to replace 127.0.0.1:2181 with the actual address of your ZooKeeper server.
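As a rough illustration of what a program does with such a variable, the sketch below reads MARATHON_MASTER from the environment and splits the zk:// URL into its host list and znode path. The helper names are hypothetical and this is not Marathon's own parsing code, only a minimal sketch of the idea.

```python
import os
from urllib.parse import urlparse


def parse_zk_url(url):
    """Split a zk:// URL into (list of host:port strings, znode path)."""
    parsed = urlparse(url)
    if parsed.scheme != "zk":
        raise ValueError(f"expected a zk:// URL, got {url!r}")
    # A ZooKeeper ensemble is written as a comma-separated host list.
    hosts = parsed.netloc.split(",")
    return hosts, parsed.path


def master_from_env(environ=os.environ):
    """Read MARATHON_MASTER, falling back to the placeholder from the example."""
    url = environ.get("MARATHON_MASTER", "zk://127.0.0.1:2181/mesos")
    return parse_zk_url(url)
```

Calling master_from_env() with the file above loaded into the environment would return the single host 127.0.0.1:2181 and the path /mesos.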

# post_loc.txt contains the JSON you want to post
# -p means to POST it
# -H adds an Auth header (could be Basic or Token)
# -T sets the Content-Type
# -c is concurrent clients
# -n is the number of requests to run in the test
ab -p post_loc.txt -T application/json -H 'Authorization: Token abcd1234' -c 10 -n 2000 http://example.com/api/v1/locations/

While configuring Apache with Perl CGI scripts, I don’t know why index.cgi/index.pl are displayed as plain text instead of being executed.

When the browser prints the source of the script, Apache is not treating the file as a program to run. The two lines below should be your first step to solve this. AddHandler makes sure files ending in .cgi and .pl are treated as CGI scripts, and the +ExecCGI option allows them to be executed. Also make sure the first line of your script points to the correct perl binary location.

AddHandler cgi-script .cgi .pl
Options +FollowSymLinks +ExecCGI
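To check the "correct perl binary location" point without starting Apache, a small script can read the first line of the CGI file and test whether the interpreter it names exists. This is only a sketch; the function names are made up for illustration.

```python
import os


def parse_shebang(first_line):
    """Return the interpreter path named on a '#!' line, or None if there is none."""
    line = first_line.strip()
    if not line.startswith("#!"):
        return None
    parts = line[2:].split()
    return parts[0] if parts else None


def script_interpreter_exists(script_path):
    """True if the script's shebang names a file that exists on this machine."""
    with open(script_path, "rb") as handle:
        first_line = handle.readline().decode("utf-8", errors="replace")
    interpreter = parse_shebang(first_line)
    return interpreter is not None and os.path.exists(interpreter)
```

For a script starting with #!/usr/bin/perl, script_interpreter_exists returns False when /usr/bin/perl is missing, which is one reason a script fails to run.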

Also, there are some mistakes/misconfiguration points in your httpd.conf:

ScriptAlias /cgi-bin/ "D:\webserver\cgi-bin"

In httpd.conf, you should replace your <Directory "D:\webserver"> part with the following:

<Directory "D:\webserver\cgi-bin">
AddHandler cgi-script .cgi .pl
Options +FollowSymLinks +ExecCGI
AllowOverride None
</Directory>

perl test.cgi

Check the permissions on the cgi-bin directory and your CGI script, and check that you can create a directory or file there with write permissions. If not, create a cgi-bin directory at some other place where you do have write permissions, and use its path in the alias and directory attributes in httpd.conf instead.

(Extra comment, not by the original answerer: You may also need to enable the cgi module. For me, the final step to getting cgi to work on a fresh install of Apache 2 was sudo a2enmod cgi. Before I did that, the website simply showed me the contents of the script.)

sudo a2enmod cgi

Wazuh helps you to gain deeper security visibility into your infrastructure by monitoring hosts at an operating system and application level. This solution, based on lightweight multi-platform agents, provides the following capabilities:

Wazuh monitors the file system, identifying changes in content, permissions, ownership, and attributes of files that you need to keep an eye on.

Agents scan the system looking for malware, rootkits or suspicious anomalies. They can detect hidden files, cloaked processes or unregistered network listeners, as well as inconsistencies in system call responses.

Wazuh agents read operating system and application logs, and securely forward them to a central manager for rule-based analysis and storage. The Wazuh rules help make you aware of application or system errors, misconfigurations, attempted and/or successful malicious activities, policy violations and a variety of other security and operational issues.

Wazuh monitors configuration files to ensure they are compliant with your security policies, standards and/or hardening guides. Agents perform periodic scans to detect applications that are known to be vulnerable, unpatched, or insecurely configured.

This diverse set of capabilities is provided by integrating OSSEC, OpenSCAP and Elastic Stack into a unified solution and simplifying their configuration and management.

Execute the following commands to install and configure Wazuh:

apt-get update

apt-get install curl apt-transport-https lsb-release gnupg2

curl -s https://packages.wazuh.com/key/GPG-KEY-WAZUH | apt-key add -

echo "deb https://packages.wazuh.com/3.x/apt/ stable main" | tee -a /etc/apt/sources.list.d/wazuh.list

apt-get update

apt-get install wazuh-manager

systemctl status wazuh-manager

curl -sL https://deb.nodesource.com/setup_8.x | bash -

apt-get install gcc g++ make

apt-get install -y nodejs

curl -sL https://dl.yarnpkg.com/debian/pubkey.gpg | sudo apt-key add -

echo "deb https://dl.yarnpkg.com/debian/ stable main" | sudo tee /etc/apt/sources.list.d/yarn.list

sudo apt-get update && sudo apt-get install yarn

apt-get install nodejs

apt-get install wazuh-api

systemctl status wazuh-api

sed -i "s/^deb/#deb/" /etc/apt/sources.list.d/wazuh.list

apt-get update

curl -s https://artifacts.elastic.co/GPG-KEY-elasticsearch | apt-key add -

echo "deb https://artifacts.elastic.co/packages/6.x/apt stable main" | tee /etc/apt/sources.list.d/elastic-6.x.list

apt-get update

apt-get install filebeat

curl -so /etc/filebeat/filebeat.yml https://raw.githubusercontent.com/wazuh/wazuh/v3.9.3/extensions/filebeat/6.x/filebeat.yml

Edit the file /etc/filebeat/filebeat.yml and replace YOUR_ELASTIC_SERVER_IP with the IP address or the hostname of the Logstash server.

apt search elasticsearch

apt-get install elasticsearch

systemctl daemon-reload

systemctl enable elasticsearch.service

systemctl start elasticsearch.service

curl https://raw.githubusercontent.com/wazuh/wazuh/v3.9.3/extensions/elasticsearch/6.x/wazuh-template.json | curl -X PUT "http://localhost:9200/_template/wazuh" -H 'Content-Type: application/json' -d @-

curl -X PUT "http://localhost:9200/*/_settings?pretty" -H 'Content-Type: application/json' -d'
{
"settings": {
"number_of_replicas" : 0
}
}
'
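The body sent to the _settings endpoint has to be one complete JSON object. A quick way to be sure of that is to build it from a data structure rather than typing it by hand; the sketch below does so with Python's json module (the function name is hypothetical).

```python
import json


def replica_settings_body(replicas=0):
    """Return the JSON request body that sets number_of_replicas."""
    return json.dumps({"settings": {"number_of_replicas": replicas}}, indent=2)


print(replica_settings_body())
```

The printed text can be passed to curl with -d, or saved to a file and sent with -d @filename.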

sed -i 's/#bootstrap.memory_lock: true/bootstrap.memory_lock: true/' /etc/elasticsearch/elasticsearch.yml

sed -i 's/^-Xms.*/-Xms12g/;s/^-Xmx.*/-Xmx12g/' /etc/elasticsearch/jvm.options

mkdir -p /etc/systemd/system/elasticsearch.service.d/

echo -e "[Service]\nLimitMEMLOCK=infinity" > /etc/systemd/system/elasticsearch.service.d/elasticsearch.conf

systemctl daemon-reload

systemctl restart elasticsearch

apt-get install logstash

curl -so /etc/logstash/conf.d/01-wazuh.conf https://raw.githubusercontent.com/wazuh/wazuh/master/extensions/logstash/6.x/01-wazuh-local.conf

systemctl daemon-reload

systemctl enable logstash.service

systemctl start logstash.service

systemctl status filebeat

systemctl start filebeat

apt-get install kibana

export NODE_OPTIONS="--max-old-space-size=3072"

sudo -u kibana /usr/share/kibana/bin/kibana-plugin install https://packages.wazuh.com/wazuhapp/wazuhapp-3.9.3_6.8.1.zip

Kibana will only listen on the loopback interface (localhost) by default. To set up Kibana to listen on all interfaces, edit the file /etc/kibana/kibana.yml, uncommenting the setting server.host. Change the value to:

server.host: "0.0.0.0"

systemctl enable kibana.service

systemctl start kibana.service

cd /var/ossec/api/configuration/auth

Create a username and password for Wazuh API. When prompted, enter the password:

node htpasswd -c user admin

systemctl restart wazuh-api

Then in the agent machine execute the following commands:

- apt-get install curl apt-transport-https lsb-release gnupg2

- curl -s https://packages.wazuh.com/key/GPG-KEY-WAZUH | apt-key add -

- echo "deb https://packages.wazuh.com/3.x/apt/ stable main" | tee /etc/apt/sources.list.d/wazuh.list

- apt-get update

- You can automate the agent registration and configuration using variables. It is necessary to define at least the variable WAZUH_MANAGER_IP. The agent will use this value to register and it will be the assigned manager for forwarding events.

WAZUH_MANAGER_IP="10.0.0.2" apt-get install wazuh-agent

- sed -i "s/^deb/#deb/" /etc/apt/sources.list.d/wazuh.list

- apt-get update

In this section, we’ll register the Wazuh API (installed on the Wazuh server) into the Wazuh App in Kibana:

Add new API.

setuid means set user ID upon execution. If the setuid bit is turned on for an executable file, a user running that file gets the permissions of the user who owns the file (setgid does the same for the owning group). You can use the ls -l or find command to see setuid programs. A setuid program displays s (or S, when the owner-execute bit is not set) in the owner-execute position of the ls output. Type the following command:

ls -l /usr/bin/passwd

Sample outputs:

-rwsr-xr-x 1 root root 42856 2009-07-31 19:29 /usr/bin/passwd
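The same check can be scripted. The sketch below tests the setuid bit in a file mode and renders the mode the way ls does; the helper names are made up for this example.

```python
import os
import stat


def is_setuid(mode):
    """True if the setuid bit is set in a numeric file mode."""
    return bool(mode & stat.S_ISUID)


def mode_string(mode):
    """Render a numeric mode the way ls -l shows it, e.g. -rwsr-xr-x."""
    return stat.filemode(mode)


def describe(path):
    """Return (ls-style mode string, setuid flag) for a file on disk."""
    mode = os.stat(path).st_mode
    return mode_string(mode), is_setuid(mode)
```

For example, mode_string(0o104755) gives -rwsr-xr-x, and a mode of 0o104655 (setuid without owner execute) gives -rwSr-xr-x.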

The following command discovers and prints any setuid files on local system:

# find / -xdev \( -perm -4000 \) -type f -print0 | xargs -0 ls -l

The s bit can be removed with the following command:

# chmod -s /path/to/file

An attacker can exploit setuid binaries using a shell script or by providing false data. Users normally should not have setuid programs installed, especially setuid to users other than themselves. For example, you should not find a setuid-enabled binary owned by root under /home/vivek/crack. These are usually Trojan horse programs.

In this example, user vivek runs the command “/usr/bin/vi /shared/financialdata.txt”, and the permissions on the vi command and the file /shared/financialdata.txt are as follows:

-rwxr-xr-x 1 root root 1871960 2009-09-21 16:57 /usr/bin/vi
-rw------- 1 root root 3960 2009-09-21 16:57 /shared/financialdata.txt

Vivek has permission to run /usr/bin/vi, but not permission to read /shared/financialdata.txt. So when vi attempts to read the file a “permission denied” error message will be displayed to vivek. However, if you set the SUID bit on the vi:

chmod u+s /usr/bin/vi
ls -l /usr/bin/vi

Now, when vivek runs this SUID program, access to /shared/financialdata.txt is granted. How does it work? The vi process runs with the effective user ID of the file's owner, so the UNIX system doesn’t think vivek is reading the file via vi; it thinks “root” is the user, and hence access is granted.
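The decision can be modelled in a few lines. This is a deliberately simplified sketch: it ignores groups, ACLs and the special treatment the kernel gives root, and the UID 1000 for vivek is an assumption made for the example.

```python
import stat


def effective_uid(real_uid, binary_owner_uid, binary_mode):
    """Return the UID a process runs as after executing the binary."""
    if binary_mode & stat.S_ISUID:
        return binary_owner_uid  # setuid: run as the owner of the binary
    return real_uid


def may_read(uid, file_owner_uid, file_mode):
    """Simplified owner/other read check (no groups, ACLs, or root override)."""
    if uid == file_owner_uid:
        return bool(file_mode & stat.S_IRUSR)
    return bool(file_mode & stat.S_IROTH)


ROOT_UID = 0
VIVEK_UID = 1000   # hypothetical UID for the user vivek
DATA_MODE = 0o600  # -rw------- root root /shared/financialdata.txt

# vi without the setuid bit: the process runs as vivek and is denied.
print(may_read(effective_uid(VIVEK_UID, ROOT_UID, 0o755), ROOT_UID, DATA_MODE))   # False
# vi with the setuid bit: the process runs as root and is allowed.
print(may_read(effective_uid(VIVEK_UID, ROOT_UID, 0o4755), ROOT_UID, DATA_MODE))  # True
```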

auditd can be used for system auditing under Linux. It can log and audit the execution of setuid binaries. Edit /etc/audit/audit.rules:

# vi /etc/audit/audit.rules

-a always,exit -F path=/bin/ping -F perm=x -F auid>=500 -F auid!=4294967295 -k privileged
-a always,exit -F path=/bin/mount -F perm=x -F auid>=500 -F auid!=4294967295 -k privileged
-a always,exit -F path=/bin/su -F perm=x -F auid>=500 -F auid!=4294967295 -k privileged
-a always,exit -F path=/bin/umount -F perm=x -F auid>=500 -F auid!=4294967295 -k privileged

Run the following command to get setuid enabled binary from /bin and add them as above:

# find /bin -type f -perm -04000
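Writing one rule per binary by hand is error-prone, so the list printed by find can be turned into rule lines mechanically. The sketch below only formats text in the same shape as the rules shown earlier; the function name and defaults are illustrative.

```python
def audit_rule(path, min_auid=500, key="privileged"):
    """Format one audit rule that logs execution of a setuid binary."""
    return (
        f"-a always,exit -F path={path} -F perm=x "
        f"-F auid>={min_auid} -F auid!=4294967295 -k {key}"
    )


def audit_rules(paths):
    """Return one rule per path, joined by newlines, ready to append to audit.rules."""
    return "\n".join(audit_rule(path) for path in paths)


print(audit_rules(["/bin/ping", "/bin/mount", "/bin/su", "/bin/umount"]))
```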

Save and close the file. Restart auditd:

# service auditd restart

Use aureport command to view audit reports:

# aureport --key --summary

# ausearch --key privileged --raw | aureport --file --summary

# ausearch --key privileged --raw | aureport -x --summary

# ausearch --key privileged --file /bin/mount --raw | aureport --user --summary -i

In this guide, we will be configuring an Ubuntu 12.04 VPS with a standard LAMP (Linux, Apache, MySQL, PHP) installation. We assume that you have already installed these basic components and have them working in a basic configuration.

To learn how to install a LAMP stack on Ubuntu, click here.

We will be referencing the software as it is in its initial state following that tutorial.

In Ubuntu’s Apache configuration, CGI scripts are actually already configured within a specific CGI directory. This directory is empty by default.

CGI scripts can be any program that has the ability to output HTML or other objects or formats that a web browser can render.

If we go to the Apache configuration directory, and look at the modules that Apache has enabled in the mods-enabled directory, we will find a file that enables this functionality:

less /etc/apache2/mods-enabled/cgi.load

LoadModule cgi_module /usr/lib/apache2/modules/mod_cgi.so

This file contains the directive that enables the CGI module. This allows us to use this functionality in our configurations.

Although the module is loaded, it does not actually serve any script content on its own. It must be enabled within a specific web environment explicitly.

We will look at the default Apache virtual host file to see how it does this:

sudo nano /etc/apache2/sites-enabled/000-default

While we are in here, let’s set the server name to reference our domain name or IP address:

<VirtualHost *:80>

ServerName your_domain_or_IP_address

ServerAdmin your_email_address

. . .

We can see a bit down in the file the part that is applicable to CGI scripts:

ScriptAlias /cgi-bin/ /usr/lib/cgi-bin/

<Directory "/usr/lib/cgi-bin">

AllowOverride None

Options +ExecCGI -MultiViews +SymLinksIfOwnerMatch

Order allow,deny

Allow from all

</Directory>

Let’s break down what this portion of the configuration is doing.

The ScriptAlias directive gives Apache permission to execute the scripts contained in a specific directory. In this case, the directory is /usr/lib/cgi-bin/. While the second argument gives the file path to the script directory, the first argument, /cgi-bin/, provides the URL path.

This means that a script called “script.pl” located in the /usr/lib/cgi-bin directory would be executed when you access:

your_domain.com/cgi-bin/script.pl

Its output would be returned to the web browser to render a page.
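The URL-to-file mapping that ScriptAlias sets up can be pictured as a simple prefix substitution. The function below is a toy model of that idea only, not Apache's actual lookup code, and its name is made up.

```python
def resolve_script_alias(url_path, alias="/cgi-bin/", target="/usr/lib/cgi-bin/"):
    """Map a URL path under the alias to a filesystem path, or None if it does not match."""
    if not url_path.startswith(alias):
        return None
    return target + url_path[len(alias):]


print(resolve_script_alias("/cgi-bin/script.pl"))  # /usr/lib/cgi-bin/script.pl
print(resolve_script_alias("/index.html"))         # None
```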

The Directory container contains rules that apply to the /usr/lib/cgi-bin directory. You will notice an option that mentions CGI:

Options +ExecCGI ...

This option is actually unnecessary since we are setting up options for a directory that has already been declared a CGI directory by ScriptAlias. It does not hurt though, so you can keep it as it is.

If you wish to put CGI files in a directory outside of the ScriptAlias, you will have to add these two directives to the directory section:

Options +ExecCGI

AddHandler cgi-script .pl .rb [extensions to be treated as CGI scripts]

When you are done examining the file, save and close it. If you made any changes, restart the web server:

sudo service apache2 restart

We will create a basic, trivial CGI script to show the steps necessary to get a script to execute correctly.

As we saw in the last section, the directory designated in our configuration for CGI scripts is /usr/lib/cgi-bin. This directory is not writeable by non-root users, so we will have to use sudo:

sudo nano /usr/lib/cgi-bin/test.pl

We gave the file a “.pl” extension because this will be a Perl script, but Apache will attempt to run any file within this directory and will pass it to the appropriate program based on its first line.

We will specify that the script should be interpreted by Perl by starting the script with:

#!/usr/bin/perl

Following this, the first thing that the script must output is the content-type that will be generated. This is necessary so that the web browser knows how to display the output it is given. We will print out the HTML content type, which is “text/html”, using Perl’s regular print function.

print "Content-type: text/html\n\n";

After this, we can do whatever functions or calculations are necessary to produce the text that we want on our website. In our example, we will not produce anything that wouldn’t be easier as just plain HTML, but you can see that this allows for dynamic content if your script was more complex.
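The shape of what a CGI script must write (a header line, a blank line, then the body) is the same in any language. As a language-neutral illustration, here is a minimal Python sketch that builds such a response; the function name is hypothetical.

```python
def cgi_response(body, content_type="text/html"):
    """Return CGI output: the Content-type header, a blank line, then the body."""
    return f"Content-type: {content_type}\n\n{body}"


page = "<html><body><h1>Hello, World.</h1></body></html>"
print(cgi_response(page))
```

Without the blank line after the header, the server cannot tell where the headers end and the page begins.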

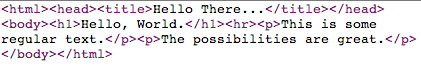

The previous two components and our actual HTML content combine to make the following script:

#!/usr/bin/perl

print "Content-type: text/html\n\n";

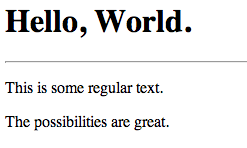

print "<html><head><title>Hello There...</title></head>";

print "<body>";

print "<h1>Hello, World.</h1><hr>";

print "<p>This is some regular text.</p>";

print "<p>The possibilities are great.</p>";

print "</body></html>";

Save and close the file.

Now, we have a file, but it isn’t marked as executable. Let’s change that:

sudo chmod 755 /usr/lib/cgi-bin/test.pl

Now, if we navigate to our domain name, followed by the CGI directory (/cgi-bin/), followed by our script name (test.pl), we should see the output of our script.

Point your browser to:

your_domain.com/cgi-bin/test.pl

You should see something that looks like this:

Not very exciting, but rendered correctly.

If we choose to view the source of the page, we will see only the arguments given to the print functions, minus the content-type header:

There are some security concerns implicit in setting a script as executable by anybody. Ideally, a script should only be able to be executed by a single, locked down user. We can set up this situation by using the suexec module.

We will actually install a modified suexec module that allows us to configure the directories in which it operates. Normally, this would not be configurable without recompiling from source.

Install the alternate module with this command:

sudo apt-get install apache2-suexec-custom

Now, we can enable the module by typing:

sudo a2enmod suexec

Next, we will create a new user that will own our script files. If we have multiple sites being served, each can have their own user and group:

sudo adduser script_user

Feel free to enter through all of the prompts (including the password prompt). This user does not need to be fleshed out.

Next, let’s create a scripts directory within this new user’s home directory:

sudo mkdir /home/script_user/scripts

Suexec requires very strict control over who can write to the directory. Let’s transfer ownership to the script_user user and change the permissions so that no one else can write to the directory:

sudo chown script_user:script_user /home/script_user/scripts

sudo chmod 755 /home/script_user/scripts

Next, let’s create a script file and copy and paste our script from above into it:

sudo -u script_user nano /home/script_user/scripts/attempt.pl

#!/usr/bin/perl

print "Content-type: text/html\n\n";

print "<html><head><title>Hello There...</title></head>";

print "<body>";

print "<h1>Hello, World.</h1><hr>";

print "<p>This is some regular text.</p>";

print "<p>The possibilities are great.</p>";

print "</body></html>";

Make it executable next. We will only let our script_user have any permissions on the file. This is what the suexec module allows us to do:

sudo chmod 700 /home/script_user/scripts/attempt.pl

Next, we will edit our Apache virtual host configuration to allow scripts to be executed by our new user.

Open the default virtual host file:

sudo nano /etc/apache2/sites-enabled/000-default

First, let’s make our CGI directory. Instead of using the ScriptAlias directive, as we did above, let’s demonstrate how to use the regular Alias directive combined with the ExecCGI option and the SetHandler directive.

Add this section:

Alias /scripts/ /home/script_user/scripts/

<Directory "/home/script_user/scripts">

Options +ExecCGI

SetHandler cgi-script

</Directory>

This allows us to access our CGI scripts by going to the “/scripts” sub-directory. To enable the suexec capabilities, add this line outside of the “Directory” section, but within the “VirtualHost” section:

SuexecUserGroup script_user script_user

Save and close the file.

We also need to specify the places that suexec will consider a valid directory. This is what our customizable version of suexec allows us to do. Open the suexec configuration file:

sudo nano /etc/apache2/suexec/www-data

At the top of this file, we just need to add the path to our scripts directory.

/home/script_user/scripts/

Save and close the file.

Now, all that’s left to do is restart the web server:

sudo service apache2 restart

If we open our browser and navigate here, we can see the results of our script:

your_domain.com/scripts/attempt.pl

Please note that with suexec configured, your normal CGI directory will not work properly, because it does not pass the rigorous tests that suexec requires. This is intended behavior to control what permissions scripts have.

Spent hours going in circles following every guide I can find on the net.

I want to have two sites running on a single apache instance, something like this – 192.168.2.8/site1 and 192.168.2.8/site2

I’ve been going round in circles, but at the moment I have two conf files in ‘sites-available (symlinked to sites-enabled)’ that look like this-

<VirtualHost *:2000>

ServerAdmin webmaster@site1.com

ServerName site1

ServerAlias site1

# Indexes + Directory Root.

DirectoryIndex index.html

DocumentRoot /home/user/site1/

# CGI Directory

ScriptAlias /cgi-bin/ /home/user/site1/cgi-bin/

Options +ExecCGI

# Logfiles

ErrorLog /home/user/site1/logs/error.log

CustomLog /home/user/site1/logs/access.log combined

</VirtualHost>

and

<VirtualHost *:3000>

ServerAdmin webmaster@site2.com

ServerName site2

ServerAlias site2

# Indexes + Directory Root.

DirectoryIndex index.html

DocumentRoot /home/user/site2/

# CGI Directory

ScriptAlias /cgi-bin/ /home/user/site2/cgi-bin/

Options +ExecCGI

# Logfiles

ErrorLog /home/user/site2/logs/error.log

CustomLog /home/user/site2/logs/access.log combined

</VirtualHost>

httpd.conf looks like this-

NameVirtualHost *:2000

NameVirtualHost *:3000

At the moment I’m getting this error-

[error] VirtualHost *:80 -- mixing * ports and non-* ports with a NameVirtualHost address is not supported, proceeding with undefined results

Ports.conf looks like this – (although no guides have mentioned any need to edit this)

NameVirtualHost *:80

Listen 80

<IfModule mod_ssl.c>

# If you add NameVirtualHost *:443 here, you will also have to change

# the VirtualHost statement in /etc/apache2/sites-available/default-ssl

# to <VirtualHost *:443>

# Server Name Indication for SSL named virtual hosts is currently not

# supported by MSIE on Windows XP.

Listen 443

</IfModule>

<IfModule mod_gnutls.c>

Listen 443

</IfModule>

Can anyone give some simple instructions to get this running? Every guide I’ve found says to do it a different way, and each one leads to different errors. I’m obviously doing something wrong but have found no clear explanation of what that might be.

Just want one site accessible on port 2000 and the other accessible on port 3000 (or whatever, just picked those ports to test with).

Your question is mixing a few different concepts. You started out saying you wanted to run sites on the same server using the same domain, but in different folders. That doesn’t require any special setup. Once you get the single domain running, you just create folders under that docroot.

Based on the rest of your question, what you really want to do is run various sites on the same server with their own domain names.

The best documentation you’ll find on the topic is the virtual host documentation in the apache manual.

There are two types of virtual hosts: name-based and IP-based. Name-based allows you to use a single IP address, while IP-based requires a different IP for each site. Based on your description above, you want to use name-based virtual hosts.

The initial error you were getting was due to the fact that you were using different ports than the NameVirtualHost line. If you really want to have sites served from ports other than 80, you’ll need to have a NameVirtualHost entry for each port.

Assuming you’re starting from scratch, this is much simpler than it may seem.

The first thing you need to do is tell apache that you’re going to use name-based virtual hosts.

NameVirtualHost *:80

Now that apache knows what you want to do, you can set up your vhost definitions:

<VirtualHost *:80>

DocumentRoot "/home/user/site1/"

ServerName site1

</VirtualHost>

<VirtualHost *:80>

DocumentRoot "/home/user/site2/"

ServerName site2

</VirtualHost>

Note that you can run as many sites as you want on the same port. The ServerName being different is enough to tell Apache which vhost to use. Also, the ServerName directive is always the domain/hostname and should never include a path.
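To make the selection rule concrete, here is a toy model of name-based virtual hosting: the Host header of the request is compared against each ServerName, and the first vhost listed acts as the default when nothing matches. It is a sketch of the idea, not Apache's implementation, and the names are made up.

```python
# (ServerName, DocumentRoot) pairs in the order they appear in the config.
VHOSTS = [
    ("site1", "/home/user/site1/"),
    ("site2", "/home/user/site2/"),
]


def select_document_root(host_header, vhosts=VHOSTS):
    """Pick the DocumentRoot whose ServerName matches the request's Host header."""
    hostname = host_header.split(":")[0].lower()  # drop an optional :port
    for server_name, document_root in vhosts:
        if server_name.lower() == hostname:
            return document_root
    return vhosts[0][1]  # no match: the first vhost is the default


print(select_document_root("site2"))       # /home/user/site2/
print(select_document_root("unknown.tld")) # /home/user/site1/
```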

Creating CA Certificate

We use this certificate only for signing the certificates that we issue to the clients and our web servers. Its key should be kept very secure. If it is disclosed, every certificate signed with it can no longer be trusted.

openssl genrsa -des3 -out ca.key 4096
openssl req -new -x509 -days 365 -key ca.key -out ca.crt

Creating a Key and CSR for the Client

Creating a client certificate is the same as creating Server certificate.

openssl req -newkey rsa:2048 -nodes -keyout client.key -out client.csr

Signing the client certificate with the previously created CA.

Note: Do not forget to change the serial each time you sign a new certificate, otherwise you may get a serial conflict error in web browsers.

[root@centos7 certs]# openssl x509 -req -days 365 -in client.csr -CA ca.crt -CAkey ca.key -set_serial 01 -out client.crt
Signature ok
subject=/C=TR/L=Default City/O=Client Certificate/CN=Client Certificate
Getting CA Private Key
Enter pass phrase for ca.key:

Creating a Key and CSR for the Server(Apache Virtual Host ankara.example.com)

openssl req -newkey rsa:2048 -nodes -keyout ankara.key -out ankara.csr

Signing the server certificate with the previously created CA.

Do not forget to change the serial number, as it may conflict with an existing one.

openssl x509 -req -days 365 -in ankara.csr -CA ca.crt -CAkey ca.key -set_serial 02 -out ankara.crt

Apache Configuration for the Authentication with Client Certificate

This sample configuration shows how to force server to request client certificate.

<Directory /srv/ankara/www>
Require all granted
</Directory>
<VirtualHost *:443>
SSLEngine On
SSLCertificateFile /etc/httpd/conf.d/certs/ankara.crt
SSLCertificateKeyFile /etc/httpd/conf.d/certs/ankara.key
ServerName ankara.example.com
DocumentRoot /srv/ankara/www
SSLVerifyClient require
SSLVerifyDepth 5
SSLCACertificateFile "/etc/httpd/conf.d/certs/ca.crt"
</VirtualHost>

The depth actually is the maximum number of intermediate certificate issuers, i.e. the number of CA certificates which are max allowed to be followed while verifying the client certificate. A depth of 0 means that self-signed client certificates are accepted only, the default depth of 1 means the client certificate can be self-signed or has to be signed by a CA which is directly known to the server (i.e. the CA’s certificate is under SSLCACertificatePath), etc.

Reference: https://httpd.apache.org/docs/2.4/mod/mod_ssl.html
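For comparison, the same "client certificate required" policy can be expressed with Python's standard ssl module. This is a rough analogue of SSLVerifyClient require, not a translation of the Apache configuration; the function names are invented for the example and the file arguments are optional so the sketch can run without certificates on disk.

```python
import ssl


def make_server_context(certfile=None, keyfile=None, cafile=None):
    """Build a TLS server context that rejects clients without a valid certificate."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    if certfile:
        # Server certificate and key, e.g. ankara.crt and ankara.key.
        context.load_cert_chain(certfile, keyfile)
    if cafile:
        # CA used to verify client certificates, e.g. ca.crt.
        context.load_verify_locations(cafile=cafile)
    context.verify_mode = ssl.CERT_REQUIRED
    return context


def requires_client_cert(context):
    """True if the context refuses handshakes without a client certificate."""
    return context.verify_mode == ssl.CERT_REQUIRED
```

A real server would pass all three file paths and wrap its listening socket with the returned context.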

Experimenting with Curl

Without specifying the client certificate

gokay@ankara:~/certs$ curl https://ankara.example.com -v
* Rebuilt URL to: https://ankara.example.com/
* Trying 192.168.122.30...
* Connected to ankara.example.com (192.168.122.30) port 443 (#0)
* found 148 certificates in /etc/ssl/certs/ca-certificates.crt
* found 597 certificates in /etc/ssl/certs
* ALPN, offering http/1.1
* gnutls_handshake() failed: Handshake failed
* Closing connection 0
curl: (35) gnutls_handshake() failed: Handshake failed

With client certificate

gokay@ankara:~/certs$ curl https://ankara.example.com --key client.key --cert client.crt --cacert ca.crt -v
* Rebuilt URL to: https://ankara.example.com/
* Trying 192.168.122.30...
* Connected to ankara.example.com (192.168.122.30) port 443 (#0)
* found 1 certificates in ca.crt
* found 597 certificates in /etc/ssl/certs
* ALPN, offering http/1.1
* SSL connection using TLS1.2 / ECDHE_RSA_AES_128_GCM_SHA256
* server certificate verification OK
* server certificate status verification SKIPPED
* common name: ankara.example.com (matched)
* server certificate expiration date OK
* server certificate activation date OK
* certificate public key: RSA
* certificate version: #1
* subject: C=TR,L=Default City,O=Ankara LTD,CN=ankara.example.com
* start date: Sun, 24 Dec 2017 10:00:20 GMT
* expire date: Mon, 24 Dec 2018 10:00:20 GMT
* issuer: C=TR,L=Default City,O=BlueTech CA,OU=CA,CN=BlueTech CA
* compression: NULL
* ALPN, server did not agree to a protocol
> GET / HTTP/1.1
> Host: ankara.example.com
> User-Agent: curl/7.47.0
> Accept: */*
>
< HTTP/1.1 200 OK
< Date: Sun, 24 Dec 2017 10:21:15 GMT
< Server: Apache/2.4.6 (CentOS) OpenSSL/1.0.2k-fips
< Last-Modified: Sun, 24 Dec 2017 10:19:51 GMT
< ETag: "3b-5611363e4a8e0"
< Accept-Ranges: bytes
< Content-Length: 59
< Content-Type: text/html; charset=UTF-8
<
<h1>My Secure Page Ankara</h1>
<h2>ankara.example.com</h2>
* Connection #0 to host ankara.example.com left intact

When I restart httpd, I get the following error. What am I missing?

[root@localhost ~]# service httpd restart

Stopping httpd: [ OK ]

Starting httpd: Syntax error on line 22 of /etc/httpd/conf.d/sites.conf:

Invalid command 'SSLEngine', perhaps misspelled or defined by a module not included in the server configuration

I have installed mod_ssl using yum install mod_ssl openssh

Package 1:mod_ssl-2.2.15-15.el6.centos.x86_64 already installed and latest version

Package openssh-5.3p1-70.el6_2.2.x86_64 already installed and latest version

My sites.conf looks like this

<VirtualHost *:80>

# ServerName shop.itmanx.com

ServerAdmin webmaster@itmanx.com

DocumentRoot /var/www/html/magento

<Directory /var/www/html>

Options -Indexes

AllowOverride All

</Directory>

ErrorLog logs/shop-error.log

CustomLog logs/shop-access.log

</VirtualHost>

<VirtualHost *:443>

ServerName secure.itmanx.com

ServerAdmin webmaster@itmanx.com

SSLEngine on

SSLCertificateFile /etc/httpd/ssl/secure.itmanx.com/server.crt

SSLCertificateKeyFile /etc/httpd/ssl/secure.itmanx.com/server.key

SSLCertificateChainFile /etc/httpd/ssl/secure.itmanx.com/chain.crt

DocumentRoot /var/www/html/magento

<Directory /var/www/html>

Options -Indexes

AllowOverride All

</Directory>

ErrorLog logs/shop-ssl-error.log

CustomLog logs/shop-ssl-access.log

</VirtualHost>

You are probably not loading the ssl module. You should have a LoadModule directive somewhere in your apache configuration files.

Something like:

LoadModule ssl_module /usr/lib64/apache2-prefork/mod_ssl.so

Usually apache configuration template has (on any distribution) a file called (something like) loadmodule.conf in which you should find a LoadModule directive for each module you load into apache at server start.

On CentOS 7 installing the package “mod_ssl” and restarting the apache server worked for me:

yum install mod_ssl

systemctl restart httpd

On many systems (Ubuntu, Suse, Debian, …) run the following command to enable Apache’s SSL mod:

sudo a2enmod ssl

You’ve probably heard of HTTP (HyperText Transfer Protocol), which is the communications protocol used by most Internet services. Broadly speaking, CGI programs receive HTTP requests, and return HTTP responses. An HTTP response header must include the status and the content-type. CGI (the interface) makes this easy.

We could hardcode a Perl script to return an HTTP response header and HTML in the body:

#!/usr/bin/env perl
use strict;
use warnings;

print <<'END';
Status: 200
Content-type: text/html

<!doctype html>
<html> HTML Goes Here </html>
END

But CGI.pm can handle the header for us:

#!/usr/bin/env perl

use strict;

use warnings;

use CGI;

my $cgi = CGI->new();

print $cgi->header;

print <<'END';

<!doctype html>

<html> HTML Goes Here </html>

END

Of course, you don’t have to just send HTML text.

#!/usr/bin/env perl

use strict;

use warnings;

use CGI;

my $cgi = CGI->new();

print $cgi->header( -type => 'text/plain' );

print <<'END';

This is now text

END

But that is not the limit, by far. The content-type is a Multipurpose Internet Mail Extension (MIME) type, and it determines how the browser handles the message once it returns. The above example treats the “This is now text” message as text, and displays it as such. If the content-type was “text/html”, it would be parsed for HTML like a web page. If it was “application/json”, it might be displayed like text, or formatted into a browsable form, depending on your browser or extensions. If it was “application/vnd.ms-excel” or even “text/csv”, the browser would likely open it in Excel or another spreadsheet program, or possibly directly into a gene sequencer, like happens to those I generate at work.

The first way to pass data is with the query string (the portion of a URI beginning with ?), which you see in URLs like https://example.com/?foo=bar. This uses the “GET” request method, and becomes available to the program as $ENV{QUERY_STRING}, which in this case is foo=bar (CGI programs receive their arguments as environment variables). But CGI provides the param method, which parses the query string into key value pairs, so you can work with them like a hash:

#!/usr/bin/perl
use strict;
use warnings;
use CGI;

my $cgi = CGI->new;
my %param = map { $_ => scalar $cgi->param($_) } $cgi->param();

print $cgi->header( -type => 'text/plain' );
print qq{PARAM:\n};
for my $k ( sort keys %param ) {
    print join ": ", $k, $param{$k};
    print "\n";
}

# PARAM:
# foo: bar

So, now, let’s make a web page like this:

<!DOCTYPE html>
<html>
<head>
</head>
<body>
    <form method="POST" action="/url/of/simple.cgi">
        <input type="text" name="foo" value="bar">
        <input type="submit">
    </form>
</body>
</html>

And click submit. The browser will send an HTTP “POST” request, with the form input as key-value pairs in the request body. CGI handles this and places the data in $cgi->param, just like with “GET”. Only, with “POST” the size of input can be much larger (URLs are generally limited to 2048 bytes by browsers).
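To make the difference between the two request methods concrete, here is a minimal sketch of roughly what a CGI program has to do without CGI.pm: read the raw input from the right place, then decode the key-value pairs. The helper names (url_decode, parse_urlencoded, raw_input) are invented for this example, and the parsing is deliberately simplified; it is not how CGI.pm is implemented.

```perl
#!/usr/bin/env perl
use strict;
use warnings;

# Decode one URL-encoded token: '+' means space, %XX is a hex-encoded byte.
sub url_decode {
    my ($s) = @_;
    $s =~ tr/+/ /;
    $s =~ s/%([0-9A-Fa-f]{2})/chr(hex($1))/ge;
    return $s;
}

# Turn "a=1&b=2" into a hash. If a key repeats, the last value wins in
# this sketch; CGI.pm itself keeps every value.
sub parse_urlencoded {
    my ($raw) = @_;
    my %param;
    for my $pair ( split /[&;]/, $raw ) {
        my ( $k, $v ) = split /=/, $pair, 2;
        next unless defined $k && length $k;
        $param{ url_decode($k) } = url_decode( $v // '' );
    }
    return %param;
}

# GET data arrives in QUERY_STRING; POST data arrives on STDIN,
# with its length given by CONTENT_LENGTH.
sub raw_input {
    my $method = $ENV{REQUEST_METHOD} // 'GET';
    return $ENV{QUERY_STRING} // '' unless $method eq 'POST';
    my $body = '';
    read( STDIN, $body, $ENV{CONTENT_LENGTH} // 0 );
    return $body;
}

my %param = parse_urlencoded( raw_input() );
print "Content-Type: text/plain\n\n";
print "$_: $param{$_}\n" for sort keys %param;
```

Either way the program ends up with the same hash, which is why $cgi->param can hide the request method from the rest of the code.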

Let’s make that form above, using the HTML-generation techniques that come with CGI.

print $cgi->start_form(
    -method => "post",
    -action => "/path/to/simple.cgi"
);
print $cgi->textfield( -name => 'foo', -value => 'bar' );
print $cgi->submit;
print $cgi->end_form;

The problem with this is that the code to generate HTML with CGI can get very long and unreadable. The maintainers of CGI agree, which is why this is at the top of the documentation for CGI.pm:

All HTML generation functions within CGI.pm are no longer being maintained. […] The rationale for this is that the HTML generation functions of CGI.pm are an obfuscation at best and a maintenance nightmare at worst. You should be using a template engine for better separation of concerns. See CGI::Alternatives for an example of using CGI.pm with the Template::Toolkit module.

Using Template Toolkit, that form might look like:

#!/usr/bin/perl
use strict;
use warnings;
use CGI;
use Template;

my $cgi      = CGI->new;
my $template = Template->new();
my $input    = join '', <DATA>;    # lines from DATA already end in newlines
my $data     = { action => '/url/of/program' };

print $cgi->header;
$template->process( \$input, $data )
    || die "Template process failed: ", $template->error();

__DATA__
<form method="POST" action="[% action %]">
<input type="text" name="foo" value="bar">
<input type="submit">
</form>

I use Template Toolkit for all my server-side web work. It’s also the default in many of Perl’s web frameworks.

To use CGI, your web server should have mod_cgi installed. Once it is installed, you will have to configure your server to execute CGI programs.

The first way is to have cgi-bin directories where every file gets executed instead of transferred.

<Directory "/home/*/www/cgi-bin">
    Options ExecCGI
    SetHandler cgi-script
</Directory>

The other is to allow CGI to be enabled per directory, with a configuration that looks like this:

<Directory "/home/*/www">
    Options +ExecCGI
    AddHandler cgi-script .cgi
</Directory>

And then add a .htaccess file in each directory that looks like this:

AddHandler cgi-script .cgi
Options +ExecCGI

So that foo.pl will transfer but foo.cgi will run, even if both are executable.

In May 2013, Ricardo Signes, then Perl5 Pumpking, sent this to the Perl5 Porters list:

I think it’s time to seriously consider removing CGI.pm from the core distribution. It is no longer what I’d point anyone at for writing any sort of web code. It is in the core, as far as I know, because once it was the state of the art, and a major reason for many people to use the language. I don’t think either is true now.

It was marked deprecated with 5.20 and removed from Core with 5.22. This is not catastrophic: it is still available on CPAN, but you will have to install it, or have your administrator install it, depending on your circumstances.

So, why did CGI drop from “state of the art” to discouraged by its own maintainers?

There are two big issues with CGI: speed and complexity. Every HTTP request triggers the forking of a new process on the web server, which is costly for server resources. A more efficient and faster way is to use a multi-process daemon which does its forking on startup and maintains a pool of processes to handle requests.

CGI isn’t good at managing the complexity of larger web applications: it has no MVC architecture to help developers separate concerns. This tends to lead to hard-to-maintain programs.

The rise of web frameworks such as Ruby on Rails, and the application servers they run on, has done much to solve both problems. There are many web frameworks written in Perl; among the most popular are Catalyst, Dancer, and Mojolicious.
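For a sense of what the persistent-process model looks like in Perl, here is a minimal sketch written against PSGI, the interface that Perl application servers use to talk to applications. PSGI is not mentioned in the text above, so treat it as an assumed illustration. The application is an ordinary code reference that a server loads once and then calls for each request, instead of starting a new process every time.

```perl
use strict;
use warnings;

# A PSGI application is just a code reference. In a real .psgi file this
# code reference would be the file's last value, and the server would
# call it once per request.
my $app = sub {
    my ($env) = @_;    # request data arrives in a hash ref, not in %ENV
    my $query = $env->{QUERY_STRING} // '';
    return [
        200,                                    # status
        [ 'Content-Type' => 'text/plain' ],     # headers
        ["Query string was: $query\n"],         # body
    ];
};

# Simulate one request by hand; a real server would build $env itself.
my $res = $app->( { REQUEST_METHOD => 'GET', QUERY_STRING => 'foo=bar' } );
print "$res->[0] @{ $res->[2] }";
```

The familiar CGI names such as QUERY_STRING survive, but they travel in a per-request hash, which is what lets one long-lived process serve many requests.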

CGI.pm also contains a security vulnerability that must be coded around to avoid parameter injection.
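The injection risk comes from calling param in list context, where it returns every value submitted under a name rather than just one. The sketch below reproduces the mechanism with a stand-in function, fake_param, so that it runs without CGI.pm installed. The function and the submitted data are hypothetical and only mimic the context-sensitive behaviour.

```perl
use strict;
use warnings;

# Pretend the request was ?name=bob&name=role&name=admin
my %submitted = ( name => [ 'bob', 'role', 'admin' ] );

# Hypothetical stand-in for CGI.pm's param(): every value in list
# context, only the first value in scalar context.
sub fake_param {
    my ($key) = @_;
    my @values = @{ $submitted{$key} || [] };
    return wantarray ? @values : $values[0];
}

# Unsafe: list context lets the extra values spill into the hash,
# so the attacker's 'role' => 'admin' pair overrides the default.
my %unsafe = ( role => 'guest', name => fake_param('name') );

# Safe: scalar() forces a single value.
my %safe = ( role => 'guest', name => scalar fake_param('name') );

print "unsafe role: $unsafe{role}\n";    # admin
print "safe role:   $safe{role}\n";      # guest
```

This is why the earlier param example wraps each call in scalar. Recent versions of CGI.pm also provide multi_param for the cases where a list of values really is wanted.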