Implement parallel scatter reductions for CPU#36447

Implement parallel scatter reductions for CPU#36447v0dro wants to merge 63 commits intopytorch:masterfrom

Conversation

💊 CI failures summary and remediationsAs of commit 4b15a86 (more details on the Dr. CI page):

Extra GitHub checks: 1 failed

ci.pytorch.org: 1 failedThis comment was automatically generated by Dr. CI (expand for details).Follow this link to opt-out of these comments for your Pull Requests.Please report bugs/suggestions on the GitHub issue tracker or post in the (internal) Dr. CI Users group. This comment has been revised 186 times. |

|

@nikitaved can you review this? Also I'm getting a failure for XLA is that OK? |

|

Dispatch needlessly compiles for all the dtypes, not just for floating point types. Fixing it however would require large copypaste, so in the interest of code clarity (but not binary size :-) ) I'm inclined to let it go as is, after you try @nikitaved's suggestion. Thoughts? If you come up with a clever way to avoid extra dispatch without copy paste, that would be awesome to, but if not, that's not a blocker. |

|

I have introduced #include <unordered_map>

#include <functional>

#include <iostream>

#include <chrono>

class F {

public:

constexpr void operator() (int & a, int & b) const {

a += b;

}

};

int main(int argc, char* argv[]) {

F fun;

int a = atoi(argv[1]);

int b = atoi(argv[2]);

std::chrono::time_point<std::chrono::system_clock> start, stop;

auto time = 0.0;

using binary_t = std::function<void(int&, int&)>;

std::unordered_map<int, binary_t> funcs;

funcs[0] = fun;

start = std::chrono::system_clock::now();

for (long long i = 0; i < 10000000; ++i) {

funcs[0](a, b);

}

stop = std::chrono::system_clock::now();

std::cout << "time with unordered_map: " << std::chrono::duration_cast<std::chrono::nanoseconds>(stop - start).count() << std::endl;

std::cout << "a: " << a << std::endl;

a = 1;

start = std::chrono::system_clock::now();

for (long long i = 0; i < 10000000; ++i) {

fun(a, b);

}

stop = std::chrono::system_clock::now();

std::cout << "time without map: " << std::chrono::duration_cast<std::chrono::nanoseconds>(stop - start).count() << std::endl;

std::cout << "a: " << a << std::endl;

}Results: |

|

New benchmark: |

|

Is this ready? If so, can you please rebase so that we can get signal from CI, right now too many tests are failing. |

|

CI errors are real. |

|

@ngimel errors are fixed. Could you please have a look? The windows failures seem unrelated. |

|

YEah, windows failures are unrelated, but can you please rebase so that we can get a signal from windows builds? |

|

@ngimel updated and ready to merge. |

facebook-github-bot

left a comment

facebook-github-bot

left a comment

There was a problem hiding this comment.

@ngimel has imported this pull request. If you are a Facebook employee, you can view this diff on Phabricator.

1 similar comment

…ction methods. (pytorch#40962) Summary: Follow up to pytorch#36447 . Update for pytorch#33389. Also removes unused `unordered_map` include from the CPP file. Pull Request resolved: pytorch#40962 Differential Revision: D22376253 Pulled By: ngimel fbshipit-source-id: 4e7432190e9a847321aec6d6f6634056fa69bdb8

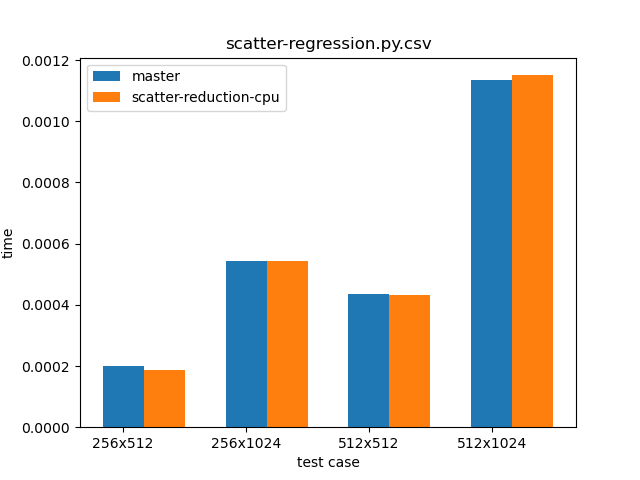

Summary: This PR implements pytorchgh-33389. As a result of this PR, users can now specify various reduction modes for scatter operations. Currently, `add`, `subtract`, `multiply` and `divide` have been implemented, and adding new ones is not hard. While we now allow dynamic runtime selection of reduction modes, the performance is the same as as was the case for the `scatter_add_` method in the master branch. Proof can be seen in the graph below, which compares `scatter_add_` in the master branch (blue) and `scatter_(reduce="add")` from this PR (orange).  The script used for benchmarking is as follows: ``` python import os import sys import torch import time import numpy from IPython import get_ipython Ms=256 Ns=512 dim = 0 top_power = 2 ipython = get_ipython() plot_name = os.path.basename(__file__) branch = sys.argv[1] fname = open(plot_name + ".csv", "a+") for pM in range(top_power): M = Ms * (2 ** pM) for pN in range(top_power): N = Ns * (2 ** pN) input_one = torch.rand(M, N) index = torch.tensor(numpy.random.randint(0, M, (M, N))) res = torch.randn(M, N) test_case = f"{M}x{N}" print(test_case) tobj = ipython.magic("timeit -o res.scatter_(dim, index, input_one, reduce=\"add\")") fname.write(f"{test_case},{branch},{tobj.average},{tobj.stdev}\n") fname.close() ``` Additionally, one can see that various reduction modes take almost the same time to execute: ``` op: add 70.6 µs ± 27.3 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) 26.1 µs ± 26.5 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) op: subtract 71 µs ± 20.5 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) 26.4 µs ± 34.4 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) op: multiply 70.9 µs ± 31.5 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) 27.4 µs ± 29.3 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) op: divide 164 µs ± 48.8 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) 52.3 µs ± 132 ns per loop (mean ± std. dev. of 7 runs, 10000 loops each) ``` Script: ``` python import torch import time import numpy from IPython import get_ipython ipython = get_ipython() nrows = 3000 ncols = 10000 dims = [nrows, ncols] res = torch.randint(5, 10, dims) idx1 = torch.randint(dims[0], (1, dims[1])).long() src1 = torch.randint(5, 10, (1, dims[1])) idx2 = torch.randint(dims[1], (dims[0], 1)).long() src2 = torch.randint(5, 10, (dims[0], 1)) for op in ["add", "subtract", "multiply", "divide"]: print(f"op: {op}") ipython.magic("timeit res.scatter_(0, idx1, src1, reduce=op)") ipython.magic("timeit res.scatter_(1, idx2, src2, reduce=op)") ``` Pull Request resolved: pytorch#36447 Differential Revision: D22272631 Pulled By: ngimel fbshipit-source-id: 3cdb46510f9bb0e135a5c03d6d4aa5de9402ee90

…ction methods. (pytorch#40962) Summary: Follow up to pytorch#36447 . Update for pytorch#33389. Also removes unused `unordered_map` include from the CPP file. Pull Request resolved: pytorch#40962 Differential Revision: D22376253 Pulled By: ngimel fbshipit-source-id: 4e7432190e9a847321aec6d6f6634056fa69bdb8

This PR implements gh-33389.

As a result of this PR, users can now specify various reduction modes for scatter operations. Currently,

add,subtract,multiplyanddividehave been implemented, and adding new ones is not hard.While we now allow dynamic runtime selection of reduction modes, the performance is the same as as was the case for the

scatter_add_method in the master branch. Proof can be seen in the graph below, which comparesscatter_add_in the master branch (blue) andscatter_(reduce="add")from this PR (orange).The script used for benchmarking is as follows:

Additionally, one can see that various reduction modes take almost the same time to execute:

Script: