kv: finish and enable parallel commits#37719

Conversation

tbg

left a comment

tbg

left a comment

There was a problem hiding this comment.

Can you run the tests a few times to weed out any obvious flakes?

Reviewed 3 of 3 files at r1, 9 of 9 files at r2, 1 of 1 files at r3.

Reviewable status:complete! 1 of 0 LGTMs obtained (waiting on @nvanbenschoten)

pkg/kv/dist_sender.go, line 809 at r2 (raw file):

// issued concurrently with the rest of the parallel commit batch. func (ds *DistSender) divideAndSendParallelCommit( ctx context.Context, ba roachpb.BatchRequest, rs roachpb.RSpan, batchIdx int,

Explain batchIdx.

pkg/kv/dist_sender.go, line 838 at r2 (raw file):

Isn't it more specifically

this method if they end up in the same range as the EndTransaction (or if the batch rewrites previously written keys)

pkg/kv/dist_sender.go, line 861 at r2 (raw file):

positions := make([]int, len(qiBa.Requests)) for i := swapIdx; i < lastIdx; i++ { positions[i-swapIdx] = i

Mind adding a comment (with a small example) here?

pkg/kv/dist_sender.go, line 863 at r2 (raw file):

// Send the batch with withCommit=true since it will be inflight concurrently with the EndTransaction batch below.

pkg/kv/dist_sender.go, line 901 at r2 (raw file):

qiPErr.UpdateTxn(ba.Txn) maybeSwapErrorIndex(qiPErr, swapIdx, lastIdx) pErr := roachpb.NewError(&roachpb.MixedSuccessError{Wrapped: qiPErr})

I thought we wanted to get rid of MixedSuccessError. Still the plan and how will the error be handled today (after this PR)?

pkg/kv/dist_sender_server_test.go, line 2481 at r2 (raw file):

a mixed

pkg/kv/dist_sender_test.go, line 2100 at r2 (raw file):

et: roachpb.Key("b"), parCommit: true, exp: [][]roachpb.Method{{roachpb.Put, roachpb.Put}, {roachpb.EndTransaction}},

Can you tell from this that it was sent in parallel? I can't. Shouldn't I be able to tell?

pkg/kv/dist_sender_test.go, line 2140 at r2 (raw file):

et: roachpb.Key("a1"), parCommit: true, exp: [][]roachpb.Method{{roachpb.EndTransaction}, {roachpb.Put, roachpb.Put}},

ditto. Maybe this is ok?

pkg/kv/txn_interceptor_committer.go, line 159 at r1 (raw file):

// flag based on whether any of previously declared in-flight writes // in this batch overlap with each other. This will have (rare) false // negatives when the in-flight writes overlap with existing intent

I know this is just a revert, but if someone went and disabled parallel commits via the cluster setting, don't they need this removed code?

This reverts commit 9d874cc. Release note: None

…tion This is the last commit needed for parallel commits to "work"! If a batch is performing a parallel commit and the transaction has previously pipelined writes that have yet to be proven successful then the EndTransaction request will be preceded by a series of QueryIntent requests (see txn_pipeliner.go). Before evaluating, each of these QueryIntent requests will grab latches and wait for their corresponding write to finish. This is how the QueryIntent requests synchronize with the write they are trying to verify. If these QueryIntents remained in the same batch as the EndTransaction request then they would force the EndTransaction request to wait for the previous write before evaluating itself. This "pipeline stall" would effectively negate the benefit of the parallel commit. To avoid this, we make sure that these "pre-commit" QueryIntent requests are split from and issued concurrently with the rest of the parallel commit batch. Release note: None

Release note: None

9f6c73d to

5e140b2

Compare

nvb

left a comment

nvb

left a comment

There was a problem hiding this comment.

TFTR!

Can you run the tests a few times to weed out any obvious flakes?

I stressed both of them. They look stable.

Reviewable status:

complete! 0 of 0 LGTMs obtained (and 1 stale) (waiting on @tbg)

pkg/kv/dist_sender.go, line 809 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

Explain

batchIdx.

Done.

pkg/kv/dist_sender.go, line 838 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

Isn't it more specifically

this method if they end up in the same range as the EndTransaction (or if the batch rewrites previously written keys)

Well these are only ever present if the batch is rewriting previously written keys, which is why they are rare. Them ending on the same range as the EndTransaction doesn't really matter - either way, some write is being slowed down.

I added a note that points to txnPipeliner.chainToInFlightWrites.

pkg/kv/dist_sender.go, line 861 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

Mind adding a comment (with a small example) here?

Done.

pkg/kv/dist_sender.go, line 863 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

// Send the batch with withCommit=true since it will be inflight concurrently with the EndTransaction batch below.

Done.

pkg/kv/dist_sender.go, line 901 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

I thought we wanted to get rid of

MixedSuccessError. Still the plan and how will the error be handled today (after this PR)?

Yes, that's still the plan and nothing we did with parallel commits should cause issues (e.g. staging a transaction record is idempotent). For now, though, we can't get rid of it. This MixedSuccessError will be handled exactly like any other after this PR.

pkg/kv/dist_sender_server_test.go, line 2481 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

a mixed

Done.

pkg/kv/dist_sender_test.go, line 2100 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

Can you tell from this that it was sent in parallel? I can't. Shouldn't I be able to tell?

No, the goal wasn't to be able to tell here. Added a comment.

pkg/kv/dist_sender_test.go, line 2140 at r2 (raw file):

Previously, tbg (Tobias Grieger) wrote…

ditto. Maybe this is ok?

In this case, we can actually tell. Added a comment.

pkg/kv/txn_interceptor_committer.go, line 159 at r1 (raw file):

Previously, tbg (Tobias Grieger) wrote…

I know this is just a revert, but if someone went and disabled parallel commits via the cluster setting, don't they need this removed code?

Good point, done.

tbg

left a comment

tbg

left a comment

There was a problem hiding this comment.

Reviewed 11 of 11 files at r4, 9 of 9 files at r5, 1 of 1 files at r6.

Reviewable status:complete! 1 of 0 LGTMs obtained

|

bors r+ |

|

Bors failed because of #36812. bors r+ |

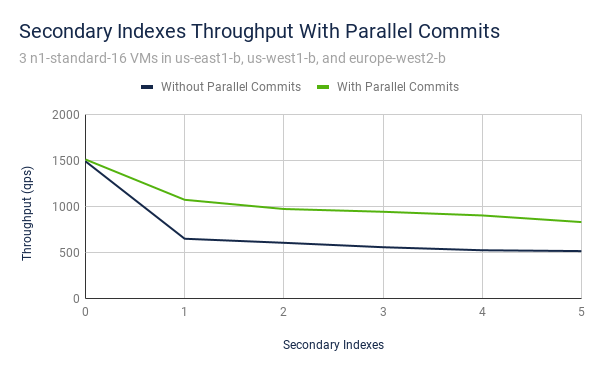

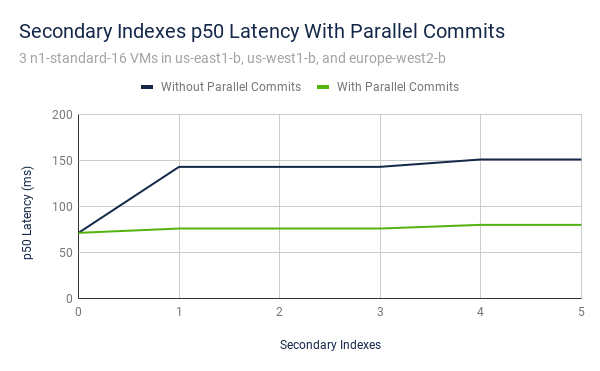

37719: kv: finish and enable parallel commits r=nvanbenschoten a=nvanbenschoten The majority of this PR is addressing the final work item for parallel commits - splitting pre-commit QueryIntents from parallel committing EndTransaction requests. If a batch is performing a parallel commit and the transaction has previously pipelined writes that have yet to be proven successful then the EndTransaction request will be preceded by a series of QueryIntent requests (see txn_pipeliner.go). Before evaluating, each of these QueryIntent requests will grab latches and wait for their corresponding write to finish. This is how the QueryIntent requests synchronize with the write they are trying to verify. If these QueryIntents remained in the same batch as the EndTransaction request then they would force the EndTransaction request to wait for the previous write before evaluating itself. This "pipeline stall" would effectively negate the benefit of the parallel commit. To avoid this, we make sure that these "pre-commit" QueryIntent requests are split from and issued concurrently with the rest of the parallel commit batch. With this complete, parallel commits is then able to be enabled. ### Benchmarks One of my recent experiments was to run the `indexes` benchmark at varying numbers of secondary indexes on a 3-node geo-distributed cluster. Below are plots with and without parallel commits enabled. We can see that without parallel commits, the introduction of the first secondary index doubles p50 latency and reduces throughput (at a fixed concurrency) by 56%. With parallel commits, the introduction of the secondary index has almost no noticeable effect on p50 latency and reduces throughput by only 29%.   I expect I'll polish off more comparison benchmarks over the next few weeks to further demonstrate the effect of parallel commits. 37726: roachtest: fix `fixtures import` on release branches r=tbg,nvanbenschoten a=danhhz In #36855, we bumped the workload-version of tpcc from 2.0.1 to 2.1.0. The IMPORT commands generated by `fixtures import` include the version built in so that all the nodes processing the IMPORT (which internally synthesize the data) can check against their internal version. This prevents nodes from generating incompatible data, but an unfortunate side effect of this is that the tpcc version in the workload binary must match the one in the cockroach nodes. This works for roachtests in master, but not for release branches, which use a workload binary built from master. Instead, use the `cockroach workload fixtures import` subcommand, which is guaranteed to match. Release note: None Co-authored-by: Nathan VanBenschoten <nvanbenschoten@gmail.com> Co-authored-by: Daniel Harrison <daniel.harrison@gmail.com>

Build succeeded |

The majority of this PR is addressing the final work item for parallel commits - splitting pre-commit QueryIntents from parallel committing EndTransaction requests.

If a batch is performing a parallel commit and the transaction has previously pipelined writes that have yet to be proven successful then the EndTransaction request will be preceded by a series of QueryIntent requests (see txn_pipeliner.go). Before evaluating, each of these QueryIntent requests will grab latches and wait for their corresponding write to finish. This is how the QueryIntent requests synchronize with the write they are trying to verify.

If these QueryIntents remained in the same batch as the EndTransaction request then they would force the EndTransaction request to wait for the previous write before evaluating itself. This "pipeline stall" would effectively negate the benefit of the parallel commit. To avoid this, we make sure that these "pre-commit" QueryIntent requests are split from and issued concurrently with the rest of the parallel commit batch.

With this complete, parallel commits is then able to be enabled.

Benchmarks

The original benchmarking for parallel commits was presented in #35165 (comment).

One of my recent experiments was to run the

indexesbenchmark at varying numbers of secondary indexes on a 3-node geo-distributed cluster. Below are plots with and without parallel commits enabled. We can see that without parallel commits, the introduction of the first secondary index doubles p50 latency and reduces throughput (at a fixed concurrency) by 56%. With parallel commits, the introduction of the secondary index has almost no noticeable effect on p50 latency and reduces throughput by only 29%.I expect I'll polish off more comparison benchmarks over the next few weeks to further demonstrate the effect of parallel commits.