server : support audio input#13714

Conversation

|

LGTM! |

|

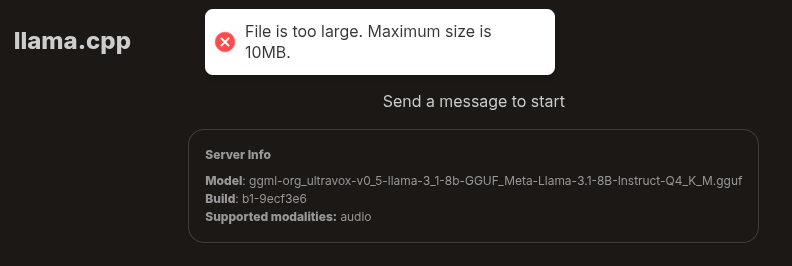

I compiled the server myself and it started without problem although when I tried to upload a 35 minute mp3 I got this error |

|

@AeneasZhu can you try --no-mmproj-offload to see it if works? It will run the audio encoder on cpu. Also add --verbose to give more verbose log @kth8 I'm doubt that model is trained with audio that long, but we can remove the 10MB restriction on frontend if needed |

|

awesome - is there a way to use this to transcribe a live stream of audio or does it have to be a complete pre-made audio file? |

|

These models are text-audio-to-text, not ASR, so I don't think they are trained or optimized for streamed real-time transcription |

oh, what is the practical limit of this model? The model card didn't mention any best practices. Does it work best if I limit the audio length to ~5 mins like in your example? |

I have exactly the same problem with the same GPU. I created a new issue. |

|

@kth8 there is no clear limit for the model. The 10MB limit is frontend-only, it's there becasue we don't want user to accidentally upload multi-gigabytes file which will crash the web page. But we can remove it anyway |

|

@ngxson have been following you for a while from your smol-vlm release. What does it take to support Qwen2-Audio Instruct ? it seems to have similar interface like above model. default huggingface-python is too slow for my usecase. I wanted to understand and contribute on llama.cpp by taking this on. Just an open source lover wanting to help !! |

Cont #13623

Pre-quantized models (recommended 8B model, it has much better quality than the 1B):

Try via web UI, summarize this fireship video

OAI-compat API: