Topic modelling is an unsupervised machine learning method for discovering hidden semantic structure in documents; it learns topic representations of the documents in a corpus. The same model can be applied to any kind of labels on documents, such as tags on posts on a website.

It can also be described as soft bi-clustering.

- Topic Modeling on Papers with Code

- Topic Modeling on StackOverflow

- Unsupervised

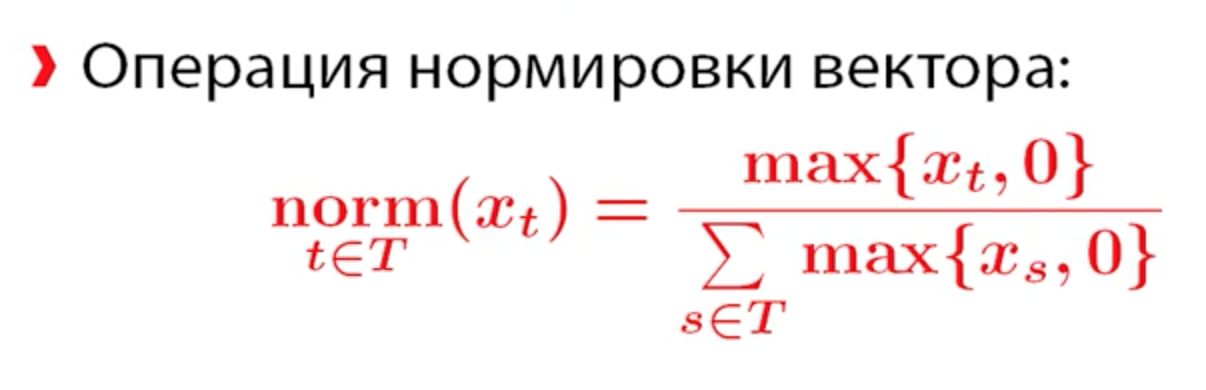

- Latent Dirichlet Allocation (LDA)

- Expectation–maximization algorithm (EM Algorithm)

- Probabilistic latent semantic analysis (PLSA)

- LDA2Vec

- Sentence-BERT (SBERT)

- Supervised or semi-supervised

- Guided Latent Dirichlet Allocation (Guided LDA)

- Anchored CorEx: Hierarchical Topic Modeling with Minimal Domain Knowledge (CorEx)

- BERTopic

Dataset for this task:

- A Million News Headlines - News headlines published over a period of 18 Years

- Latent Dirichlet Allocation (LDA) and Topic modeling: models, applications, a survey

- Bag of Tricks for Efficient Text Classification

- Incorporating Lexical Priors into Topic Models

- My First Foray into Data Science: Semi-Supervised Topic Modeling

- Some key points:

- Taking 100 records from each topic to create an evenly distributed training data set increased my accuracy by at least 10%.

- There are a few other hyperparameters to tune (alpha, beta, etc.), and I can always gather a larger quasi-stratified sample to throw at the model (keeping in mind that I can’t be sure exactly how evenly distributed it is).

- Some other ideas that have cropped up are leveraging a concept ontology (or word embedding) to enhance the depth of my seed words, synthetically duplicating the curated documents to increase the size of the test set (to make a training set for supervised learning), or applying transfer learning from a large, external corpus and hoping that the topics align with the internal business topics. And, of course, there’s the world of deep learning.

- Other things I plan to try with my data set (other than continuing to lobby for more, better data) are hierarchical agglomerative clustering, multiple individual binary classifiers, and a series of hierarchical classifiers (we have learned that certain topics are linked to certain countries, which we have been able to tag with >90% accuracy in these same documents). The most promising seems to be using business knowledge to narrow down the number of possible topics (from 26 to 10 or 11) and then attempting the classification.

- So each document was tagged (albeit potentially incorrectly) with multiple tags. It ranged from 2 to 10 tags per document. When I was attempting my stratified sampling (100 records per topic), I selected documents that had only 2 tags. My assumption here was that if a document only had two topics, it was going to be more specific for the topic. Using that logic, I selected 100 records per topic, where each document had two topics. I also ensured that I was doing sampling without replacement, so there was no possibility that the model was learning the same subset of frequent terms for different topics. For example, I can talk at a high level about science and politics and sports, but if I’m only talking about science, then I’m more likely to use topic-specific words more frequently. This would help bump the relative frequency of topic-specific terms, helping my model learn more clearly.
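The sampling scheme described in these key points can be sketched roughly as follows. This is a minimal illustration, not the author's code: the function name `sample_per_topic`, the `docs` mapping, and the toy tags are all hypothetical, and the rule "exactly two tags, never reuse a document" is taken from the description above.

```python
import random
from collections import defaultdict

def sample_per_topic(docs, per_topic=100, n_tags=2, seed=0):
    """Pick up to `per_topic` documents per topic without reusing any document.

    `docs` maps a document id to its list of tags. Only documents with exactly
    `n_tags` tags are eligible, on the assumption that they are more
    topic-specific than documents carrying many tags.
    """
    rng = random.Random(seed)
    by_topic = defaultdict(list)
    for doc_id, tags in docs.items():
        if len(tags) == n_tags:
            for tag in tags:
                by_topic[tag].append(doc_id)

    used = set()   # documents already assigned to some topic
    sample = {}
    for topic in sorted(by_topic):
        candidates = [d for d in by_topic[topic] if d not in used]
        rng.shuffle(candidates)
        chosen = candidates[:per_topic]
        used.update(chosen)
        sample[topic] = chosen
    return sample

# Toy data (hypothetical): d4 has three tags and is therefore skipped.
docs = {
    "d1": ["science", "politics"],
    "d2": ["science", "sports"],
    "d3": ["sports", "politics"],
    "d4": ["science", "politics", "sports"],
    "d5": ["politics", "sports"],
}
sample = sample_per_topic(docs, per_topic=1)
```

Because topics are processed in a fixed order, earlier topics get first pick of shared documents; a real pipeline might want to balance that differently.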


- Topic Modeling with Gensim (Python)

- Topic Modeling with LSA, PLSA, LDA & Lda2Vec


- Topic Modeling in Python

- Topic modeling visualization – How to present the results of LDA models?

- 2 latent methods for dimension reduction and topic modeling

- Latent Semantic Analysis (LSA)

- Latent Dirichlet Allocation (LDA)

- Topic Modelling using Word Embeddings and Latent Dirichlet Allocation

- Clustering using ‘wordtovec’ embeddings

- Clustering using LDA ( Latent Dirichlet Analysis)

- Latent Dirichlet Allocation for Beginners: A high level intuition

- Topic modeling made just simple enough.

- How Stuff Works: A Comprehensive Topic Modelling Guide with NMF, LSA, PLSA, LDA & lda2vec (Part-2)

- Topic Modeling with Latent Dirichlet Allocation

- document classification and categorization

- thematic segmentation of documents

- automatic annotation of documents

- automatic summarization of collections

- removing formatting and hyphenation

- removing fragmentary and non-text information

- correcting typos

- merging short texts

- Building the vocabulary:

- Lemmatization

- Stemming

- Term extraction

- Removing stopwords and words that are too rare (fewer than 10 occurrences)

- Using bigrams
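The vocabulary-building steps above can be sketched with the standard library alone. This is only an illustration under stated assumptions: the stopword list is a toy one, the helper names are hypothetical, and lemmatization or stemming is left out because it needs a language-specific tool.

```python
import re
from collections import Counter

# Toy stopword list (an assumption; use a proper list for real data).
STOPWORDS = {"the", "a", "of", "and", "is", "in", "to"}

def tokenize(text):
    """Lowercase, keep alphabetic tokens, drop stopwords."""
    return [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOPWORDS]

def add_bigrams(tokens):
    """Append bigrams of neighbouring tokens (neighbours after stopword removal)."""
    return tokens + [f"{a}_{b}" for a, b in zip(tokens, tokens[1:])]

def build_vocabulary(texts, min_count=10):
    """Return the sorted vocabulary of terms seen at least `min_count` times."""
    docs = [add_bigrams(tokenize(t)) for t in texts]
    counts = Counter(term for doc in docs for term in doc)
    vocab = sorted(term for term, n in counts.items() if n >= min_count)
    return vocab, docs

texts = ["The topic model is a model of topics", "A topic model in the wild"]
# min_count=2 only because the toy corpus is tiny; the notes suggest 10.
vocab, docs = build_vocabulary(texts, min_count=2)
```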

- Word order within a document does not matter (bag of words)

- Each pair $(d, w)$ is associated with some topic $t \in T$

- Conditional independence hypothesis (words in documents are generated by the topic, not by the document): $p(w \mid t, d) = p(w \mid t)$

- Sometimes a sparsity assumption is added:

- A document relates to a small number of topics

- A topic consists of a small number of terms

- A document $d$ is a mixture of the distributions $p(w \mid t)$ with weights $p(t \mid d)$

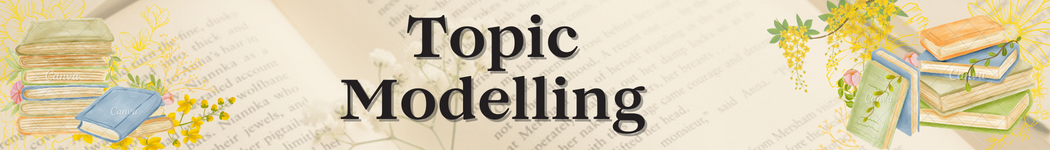

Given:

- $W$ - the vocabulary of terms (words or phrases)

- $D$ - the collection of text documents $d \subset W$

- $n_{dw}$ - the number of times term (word) $w$ occurs in document $d$

- $n_d$ - the length of document $d$

Find: the parameters of the probabilistic topic model (law of total probability):

$$p(w \mid d) = \sum_{t \in T} p(w \mid t)\, p(t \mid d) = \sum_{t \in T} \varphi_{wt}\, \theta_{td}$$

Conditional distributions:

- $\varphi_{wt} = p(w \mid t)$ - the probabilities of terms $w$ in each topic $t$

- $\theta_{td} = p(t \mid d)$ - the probabilities of topics $t$ in each document $d$

Stochastic matrix - a matrix whose columns are discrete probability distributions

- $\Phi$ contains non-negative, normalized values; each column sums to 1 and is a discrete probability distribution
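A small numerical sketch may make the matrix view concrete. It assumes the usual layout in which $\Phi$ is words × topics and $\Theta$ is topics × documents, so that the mixture $p(w \mid d) = \sum_t p(w \mid t)\, p(t \mid d)$ is simply the product of the two matrices; the sizes and random values are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
n_words, n_topics, n_docs = 6, 2, 3

# Phi[w, t] = p(w | t): each column is a distribution over words.
phi = rng.random((n_words, n_topics))
phi /= phi.sum(axis=0, keepdims=True)

# Theta[t, d] = p(t | d): each column is a distribution over topics.
theta = rng.random((n_topics, n_docs))
theta /= theta.sum(axis=0, keepdims=True)

# p(w | d) = sum_t p(w | t) p(t | d), i.e. the matrix product Phi @ Theta.
p_wd = phi @ theta

# The product of two column-stochastic matrices is column-stochastic again.
column_sums = p_wd.sum(axis=0)
```

Topic modelling runs this in reverse: given the observed counts $n_{dw}$, it looks for $\Phi$ and $\Theta$ whose product approximates the empirical word frequencies in each document.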

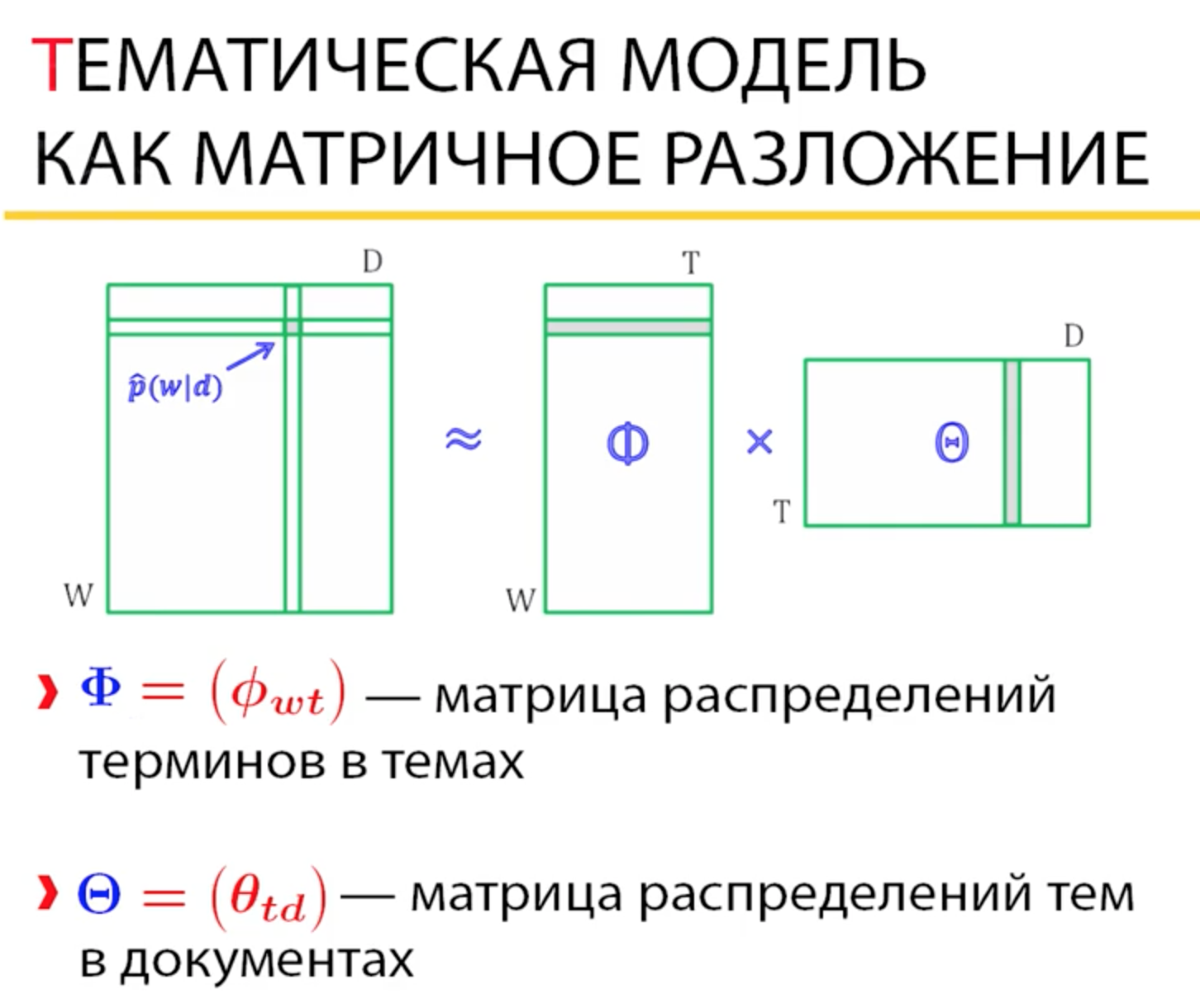

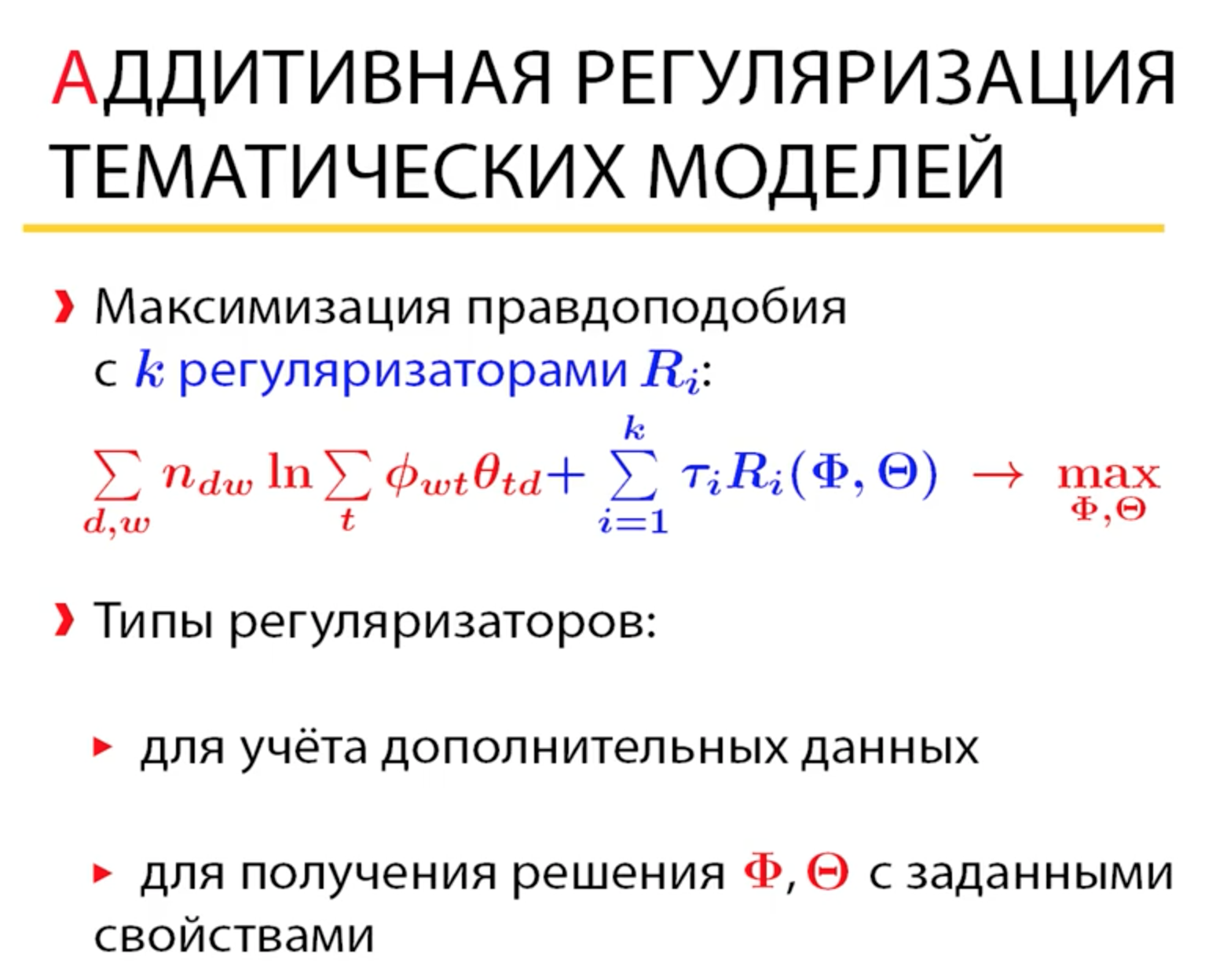

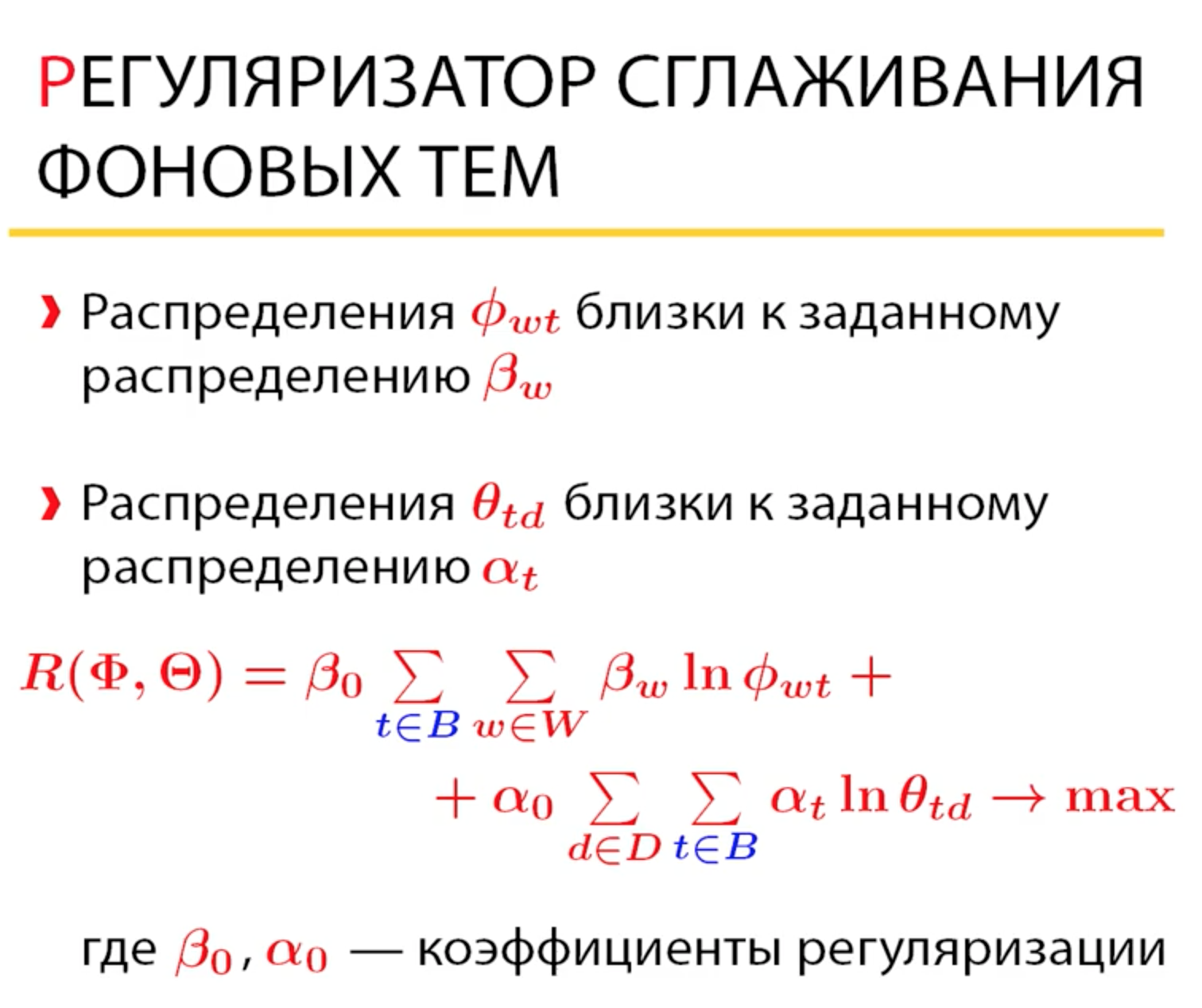

Solution: use regularizers

- Generative model

- Topic Modelling in Python with NLTK and Gensim

- Topic Modeling and Latent Dirichlet Allocation (LDA) in Python


- Using LDA Topic Models as a Classification Model Input

- We pick the number of topics ahead of time even if we’re not sure what the topics are.

- Each document is represented as a distribution over topics.

- Each topic is represented as a distribution over words.

- Topic concentration / Beta

- Document concentration / Alpha
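As a rough illustration of the points above, here is a minimal scikit-learn sketch. The toy documents and prior values are made up; `doc_topic_prior` and `topic_word_prior` are scikit-learn's names for what these notes call alpha and beta (scikit-learn's documentation calls the latter eta).

```python
from sklearn.decomposition import LatentDirichletAllocation
from sklearn.feature_extraction.text import CountVectorizer

# Toy corpus (hypothetical), far too small for meaningful topics.
docs = [
    "the team won the game",
    "the game ended with a late goal",
    "voters went to the election polls",
    "the senate vote followed the election",
    "the team scored a goal",
    "the election result surprised voters",
]

X = CountVectorizer(stop_words="english").fit_transform(docs)

lda = LatentDirichletAllocation(
    n_components=2,         # number of topics, chosen ahead of time
    doc_topic_prior=0.1,    # document concentration (alpha)
    topic_word_prior=0.01,  # topic concentration (beta)
    random_state=0,
)

# Each row is one document's distribution over topics.
doc_topic = lda.fit_transform(X)

# components_ holds unnormalized topic-word weights; normalize each row
# to get one distribution over words per topic.
topic_word = lda.components_ / lda.components_.sum(axis=1, keepdims=True)
```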

For a symmetric distribution, a high alpha-value means that each document is likely to contain a mixture of most of the topics rather than any single topic specifically. A low alpha-value places fewer such constraints on documents, making it more likely that a document contains a mixture of just a few, or even only one, of the topics. Likewise, a high beta-value means that each topic is likely to contain a mixture of most of the words rather than any word specifically, while a low value means that a topic may contain a mixture of just a few of the words.

If, on the other hand, the distribution is asymmetric, a high alpha-value means that a specific topic distribution (depending on the base measure) is more likely for each document. Similarly, a high beta-value means that each topic is more likely to contain a specific word mix defined by the base measure.

In practice, a high alpha-value leads to documents being more similar in terms of the topics they contain. A high beta-value similarly leads to topics being more similar in terms of the words they contain.
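The effect of the concentration parameter can be checked by sampling from a symmetric Dirichlet distribution directly. The sketch below is illustrative only: the number of topics, the two alpha values, and the helper name are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)
n_topics = 10

def mean_largest_share(alpha, n_samples=2000):
    """Average weight of the dominant topic in samples from Dirichlet(alpha, ..., alpha)."""
    samples = rng.dirichlet(np.full(n_topics, alpha), size=n_samples)
    return samples.max(axis=1).mean()

# Low alpha: most of the mass tends to sit on one or a few topics.
low = mean_largest_share(0.1)

# High alpha: the mass is spread almost evenly, so no topic dominates.
high = mean_largest_share(10.0)
```

The same reasoning applies to beta, with words in a topic taking the place of topics in a document.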

GuidedLDA (also called SeededLDA) implements latent Dirichlet allocation (LDA) using collapsed Gibbs sampling. It can be guided by setting some seed words per topic, which makes the topics converge in that direction.
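A sketch of how seed words might be prepared is shown below. The seed lists and vocabulary are hypothetical, and the commented-out calls follow the usage shown in the GuidedLDA README as far as I can tell; verify them against the installed version before relying on them.

```python
# Hypothetical seed words: one list per topic to be guided.
seed_topic_list = [
    ["game", "team", "win"],         # seeds for topic 0
    ["election", "vote", "senate"],  # seeds for topic 1
]

# Hypothetical vocabulary; in practice it comes from the vectorizer
# that produced the document-term matrix X.
vocab = ["election", "game", "market", "senate", "team", "vote", "win"]
word2id = {word: i for i, word in enumerate(vocab)}

# Map the vocabulary index of each seed word to the topic it should pull toward.
# Seed words missing from the vocabulary are skipped.
seed_topics = {
    word2id[word]: topic_id
    for topic_id, words in enumerate(seed_topic_list)
    for word in words
    if word in word2id
}

# With the package installed, the mapping would be passed to the model
# roughly like this (assumed usage, not executed here):
# model = guidedlda.GuidedLDA(n_topics=2, n_iter=100, random_state=7, refresh=20)
# model.fit(X, seed_topics=seed_topics, seed_confidence=0.15)
```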

- Incorporating Lexical Priors into Topic Models Paper

- GuidedLDA’s documentation

- GuidedLDA GitHub repository

- How we Changed Unsupervised LDA to Semi-Supervised GuidedLDA Article

- How did I tackle a real-world problem with GuidedLDA?

- Guided LDA_6topics-4-grams Code Example

- Labeled LDA + Guided LDA topic modelling

Solution:

Running on OS X 10.14 with Python 3.7, I was able to get past the installation errors by upgrading Cython:

    pip3 install -U cython

Then removing the following 2 lines from setup.cfg:

    [sdist]
    pre-hook.sdist_pre_hook = guidedlda._setup_hooks.sdist_pre_hook

And then running the original installation instructions:

    git clone https://github.com/vi3k6i5/GuidedLDA
    cd GuidedLDA
    sh build_dist.sh
    python setup.py sdist
    pip3 install -e .

- The GuidedLDA package doesn't install

Solution:

The package that this is built off of is LDA, and it installed with no issue. After installing it, I managed to copy the following from the GuidedLDA package into the original LDA package: guidedlda.py, utils.py, datasets.py and the few NYT data set items.

- Pull down the repository.

- Install the original LDA package: https://pypi.org/project/lda/

- Drop the *.py files from the GuidedLDA_WorkAround repo into the lda folder under site-packages for your specific environment.

- Profit...

Correlation Explanation (CorEx) is a topic model that yields rich topics that are maximally informative about a set of documents. The advantage of using CorEx versus other topic models is that it can be easily run as an unsupervised, semi-supervised, or hierarchical topic model depending on a user's needs. For semi-supervision, CorEx allows a user to integrate their domain knowledge via "anchor words". This integration is flexible and allows the user to guide the topic model in the direction of those words. This allows for creative strategies that promote topic representation, separability, and aspects. More generally, this implementation of CorEx is good for clustering any sparse binary data.
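To make the "sparse binary data" and "anchor words" points concrete, here is a minimal sketch of preparing the inputs. The documents and anchors are made up, and the commented-out calls reflect the `corextopic` usage as I understand it from its README; treat them as an assumption to check against the package documentation.

```python
# Toy corpus (hypothetical).
docs = [
    "vote election senate",
    "election vote campaign",
    "match goal team",
    "goal team match win",
]

vocab = sorted({word for doc in docs for word in doc.split()})

# CorEx expects binary data: 1 if the word occurs in the document, else 0.
X = [[int(word in set(doc.split())) for word in vocab] for doc in docs]

# One list of anchor words per topic to be guided (hypothetical choices).
anchors = [["vote", "election"], ["match", "goal"]]

# Anchor words must exist in the vocabulary, so check before fitting.
missing = [word for group in anchors for word in group if word not in vocab]

# Assumed usage with the package installed (not executed here):
# from corextopic import corextopic as ct
# import scipy.sparse as ss
# model = ct.Corex(n_hidden=2, seed=1)
# model.fit(ss.csr_matrix(X), words=vocab, anchors=anchors, anchor_strength=3)
```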

- Paper: Gallagher, R. J., Reing, K., Kale, D., and Ver Steeg, G. "Anchored Correlation Explanation: Topic Modeling with Minimal Domain Knowledge". Transactions of the Association for Computational Linguistics (TACL), 2017.

- GitHub repository

- Example Notebook

- PyPI page for CorEx

- Anchored Correlation Explanation: Topic Modeling With Minimal Domain Knowledge (TACL) : Ryan J. Gallagher, Kyle Reing, David Kal at ACL Performance

- Bio CorEx: recover latent factors with Correlation Explanation (CorEx)

- Correlation Explanation Methods

- Linear CorEx

- T-CorEx

- Latent Factor Models Based on Linear Total Correlation Explanation (CorEx)

- Update original model with the new data → gregversteeg/corex_topic#31

- Test the model on new data → gregversteeg/corex_topic#24

- How to change the model, in particular how to recalculate the document-topic probability estimates → gregversteeg/corex_topic#33

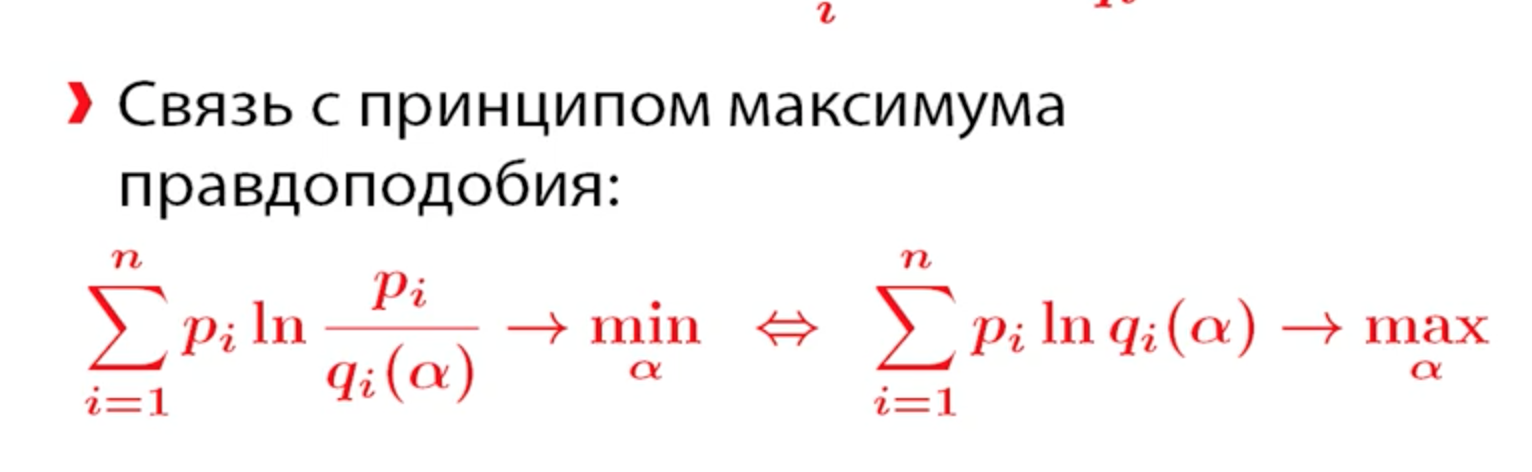

A way to measure the distance between two distributions
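The figure is not reproduced in the text, so which measure it shows is unknown. One common choice for comparing two discrete distributions is the Kullback-Leibler divergence, sketched below purely as an assumption about what is meant. Note that it is not symmetric, so it is not a distance in the strict sense.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same outcomes.

    Terms with p_i == 0 contribute nothing; q_i must be positive wherever
    p_i is positive, otherwise the divergence is infinite.
    """
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.5, 0.5]
q = [0.9, 0.1]

forward = kl_divergence(p, q)   # how poorly q describes data drawn from p
backward = kl_divergence(q, p)  # generally a different value
```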

- tomotopy

tomotopy is a Python extension of tomoto (Topic Modeling Tool), a Gibbs-sampling based topic model library written in C++. It uses the vectorization capabilities of modern CPUs to maximize speed. The current version of tomoto supports several major topic models.

- tomotopy API documentation (v0.11.1)

- BigARTM

BigARTM is a powerful tool for topic modeling based on a novel technique called Additive Regularization of Topic Models. This technique effectively builds multi-objective models by adding a weighted sum of regularizers to the optimization criterion. BigARTM is known to combine very different objectives well, including sparsing, smoothing, topic decorrelation and many others. Such a combination of regularizers significantly improves several quality measures at once, almost without any loss of perplexity.

- BigARTM Documentation

- GitHub

- An example of using the BigARTM library to build a topic model

- Hierarchical topic modeling with BigARTM library

- Stochastic block model

- A network approach to topic models

- Bayesian Core-Periphery Stochastic Block Models

Core-periphery structure is one of the most ubiquitous mesoscale patterns in networks. This code implements two Bayesian stochastic block models in Python for modeling hub-and-spoke and layered core-periphery structures. It can be used for probabilistic inference of core-periphery structure and model selection between the two core-periphery characterizations.

- GitHub

- TopSBM: Topic Models based on Stochastic Block Models

- hSBM_Topicmodel

A tutorial for topic-modeling with hierarchical stochastic blockmodels using graph-tool.

- A scikit-learn extension for Topic Models based on Stochastic Block Models

- Neural Topic Model

- LDA2vec: Word Embeddings in Topic Models

- Introducing our Hybrid lda2vec Algorithm

- lda2vec: Tools for interpreting natural language

- lda2vec-tf

TensorFlow implementation of Christopher Moody's lda2vec, a hybrid of Latent Dirichlet Allocation and word2vec.

- lda2vec

PyTorch implementation of Moody's lda2vec, a way of topic modeling using word embeddings. The original paper: Mixing Dirichlet Topic Models and Word Embeddings to Make lda2vec.

- lda2vec – flexible & interpretable NLP models

An overview of the lda2vec Python module can be found here.

- https://nbviewer.jupyter.org/github/cemoody/lda2vec/blob/master/examples/twenty_newsgroups/lda2vec/lda2vec.ipynb

- Bayesian topic modeling

- LDA in Python – How to grid search best topic models?

Python’s scikit-learn provides a convenient interface for topic modeling using algorithms like Latent Dirichlet Allocation (LDA), LSI and Non-Negative Matrix Factorization. In this tutorial, you will learn how to build the best possible LDA topic model and explore how to showcase the outputs as meaningful results.
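A grid search over the number of topics might look roughly like the sketch below. It is not taken from the tutorial: the toy corpus and parameter grid are made up, and it relies on `GridSearchCV` falling back to the estimator's own `score` method, which for scikit-learn's LDA is an approximate log-likelihood.

```python
from sklearn.decomposition import LatentDirichletAllocation
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.model_selection import GridSearchCV

# Toy corpus (hypothetical); real searches need far more documents.
docs = [
    "the team won the game",
    "the game ended with a late goal",
    "the team scored a goal",
    "fans cheered the winning team",
    "voters went to the election polls",
    "the senate vote followed the election",
    "the election result surprised voters",
    "the senate debated the new vote",
]

X = CountVectorizer(stop_words="english").fit_transform(docs)

search = GridSearchCV(
    LatentDirichletAllocation(random_state=0),
    param_grid={"n_components": [2, 3]},  # candidate numbers of topics
    cv=2,                                 # score on held-out documents
)
search.fit(X)

best_n_topics = search.best_params_["n_components"]
best_model = search.best_estimator_  # refit on all documents
```

Held-out likelihood does not always agree with how interpretable the topics look, so it is worth inspecting the top words of the selected model as well.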

- Topic Modeling with BERT

Topic modelling, on the other hand, focuses on categorising texts into particular topics. For this task, it is arguably arbitrary to use a language model, since topic modelling focuses more on the categorisation of texts than on the fluency of those texts. Thinking about it though, in addition to the suggestion given above, you could also develop separate language models, where for example one is trained on texts within topic A, another on topic B, etc. Then you could categorise texts by outputting a probability distribution over topics. So, in this case, you might be able to do transfer learning, whereby you take the pre-trained BERT model and add any additional layers, including a final output softmax layer, which produces the probability distribution over topics. To re-train the model, you essentially freeze the parameters within the BERT model itself and only train the additional layers you added.

- Topic Modeling with BERT

- BERTopic

BERTopic is a topic modeling technique that leverages 🤗 transformers and c-TF-IDF to create dense clusters allowing for easily interpretable topics whilst keeping important words in the topic descriptions.
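The c-TF-IDF step can be illustrated in plain Python. The sketch assumes one formulation associated with BERTopic, in which a word's weight in a cluster is its frequency in that cluster times log(1 + A / f), where A is the average number of words per cluster and f is the word's frequency across all clusters. It is not BERTopic's actual implementation, and the clusters are toy data.

```python
import math
from collections import Counter

def c_tf_idf(clusters):
    """Score words per cluster; `clusters` maps a label to all tokens in that cluster."""
    tf = {label: Counter(tokens) for label, tokens in clusters.items()}

    # Frequency of each word across all clusters.
    total = Counter()
    for counts in tf.values():
        total.update(counts)

    # Average number of words per cluster.
    avg_words = sum(len(tokens) for tokens in clusters.values()) / len(clusters)

    return {
        label: {w: n * math.log(1 + avg_words / total[w]) for w, n in counts.items()}
        for label, counts in tf.items()
    }

# Toy clusters (hypothetical); "game" appears in both and is down-weighted.
clusters = {
    "sports": "game team win team score".split(),
    "politics": "vote election game senate vote".split(),
}
scores = c_tf_idf(clusters)
top_word = {label: max(s, key=s.get) for label, s in scores.items()}
```

Treating each cluster as one long document is what distinguishes this from ordinary TF-IDF: words frequent in one cluster but rare elsewhere end up describing that cluster's topic.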

- Short Text Topic Modeling

- Gibbs Sampling Dirichlet Mixture Model (GSDMM)

- A dirichlet multinomial mixture model-based approach for short text clustering

- Short Text Topic Modeling Techniques, Applications, and Performance: A Survey

- Probabilistic Topic Models: Expectation-Maximization Algorithm

- kNN

- pyLDAvis

Python library for interactive topic model visualization.