- Udacity:

- Intro to Descriptive Statistics (Mathematics for Understanding Data) by Kaggle

- Intro to Inferential Statistics (Making Predictions from Data) by Facebook Blueprint

- Statistics Playlist 1 by Professor Leonard

- Statistics by The Organic Chemistry

| Title | Description, Information |
|---|---|
| Statistics/ Mathematical Computing Notebooks | General statistics, mathematical programming, and numerical/scientific computing scripts and notebooks in Python |

| Title | Description |
|---|---|
| TensorFlow Probability | TensorFlow Probability (TFP) is a Python library built on TensorFlow that makes it easy to combine probabilistic models and deep learning on modern hardware (TPU, GPU). It's for data scientists, statisticians, ML researchers, and practitioners who want to encode domain knowledge to understand data and make predictions. |
| Pyro | Deep Universal Probabilistic Programming |
| ArviZ: Exploratory analysis of Bayesian models | ArviZ is a Python package for exploratory analysis of Bayesian models. Includes functions for posterior analysis, data storage, sample diagnostics, model checking, and comparison. |
| PyStan | PyStan is a Python interface to Stan, a package for Bayesian inference. |

Keep in mind that there is no silver bullet, no single best error metric. The fundamental challenge is that every statistical measure condenses a large amount of data into a single value, so it provides only one projection of the model errors and emphasizes a certain aspect of the model's error characteristics (Chai and Draxler, 2014).

Therefore, it is better to take a pragmatic view and work with a selection of metrics that fit your use case or project.

| Title | Description, Information |
|---|---|
| Standard deviation | |
| Coefficient of determination, R squared -> R2 | |
| Standard Error | |
| Relative Standard Deviation (RSD) / Coefficient of Variation (CV) | |
| Relative Squared Error (RSE) | |
| Approximation error | |

| Title | Description, Information |
|---|---|
| Mean Absolute Error, MAE | |
| Mean Squared Error (MSE) or Mean Squared Deviation (MSD) | |
| Root-Mean-Square Deviation (RMSD) or Root-Mean-Square error (RMSE) | Variations: |
| Mean Squared Prediction Error (MSPE) or Mean Squared Error of the Predictions (MSEP) | |

| Title | Description, Information |

|---|---|

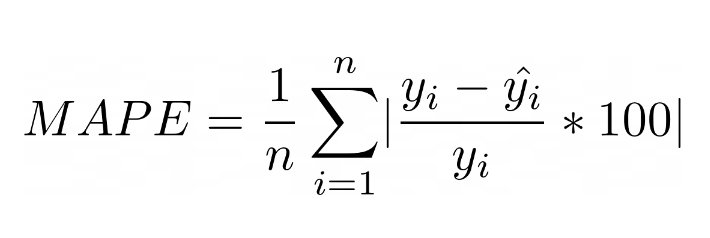

| Mean Absolute Percentage Error (MAPE) |

|

| Symmetric Mean Absolute Percentage Error (sMAPE) |

|

| Weighted Mean Absolute Percentage Error (wMAPE) |

| Title | Description, Information |
|---|---|
| Relative Absolute Error (RAE) | |
| Median Relative Absolute Error (MdRAE) | |
| Geometric Mean Relative Absolute Error (GMRAE) | |

| Title | Description, Information |
|---|---|
| Mean Absolute Scaled Error (MASE) | |

| Title | Description, Information |
|---|---|
| Forecast Error (or Residual Forecast Error) | The forecast error is calculated as the expected value minus the predicted value. This is called the residual error of the prediction. |
| Mean Forecast Error (or Forecast Bias) | Mean forecast error is calculated as the average of the forecast error values. |
| Tracking signal | Monitors any forecasts that have been made in comparison with actuals, and warns when there are unexpected departures of the outcomes from the forecasts. |
| Compounding Error | Compounding error is when deviation of one feature, or the process used to measure that feature, directly affects the measurement of another feature. In the training process, there’s never a chance to see compounding errors. The model is trained to predict the next token based on a human-generated context. If it gets one token wrong by generating a bad distribution, the next token uses the “correct” human generated context independent of the last prediction. During generation it is forced to complete its own automatically-generated context, a setting it has not considered during training. |
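
The first two rows of the table can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function names and the sample numbers are made up for the example, and the sign convention (expected minus predicted) follows the table.

```python
import numpy as np

def forecast_errors(expected, predicted):
    """Residual forecast errors: expected (actual) value minus predicted value."""
    return np.asarray(expected, dtype=float) - np.asarray(predicted, dtype=float)

def mean_forecast_error(expected, predicted):
    """Forecast bias: the average of the forecast errors."""
    return float(np.mean(forecast_errors(expected, predicted)))

# Made-up data: every forecast is exactly 1 unit too high
expected = [10.0, 12.0, 14.0, 16.0]
predicted = [11.0, 13.0, 15.0, 17.0]

errors = forecast_errors(expected, predicted)
bias = mean_forecast_error(expected, predicted)

print(errors)  # every residual is -1.0
print(bias)    # -1.0
```

With this sign convention, a negative bias means the model over-forecasts on average, and a bias near zero only says that positive and negative errors cancel out, not that the individual errors are small.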

Time Series Forecast Error Metrics

| Title | Description, Information |
|---|---|
| Mean Reciprocal Rank | The mean reciprocal rank is a statistical measure for evaluating any process that produces a list of possible responses to a sample of queries, ordered by probability of correctness. This metric can help distinguish between answers that were close to being right or far from being right (e.g., a score of 1 if the correct document is rank 1, a score of ½ if rank 2, a score of ⅓ if rank 3, etc.) |
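
As a rough sketch of the scoring described in the table, the function below averages the reciprocal ranks over a set of queries. The function name and the input format (one 1-based rank per query) are assumptions made for this example, as is the convention of scoring a query with no correct answer as 0.

```python
def mean_reciprocal_rank(ranks):
    """Average of 1/rank over all queries.

    ranks: for each query, the 1-based rank of the first correct answer,
    or None if no correct answer was returned (scored as 0 here, by assumption).
    """
    reciprocal = [0.0 if rank is None else 1.0 / rank for rank in ranks]
    return sum(reciprocal) / len(reciprocal)

# Four hypothetical queries: correct answer at rank 1, 2, 4, and not found
ranks = [1, 2, 4, None]
mrr = mean_reciprocal_rank(ranks)

print(mrr)  # (1 + 0.5 + 0.25 + 0) / 4 = 0.4375
```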

- Ways to Evaluate Regression Models

- Choosing the correct error metric: MAPE vs. sMAPE

- Time Series Forecast Error Metrics You Should Know

- Another look at measures of forecast accuracy by Rob J Hyndman

Many popular metrics are scale-dependent (Hyndman, 2006): they are expressed in the units of the underlying data (e.g., dollars or inches).

The main advantage of scale-dependent metrics is that they are usually easy to calculate and interpret. However, precisely because they depend on the scale, they cannot be used to compare different series (Hyndman, 2006).

Note that Hyndman (2006) places the Mean Squared Error in the scale-dependent group (claiming that the error is “on the same scale as the data”). However, the MSE is expressed in the squared unit of the data. To bring it back to the data’s unit we need to take the square root, which leads to another metric, the RMSE (Shcherbakov et al., 2013).
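
A small numeric check can make the point about units concrete. The sketch below uses made-up prices and expresses the same data once in dollars and once in cents; the helper functions are defined locally so the block is self-contained.

```python
import numpy as np

def mae(y, y_hat):
    return np.mean(np.abs(y - y_hat))

def mse(y, y_hat):
    return np.mean(np.square(y - y_hat))

# Made-up prices in dollars
y_dollars = np.array([100.0, 200.0, 300.0])
y_hat_dollars = np.array([110.0, 190.0, 330.0])

# The same data expressed in cents
y_cents = y_dollars * 100
y_hat_cents = y_hat_dollars * 100

mae_ratio = mae(y_cents, y_hat_cents) / mae(y_dollars, y_hat_dollars)
mse_ratio = mse(y_cents, y_hat_cents) / mse(y_dollars, y_hat_dollars)
rmse_ratio = np.sqrt(mse(y_cents, y_hat_cents)) / np.sqrt(mse(y_dollars, y_hat_dollars))

# MAE and RMSE scale with the unit (factor 100); MSE scales with its square (factor 10,000)
print(mae_ratio, mse_ratio, rmse_ratio)
```

The forecasts are equally good in both cases, yet every metric changes with the unit, which is why these metrics cannot be compared across series on different scales.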

The Mean Absolute Error (MAE) is the mean of the absolute differences between the actual values (y) and the predicted values (y_hat).

It is easy to understand (even for business users) and to compute. It is recommended for assessing accuracy on a single series (Hyndman, 2006).

However, it is not suitable for comparing series with different units. You should also avoid it if you want to penalize outliers more heavily, since it weights all errors linearly.

    import numpy as np

    def mae(y, y_hat):
        # Mean of the absolute differences between actuals and predictions
        return np.mean(np.abs(y - y_hat))

If you want to put more weight on outliers (huge errors), consider the Mean Squared Error (MSE). As its name implies, it takes the mean of the squared errors (the differences between y and y_hat).

Because of the squaring, it weights large errors much more heavily than small ones, which can be a disadvantage in some situations. The MSE is therefore suitable when you really want to focus on large errors.

Also keep in mind that, because of the squaring, the metric is no longer expressed in the unit of the data but in its square.

    import numpy as np

    def mse(y, y_hat):
        # Mean of the squared differences between actuals and predictions
        return np.mean(np.square(y - y_hat))

To bring the MSE back to the unit of the data, we can take its square root. The result is another error metric, the Root Mean Squared Error (RMSE).

It shares the advantages of its siblings MAE and MSE. However, like the MSE, it is sensitive to outliers.

Some authors, such as Willmott and Matsuura (2005), argue that the RMSE is an inappropriate and misinterpreted measure of average error and recommend the MAE over the RMSE.

However, Chai and Draxler (2014) partially refuted their arguments and recommend the RMSE over the MAE for model optimization as well as for evaluating different models when the error distribution is expected to be Gaussian.
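
In the same style as the other metrics in this section, the RMSE can be sketched as follows. The function name `rmse` and the sample arrays are chosen for this example; the data is made up so that a single large error dominates.

```python
import numpy as np

def rmse(y, y_hat):
    # Square root of the mean squared error, in the same unit as the data
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    return np.sqrt(np.mean(np.square(y - y_hat)))

# Made-up data: three perfect predictions and one error of 4
y = np.array([3.0, 5.0, 7.0, 9.0])
y_hat = np.array([3.0, 5.0, 7.0, 13.0])

mae_value = np.mean(np.abs(y - y_hat))  # 4 / 4 = 1.0
rmse_value = rmse(y, y_hat)             # sqrt(16 / 4) = 2.0

print(mae_value, rmse_value)
```

The single large error pulls the RMSE to twice the MAE here, which illustrates the outlier sensitivity mentioned above.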

As discussed above, scale-dependent metrics are not suitable for comparing different time series.

Percentage error metrics solve this problem. They are scale-independent and are used to compare forecast performance across different time series. Their weak spot, however, is zero values in a time series: the metric then becomes infinite or undefined and can no longer be interpreted (Hyndman, 2006).

The Mean Absolute Percentage Error (MAPE) is one of the most popular error metrics in time series forecasting. It is calculated as the mean of the absolute differences between actual and predicted values, each divided by the actual value.

Note that some MAPE formulas do not multiply the result by 100. Since the MAPE is usually reported as a percentage, the multiplication is included here.

The MAPE’s advantages are its scale independence and easy interpretability. As mentioned at the beginning, percentage error metrics can be used to compare the results of multiple time series models with different scales.

However, the MAPE also comes with some disadvantages. First, it produces infinite or undefined values for zero actual values and extremely large values for actuals close to zero (Kim and Kim, 2016).

Second, it puts a heavier penalty on negative errors (forecast above the actual) than on positive ones, which leads to an asymmetry (Hyndman, 2014).

Last but not least, the MAPE cannot be used when percentages make no sense. This is the case, for example, when measuring temperatures: the Fahrenheit and Celsius scales have relatively arbitrary zero points, so it makes no sense to talk about percentages (Hyndman and Koehler, 2006).
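
The first two disadvantages can be seen on toy numbers. The sketch below is only an illustration with made-up values: swapping actual and forecast is one common way to show the asymmetry, and the `np.errstate` context is used here merely to silence NumPy's division-by-zero warning.

```python
import numpy as np

def mape(y, y_hat):
    y = np.asarray(y, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)
    return np.mean(np.abs((y - y_hat) / y) * 100)

# Same absolute error of 50, but different percentage errors
forecast_too_high = mape([100.0], [150.0])  # 50 / 100 -> 50%
forecast_too_low = mape([150.0], [100.0])   # 50 / 150 -> about 33.3%

# A single zero among the actuals makes the whole metric infinite
with np.errstate(divide="ignore"):
    with_zero_actual = mape([0.0, 100.0], [10.0, 110.0])

print(forecast_too_high, forecast_too_low, with_zero_actual)
```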

    import numpy as np

    def mape(y, y_hat):
        # Mean of the absolute percentage errors; breaks down when y contains zeros
        return np.mean(np.abs((y - y_hat) / y) * 100)

To avoid the asymmetry of the MAPE, a new error metric was proposed: the Symmetric Mean Absolute Percentage Error (sMAPE). The sMAPE is probably one of the most controversial error metrics, not only because several definitions and formulas exist but also because critics claim that it is not as symmetric as its name suggests (Goodwin and Lawton, 1999).

The original idea of an “adjusted MAPE” was proposed by Armstrong (1985). By his definition, however, the metric can be negative or infinite because the denominator does not use absolute values (which is correctly mentioned as a disadvantage in some articles that follow his definition).

Makridakis (1993) proposed a similar metric and called it SMAPE. His formula avoids the problems of Armstrong’s formula by taking absolute values in the denominator (Hyndman, 2014).
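
Written out to match the `smape_original` function in the code snippet of this section, the definition reads as follows. The notation is an assumption made here: $y_t$ is the actual value (`a` in the code), $\hat{y}_t$ the forecast (`f` in the code), and $n$ the number of forecasts.

$$
\text{sMAPE} = \frac{100\%}{n} \sum_{t=1}^{n} \frac{2\,\lvert \hat{y}_t - y_t \rvert}{\lvert y_t \rvert + \lvert \hat{y}_t \rvert}
$$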

Note: Makridakis (1993) proposed this formula in his paper “Accuracy measures: theoretical and practical concerns”. In a later publication, “The M3-Competition: results, conclusions and implications” (Makridakis and Hibon, 2000), he used Armstrong’s formula (Hyndman, 2014). This has probably contributed to the confusion about the different definitions of the sMAPE.

The sMAPE is the average across all forecasts made for a given horizon. Its advantages are that it avoids the MAPE’s problem of large errors when y-values are close to zero, as well as the large difference between the absolute percentage errors when y is greater than y_hat and vice versa. Unlike the MAPE, which is unbounded, it fluctuates between 0% and 200% (Makridakis and Hibon, 2000).

For ease of interpretation, there is also a slightly modified version of the sMAPE whose results always lie between 0% and 100%.
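
Following the `smape_adjusted` function in the code snippet of this section, the modified version simply drops the factor 2 in the numerator. The notation is again an assumption: $y_t$ is the actual value, $\hat{y}_t$ the forecast, and $n$ the number of forecasts.

$$
\text{sMAPE}_{\text{adjusted}} = \frac{100\%}{n} \sum_{t=1}^{n} \frac{\lvert \hat{y}_t - y_t \rvert}{\lvert y_t \rvert + \lvert \hat{y}_t \rvert}
$$

Since $\lvert \hat{y}_t - y_t \rvert \le \lvert y_t \rvert + \lvert \hat{y}_t \rvert$ by the triangle inequality, each term is at most 1, which is why the result cannot exceed 100%.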

The following code snippet contains the sMAPE metric proposed by Makridakis (1993) and the modified version.

    import numpy as np

    # SMAPE proposed by Makridakis (1993): 0%-200%
    def smape_original(a, f):
        # a: actual values, f: forecasts
        return 1/len(a) * np.sum(2 * np.abs(f-a) / (np.abs(a) + np.abs(f))*100)

    # Adjusted SMAPE version that scales the metric to 0%-100%
    def smape_adjusted(a, f):
        return 1/len(a) * np.sum(np.abs(f-a) / (np.abs(a) + np.abs(f))*100)

As mentioned at the beginning, the sMAPE is controversial, and the criticism is justified. Goodwin and Lawton (1999) pointed out that the sMAPE penalizes under-estimates more than over-estimates (Chen et al., 2017). Cánovas (2009) demonstrates this with a simple example.

- Table 1: Example with a symmetric sMAPE:

- Table 2: Example with an asymmetric sMAPE:

Starting with Table 1, we have two cases. In case 1 the actual value y is 100 and the prediction y_hat is 150, which leads to an sMAPE of 20%. Case 2 is the opposite: the actual value y is 150 and the prediction y_hat is 100. This also leads to an sMAPE of 20%.

Now let us look at Table 2. Again there are two cases, but the sMAPE values are no longer the same: the second case leads to an sMAPE of 33%.

Modifying the forecast while holding the actual value and the absolute deviation fixed does not produce the same sMAPE value. Simply biasing the model without improving its accuracy should never produce different error values (Cánovas, 2009).
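
The quoted percentages can be checked numerically. They match the 0%-100% variant of the sMAPE, which is redefined below so the block is self-contained. The Table 1 values are taken from the text; the Table 2 values (actual 100, forecasts 150 and 50) are an assumption chosen to be consistent with the description of a fixed actual value and a fixed absolute deviation.

```python
import numpy as np

def smape_adjusted(a, f):
    # 0%-100% variant: |f - a| / (|a| + |f|), averaged and expressed in percent
    a = np.asarray(a, dtype=float)
    f = np.asarray(f, dtype=float)
    return np.mean(np.abs(f - a) / (np.abs(a) + np.abs(f)) * 100)

# Table 1: swapping actual and forecast gives the same value
case_1 = smape_adjusted([100.0], [150.0])  # 50 / 250 -> 20%
case_2 = smape_adjusted([150.0], [100.0])  # 50 / 250 -> 20%

# Assumed Table 2: same actual (100), same absolute deviation (50)
over_forecast = smape_adjusted([100.0], [150.0])  # 50 / 250 -> 20%
under_forecast = smape_adjusted([100.0], [50.0])  # 50 / 150 -> about 33.3%

print(case_1, case_2, over_forecast, under_forecast)
```

Under these assumptions the under-forecast is penalized more heavily than the over-forecast, even though both miss the actual value by the same amount.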

- As you have seen, there is no silver bullet, no single best error metric. Each category or metric has its advantages and weaknesses, so the choice always depends on your use case and your underlying data. Do not look at just one error metric when evaluating your model’s performance; measure several of the main metrics described above in order to analyze aspects such as deviation, symmetrical deviation, and largest outliers.

- If all series are on the same scale, data preprocessing (data cleaning, anomaly detection) has been performed, and the task is to evaluate forecast performance, then the MAE can be preferred because it is simpler to explain (Hyndman and Koehler, 2006; Shcherbakov et al., 2013).

- Chai and Draxler (2014) recommend preferring the RMSE over the MAE when the error distribution is expected to be Gaussian.

- If the data contain outliers, it is advisable to apply scaled measures such as the MASE. In this situation the horizon should be large enough, the series should not consist of identical values, and the normalization factor should not be zero (Shcherbakov et al., 2013).
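
For the last recommendation, a minimal sketch of the MASE is given below. It assumes the common non-seasonal definition, in which the forecast MAE is divided by the in-sample MAE of a one-step naive forecast; the function signature and the sample data are made up for this example.

```python
import numpy as np

def mase(y_train, y_test, y_hat):
    """Mean Absolute Scaled Error (non-seasonal variant, by assumption)."""
    y_train = np.asarray(y_train, dtype=float)
    y_test = np.asarray(y_test, dtype=float)
    y_hat = np.asarray(y_hat, dtype=float)

    # Normalization factor: in-sample MAE of the naive forecast y[t-1] -> y[t]
    scale = np.mean(np.abs(np.diff(y_train)))
    if scale == 0:
        # Happens when all training values are identical
        raise ValueError("normalization factor is zero; MASE is undefined")

    return np.mean(np.abs(y_test - y_hat)) / scale

y_train = [10.0, 12.0, 11.0, 13.0, 12.0]  # naive in-sample MAE = 6 / 4 = 1.5
y_test = [14.0, 13.0]
y_hat = [13.0, 13.5]                      # forecast MAE = 1.5 / 2 = 0.75

score = mase(y_train, y_test, y_hat)
print(score)  # 0.75 / 1.5 = 0.5
```

A value below 1 means the forecast beats the naive in-sample benchmark on average. The explicit check on the normalization factor mirrors the condition named in the last bullet.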

The margin of error is defined as the range of values below and above the sample statistic in a confidence interval. The confidence interval is a way to show the uncertainty associated with a statistic (e.g., from a poll or survey).

Put differently, the margin of error is a statistical value that determines, with a certain probability, the maximum amount by which the sample results differ from the results for the general population. It is half the width of the confidence interval.

Examples:

- For example, a survey indicates that 72% of respondents favor Brand A over Brand B with a 3% margin of error. In this case, the actual population percentage that prefers Brand A likely falls within the range of 72% ± 3%, or 69 – 75%.

- A margin of error tells you how many percentage points your results will differ from the real population value. For example, a 95% confidence interval with a 4 percent margin of error means that your statistic will be within 4 percentage points of the real population value 95% of the time.

A smaller margin of error suggests that the survey’s results will tend to be close to the correct values. Conversely, larger MOEs indicate that the survey’s estimates can be further away from the population values.

The margin of error is influenced by several factors, including the sample size, variability in the data, and the desired level of confidence. A larger sample size generally results in a smaller margin of error, indicating a more precise estimate. Similarly, a higher level of confidence requires a larger margin of error to account for the increased certainty.
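
The effect of sample size and confidence level can be sketched for a survey proportion. The example below assumes the usual normal approximation for a proportion, reuses the 72% figure from the Brand A example, and takes the sample sizes as purely hypothetical. It uses `statistics.NormalDist` from the standard library for the critical value, which plays the same role as `stats.norm.ppf` in SciPy.

```python
from math import sqrt
from statistics import NormalDist

def margin_of_error_proportion(p, n, confidence=0.95):
    """Margin of error for a sample proportion (normal approximation)."""
    critical_value = NormalDist().inv_cdf((1 + confidence) / 2)
    standard_error = sqrt(p * (1 - p) / n)
    return critical_value * standard_error

# Larger samples give a smaller margin of error
for n in (100, 1000, 10000):
    moe = margin_of_error_proportion(0.72, n)
    print(f"n={n}: +/- {moe * 100:.2f} percentage points")

# A higher confidence level gives a larger margin of error
moe_95 = margin_of_error_proportion(0.72, 1000, confidence=0.95)
moe_99 = margin_of_error_proportion(0.72, 1000, confidence=0.99)
print(moe_95 < moe_99)
```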

The margin of error provides a measure of the precision and reliability of a sample-based estimate. It helps researchers and analysts interpret and communicate the level of confidence and uncertainty associated with the estimated values.

The code calculates the error (residual) between the actual and predicted values and adds it as a new column 'Error' in the DataFrame. Then, it calculates the mean and standard deviation of the error.

Next, you specify the desired confidence level (e.g., 95% confidence level) and use the stats.norm.ppf function from the scipy.stats module to calculate the critical value based on the confidence level. Finally, the margin of error is computed by multiplying the critical value by the standard error, which is the standard deviation divided by the square root of the number of observations.

    import numpy as np
    import pandas as pd
    import scipy.stats as stats

    # Example DataFrame with actual and predicted values
    df = pd.DataFrame({'Actual': [10, 15, 20, 25, 30],
                       'Predicted': [12, 18, 22, 28, 32]})

    # Calculate the error (residual) between actual and predicted values
    df['Error'] = df['Actual'] - df['Predicted']

    # Calculate the mean and standard deviation of the error
    error_mean = df['Error'].mean()
    error_std = df['Error'].std()

    # Define the desired confidence level (e.g., 95%)
    confidence_level = 0.95

    # Calculate the critical value based on the confidence level
    z_score = stats.norm.ppf((1 + confidence_level) / 2)

    # Calculate the margin of error
    margin_of_error = z_score * (error_std / np.sqrt(len(df)))

    print('Margin of Error:', margin_of_error)

The formula to calculate the margin of error is: Margin of Error = Critical Value * Standard Error

Here is an example that calculates the margin of error from a sample size, standard deviation, and confidence level:

    import scipy.stats as stats
    import math

    # Example variables
    sample_size = 500
    standard_deviation = 0.05
    confidence_level = 0.95

    # Calculate the critical value (z-score for a two-tailed interval)
    critical_value = stats.norm.ppf((1 + confidence_level) / 2)

    # Calculate the standard error
    standard_error = standard_deviation / math.sqrt(sample_size)

    # Calculate the margin of error
    margin_of_error = critical_value * standard_error

    print('Margin of Error:', margin_of_error)

In this example, sample_size represents the size of the sample, standard_deviation represents the standard deviation of the population (or an estimate if it is unknown), and confidence_level represents the desired level of confidence (e.g., 0.95 for 95% confidence). The code uses the stats.norm.ppf function from the scipy.stats module to calculate the critical value based on the confidence level. It then multiplies the critical value by the standard error, which is the standard deviation divided by the square root of the sample size, to obtain the margin of error.

- Margin of error, Wikipedia

- Margin of Error: Formula and Interpreting

- Margin of Error: Definition, Calculate in Easy Steps

- Margin of Error: Definition + Easy Calculation with Examples

- Confidence interval

- Understanding Confidence Intervals

- Using the Empirical Rule (95-68-34 or (50-34-14)

- The Empirical Rule

| Title | Description, Information |
|---|---|
| Confusion Matrix in Python | Confusion Matrix in Python: plot a pretty confusion matrix (like Matlab) in python using seaborn and matplotlib |

| Title | Description |
|---|---|
| Least squares | The method of least squares is a standard approach in regression analysis to approximate the solution of overdetermined systems (sets of equations in which there are more equations than unknowns) by minimizing the sum of the squares of the residuals made in the results of every single equation. The most important application is in data fitting (curve fitting). The best fit in the least-squares sense minimizes the sum of squared residuals (a residual being the difference between an observed value and the fitted value provided by a model). When the problem has substantial uncertainties in the independent variable (the x variable), simple regression and least-squares methods have problems; in such cases, the methodology required for fitting errors-in-variables models may be considered instead of that for least squares. Least-squares problems fall into two categories, linear (ordinary) least squares and nonlinear least squares, depending on whether or not the residuals are linear in all unknowns. The linear least-squares problem occurs in statistical regression analysis; it has a closed-form solution. The nonlinear problem is usually solved by iterative refinement; at each iteration the system is approximated by a linear one, and thus the core calculation is similar in both cases. |
| Least Absolute Deviations (LAD) | Least absolute deviations (LAD), also known as least absolute errors (LAE), least absolute value (LAV), least absolute residual (LAR), sum of absolute deviations, or the L1 norm condition, is a statistical optimality criterion and the statistical optimization technique that relies on it. Similar to the least squares technique, it attempts to find a function which closely approximates a set of data. |
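
The linear least-squares case from the table can be sketched with NumPy. The data points are made up for this example (roughly following y = 2x + 1), and the straight-line model is an assumption chosen for simplicity; `np.linalg.lstsq` solves the overdetermined system, and the normal equations are used as a cross-check of the closed-form solution.

```python
import numpy as np

# Overdetermined system: 5 observations, 2 unknowns (slope and intercept)
x = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
y = np.array([1.1, 2.9, 5.2, 6.8, 9.1])  # made-up observations

# Design matrix for the model y = slope * x + intercept
A = np.column_stack([x, np.ones_like(x)])

# Least-squares solution: minimizes the sum of squared residuals
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
slope, intercept = coef

# Closed-form solution via the normal equations (A^T A) c = A^T y
coef_normal = np.linalg.solve(A.T @ A, A.T @ y)

residuals = y - A @ coef
print(slope, intercept)            # approximately 1.99 and 1.04
print(np.sum(residuals ** 2))      # the minimized sum of squared residuals
```

At the optimum the residuals are orthogonal to the columns of the design matrix, which is exactly what the normal equations express.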

- The Elements of Statistical Learning: Data Mining, Inference, and Prediction.

- C.P. Robert: The Bayesian choice (advanced)

- Gelman, Carlin, Stern, Rubin: Bayesian data analysis (nice easy older book)

- Congdon: Applied Bayesian modelling; Bayesian statistical modelling (relatively nice books for references)

- Casella, Robert: Introducing Monte Carlo methods with R (nice book about MCMC)

- Robert, Casella: Monte Carlo Statistical Methods

- some parts of Bishop: Pattern recognition and machine learning (very nice book for engineers)

- Puppy book from Kruschke

- Mathematical Statistics

- Think Stats 2e

Correlation does not imply causation

- Coursera:

- Powerful Concepts in Social Science playlists, Duke

- 4 lectures on causality by J. Peters (8 h), MIT Statistics and Data Science Center, 2017

- Causality tutorial by D. Janzing and S. Weichwald (4 h), Conference on Cognitive Computational Neuroscience 2019

- Course on causality by S. Bauer and B. Schölkopf (3 h), Machine Learning Summer School 2020

- Course on causality by D. Janzing and B. Schölkopf (3 h), Machine Learning Summer School 2013

- Causal Structure Learning, Christina Heinze-Deml, Marloes H. Maathuis, Nicolai Meinshausen, 2017

- Judea Pearl, Madelyn Glymour, Nicholas P. Jewell: Causal Inference in Statistics: A Primer

- Causality in cognitive neuroscience: concepts, challenges, and distributional robustness

- Investigating Causal Relations by Econometric Models and Cross-spectral Methods, 1969

- Fast Greedy Equivalence Search (FGES) Algorithm for Continuous Variables

- Greedy Fast Causal Inference (GFCI) Algorithm for Continuous Variables

- Causal Decision Trees, Jiuyong Li, Saisai Ma, Thuc Duy Le, Lin Liu and Jixue Liu, 2015

- Discovery of Causal Rules Using Partial Association, 2012

- Causal Inference in Data Science From Prediction to Causation, 2016

- Matching

- Incident user design

- Active comparator

- Instrumental variables estimation

- Difference-in-differences

- Regression discontinuity design

- Modeling