Although these conversations are frequently intertwined, building software away from a desk doesn’t have to come with a 4-digit daily bill. I don’t have that sort of cash, but I’ve still been able to get in on the fun.

The $20-$30/month workflow

Here’s a workflow that I’ve been using for unsupervised AI coding on a side project which will never justify serious focus or budget:

- I type one or two lines into a new issue on the project Github repo (usually from my phone)

- I add a label,

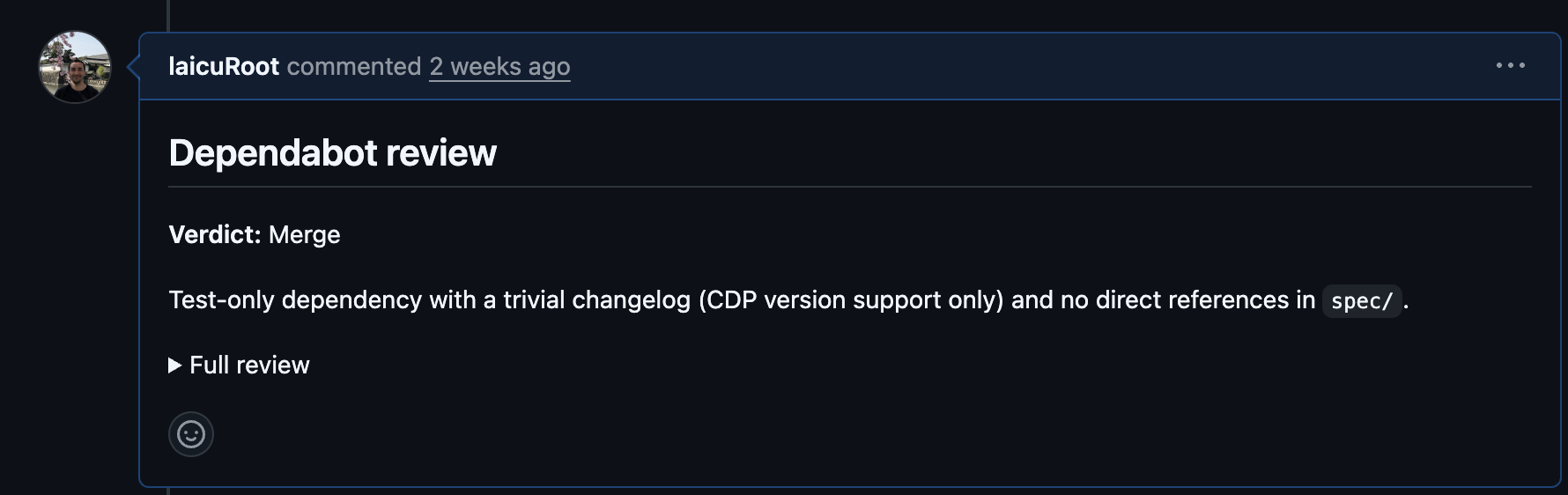

needs-elaborationto the issue - Github starts up an actions workflow that invokes Claude Code in the cloud to review the pre-existing code and write a plan for how to turn my few sentences into a reality

- I review the plan and if I’m happy I add a

ready-for-devlabel - Github starts Claude Code to implement the issue and raise a pull request

- I click on preview links that Vercel (this is a NextJS app) adds to the PR and inspect the results

- If I’m happy I merge the PR and Vercel deploys to production

- If I’m not happy I add a comment and an

agent-reviewlabel and GOTO 5

The total cost is $20 for my Claude Code Pro plan and $10 for a Github Pro Plan (educators and open source contributors can often get this for free). There are usage limits to both plans [1], but I mostly run out of ideas before I run out of limits. I’m not focused on making an agent work 24/7 while I’m doing other things; my only concern is that there is something new ready for me each time I have spare attention for this project.

In case it wasn’t clear, I’m not writing or even reading any of the code as part of this loop [2]. That would take focus that I can’t dedicate on a per-feature basis to this project.

Minimal time and money for decent results

This project wasn’t always an experiment in agentic coding. It was originally an exploration of JS web development with NextJS that I pursued during Friday investment time over a seven-month period a few years back. This allows me to compare before and after, with striking results.

In the past month, with 10 minutes here and there on my phone, I’ve far exceeded what I did in the original seven months of Fridays:

- features that have never existed in any previous equivalent software (from myself and others in this niche hobby space)

- huge improvements to UI including working mobile portrait mode and desktop modes

- 500+ tests and 77% coverage versus 0 tests and 0% coverage

- optimisations that allowed me to downgrade my Vercel account to a free plan

- GDPR-compliant analytics and error tracking

- extending my integration with Google auth from test-mode (allow-listed users only) to production (anyone can sign up)

Not bad for effort spent while out for a walk or on the London Underground [3].

How it works: Github workflows for cloud-hosted agentic coding

When I say “Github starts Claude Code in the cloud” it may not be clear exactly what I mean. Here’s an example of a workflow file from my project’s .github/workflows folder:

```yaml
name: Claude Issue Triage

on:
  issues:
    types: [labeled]

jobs:
  claude-triage:
    if: github.event.label.name == 'needs-elaboration'
    ...
    steps:
      - name: Checkout repository
        ...
      - name: Run Claude Code
        uses: anthropics/claude-code-action@v1.0.70
        with:
          claude_code_oauth_token: ${{ secrets.CLAUDE_CODE_OAUTH_TOKEN }}
          prompt: |
            GitHub issue #${{ github.event.issue.number }} ("${{ github.event.issue.title }}")
            requires your analysis...
          claude_args: "--max-turns 20 --dangerously-skip-permissions"
          show_full_output: "true"
```

Think of it like running a CI workflow in Github actions, except that instead of running against a branch or pull request, this workflow runs when an issue is labelled needs-elaboration. First it checks out the code, then it runs Anthropic’s Claude Code action. The action passes a fixed prompt to Claude Code plus metadata from the Github workflow (like the contents of the labelled issue).

It doesn’t have to be Claude Code; I could go with Opencode or the Copilot agent instead.
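The implementation step (the ready-for-dev label) can be sketched along the same lines. This is a hypothetical illustration rather than a file from my project: the workflow name, job name, runner, permissions and prompt wording are all assumptions, and only the action, the secret name and the claude_args flags are carried over from the triage example. How the pull request actually gets opened also depends on how the action authenticates to Github and which tools the agent is allowed to use.

```yaml
# Hypothetical sketch: run Claude Code when an issue is labelled ready-for-dev.
name: Claude Issue Implementation        # assumed name

on:
  issues:
    types: [labeled]

jobs:
  claude-implement:                       # assumed job name
    if: github.event.label.name == 'ready-for-dev'
    runs-on: ubuntu-latest                # assumed runner
    permissions:                          # assumed scopes for the job token
      contents: write                     # lets the job token push a branch
      pull-requests: write                # lets the job token open a PR
      issues: write                       # lets the job token comment on the issue
      id-token: write                     # requested in the action's published examples
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4

      - name: Run Claude Code
        uses: anthropics/claude-code-action@v1.0.70
        with:
          claude_code_oauth_token: ${{ secrets.CLAUDE_CODE_OAUTH_TOKEN }}
          # Assumed prompt wording, for illustration only.
          prompt: |
            Implement GitHub issue #${{ github.event.issue.number }}
            ("${{ github.event.issue.title }}") following the plan in its
            comments, then raise a pull request that references the issue.
          claude_args: "--max-turns 20 --dangerously-skip-permissions"
```

The only structural difference from the triage workflow is the label checked in the if condition and the prompt, which is why one label per stage is enough to drive the whole loop.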

Notes for people unfamiliar with unsupervised agentic coding

If AI-coding terms like gastown, taskmaster, ralph loops, or minions mean nothing to you, these notes about what changes when we stop intervening in agentic coding may be helpful.

First of all, the --dangerously-skip-permissions option on Claude Code may look scary, but it’s standard practice for folks who want to run Claude Code without any human intervention. I am less worried about doing this in a random Github actions runner in the cloud than I would be about running it on my local machine. My laptop remains safe for my day job.

In unsupervised agentic coding, there’s a transition from “human in the loop” (approval of individual code changes that the agent makes) to “human on the loop” where the agent’s output over time is assessed and its standard instructions are improved. I’m not telling Claude which code to modify or how. Instead, it decides and I have to live with the results until I can give it what is needed to solve a problem in a better way the next time. This is a lot like being a manager of human coders (at least one who does not want to be a bottleneck to team output).

Some of the code in this project is really bad, for example a 1500-line JSX file. I only noticed this because of how Claude was struggling to implement changes to this code. Ideally I would have noticed this earlier, but the path to recovery is fairly straightforward. I’ll tell Claude to identify pieces of the component that can be split into separate components and files and have it create new github issues that the agents themselves can plan and implement. This is just how I would work as a developer myself (my threshold for breaking the code down would be somewhat earlier than 1500 lines though).

I wouldn’t trade the progress I’ve made in this month for perfect code. There’s a lightbulb moment for every developer when they work on software that they themselves want to use: suddenly the choice to pursue higher quality code has to fight for development priority with wanting to use a new shiny feature. The delicate balance is in knowing that with too much tech debt (e.g. 1500-line components), the shiny features will take longer and longer to build until eventually “improve quality then build new feature” takes less time than just “build new feature”.

A crucial factor is that the number of lines of code is no longer linked to the time it took to produce them. I often start by asking for options to address a problem. Out of five options, three may be easily rejected. When there are two or more realistic options, I don’t need to reason about them in the abstract: I can have Claude build both and see how each of them feels. Choosing to try one option no longer carries the opportunity cost of not trying another.

Validation from Y Combinator

The AI coding scene moves fast. As I was writing the first draft of this post, I was puzzled that I hadn’t seen anyone else discussing a workflow like this. Between then and now, Twill.AI announced a real product which seems like the same idea but with some professional features, like more advanced sandboxing and integration with Slack, WhatsApp etc., that they’re betting are worth paying for.

Conclusion

Mine is a very low-stakes project which gives me freedom to experiment. There are serious gaps that I would need to close before applying this workflow to professional work, but I don’t think they’re insurmountable (certainly Twill.AI and many others are trying their best). I expect that over time I’ll be able to extend the agents’ work to close more and more gaps without breaking the bank. In the meantime, you can find me at the park with my daughter.

[1] Your actions will just stop running if either Claude or Github plans run out. There are time-based limits that refresh over different periods. Claude is based on tokens and I use their cheaper tier of models (Sonnet) to make the limit go further. Github Pro Plans include an actions-based limit of 3000 minutes per month. I use much less than 3000 minutes per month on my side project. Github has recently changed their pricing model, but it is mostly with regards to AI token pricing, and in this case I’m getting my tokens directly from Anthropic.

[2] Before you worry about the safety of running code without reading: there is no backend, no personal information tracked, and all data is stored in a user’s browser or in their own Google Drive. Also note that I don’t read code per-feature during the loop, but I do get to look at the code as a whole over time.

[3] A few weeks back I was watching people play a game that made me think of a new feature. I took out my phone to write an issue, then carried on watching. By the time I was home, the feature was ready in production.