What is LocalCloud?

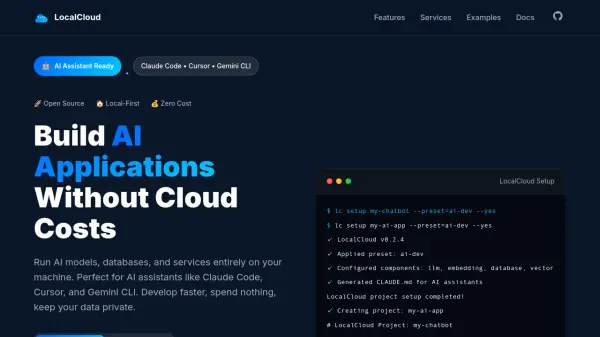

LocalCloud is an open-source platform designed for local-first AI development, allowing developers to run AI models, databases, and services entirely on their own machines. It orchestrates popular tools like Ollama for LLMs, PostgreSQL with pgvector for databases, Redis for caching, and MinIO for storage into a cohesive environment. This eliminates cloud costs, ensures data privacy, and supports AI assistants such as Claude Code, Cursor, and Gemini CLI with non-interactive setup options.

The platform includes features like secure tunneling with Cloudflare and Ngrok, vector search for RAG applications, and auto-generated documentation. With presets for different development needs, LocalCloud enables rapid setup in under five minutes, making it ideal for building AI chat applications, RAG document search systems, and API backends without relying on external cloud services.

Features

- Local AI Models: Run Llama, Mistral, Qwen, and other LLMs locally with OpenAI-compatible API using Ollama

- Multiple Databases: PostgreSQL with the pgvector extension and MongoDB for flexible data storage and vector search

- High Performance: Redis caching and job queues for low-latency applications

- Object Storage: S3-compatible storage with MinIO for files, images, and documents

- Secure Tunneling: Share work instantly with Cloudflare and Ngrok integration

- Vector Search: Built-in RAG support with pgvector for semantic search applications

- AI Assistant Ready: Non-interactive setup perfect for Claude Code, Cursor, and Gemini CLI with auto-generated documentation

- Component Control: Precise control over infrastructure with customizable components and models
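
As a rough illustration of the OpenAI-compatible API mentioned in the first feature, the sketch below builds a chat completion request against a local Ollama endpoint. It assumes Ollama's default address `http://localhost:11434` and a model named `llama3.2` that has already been pulled; both are assumptions, and a LocalCloud project may expose a different port or model. The helper names `build_chat_request` and `chat` are hypothetical and are not part of LocalCloud.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; a LocalCloud project may differ.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(prompt, model="llama3.2", base_url=OLLAMA_BASE_URL):
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # assumption: any model already pulled into Ollama
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, model="llama3.2"):
    """Send the request and return the assistant's reply text."""
    request = build_chat_request(prompt, model)
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # OpenAI-style responses carry the reply in choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the local model service running, calling `chat("Say hello in one sentence.")` should return the model's reply as a string. Because the endpoint follows the OpenAI request shape, existing OpenAI client code can usually be pointed at the local base URL instead.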

Use Cases

- Building AI chat applications with conversation history and memory

- Creating RAG document search systems with semantic search over documents

- Developing AI API backends with REST APIs, background job processing, and caching

- Running local AI models for development and testing without cloud dependencies

- Setting up vector databases for embeddings and semantic search applications
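
To make the semantic search use cases more concrete, here is a minimal sketch of the ranking that a pgvector similarity query performs. The pure-Python part computes cosine distance, which is the metric behind pgvector's `<=>` operator. The `documents` table, its columns, and the psycopg-style `%s` placeholders in `SEARCH_SQL` are assumptions for illustration, not a schema that LocalCloud is known to create.

```python
import math


def cosine_distance(a, b):
    """Cosine distance (1 - cosine similarity), the metric behind pgvector's <=>."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def top_k(query, documents, k=2):
    """Return the ids of the k documents closest to the query embedding."""
    ranked = sorted(documents, key=lambda doc: cosine_distance(query, doc[1]))
    return [doc_id for doc_id, _ in ranked[:k]]


# Equivalent query shape in PostgreSQL with pgvector.
# Assumption: a hypothetical table documents(id, content, embedding vector).
SEARCH_SQL = """
SELECT id, content
FROM documents
ORDER BY embedding <=> %s::vector
LIMIT %s;
"""

# Toy 3-dimensional embeddings; real ones come from an embedding model.
docs = [
    ("cats", [1.0, 0.0, 0.0]),
    ("dogs", [0.6, 0.8, 0.0]),
    ("taxes", [0.0, 0.0, 1.0]),
]
print(top_k([1.0, 0.05, 0.0], docs))  # → ['cats', 'dogs']
```

In a RAG pipeline, the documents returned by a query like this would be passed to the local LLM as context for the user's question.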

FAQs

- What operating systems does LocalCloud support?

  LocalCloud supports macOS, Linux, and Windows operating systems.

- How long does it take to set up LocalCloud?

  LocalCloud can be set up in under 5 minutes with its quick start process.

- What AI models can be run with LocalCloud?

  LocalCloud supports running Llama, Mistral, Qwen, and other LLMs locally through Ollama with an OpenAI-compatible API.

- Is LocalCloud suitable for production environments?

  LocalCloud is designed for development and testing, enabling local-first AI applications without cloud costs, but users should assess suitability for production based on their needs.

- What presets are available in LocalCloud?

  LocalCloud offers presets such as ai-dev (AI + Database + Vector + Cache), full-stack (all services + Storage + Tunneling), and minimal (AI models only).