Title

Snake Implementation using Deep Q Learning (Reinforcement Learning)

Who

- Eseoghene Ajueyitsi - eajueyit

- Kendra Lee - klee165

- Daniel Li - dli72

- Ezra Rocha - erocha1

Introduction

We are implementing a reinforcement learning version of the game Snake. Snake is a video game in which the player steers a growing line that becomes its own main obstacle. The snake grows longer by eating snacks placed on the board, and it must avoid running into both itself and the walls. The goal of the game is to grow as long as possible. We plan to use reinforcement learning to train a bot that can play the game on its own.

Related Work

Finnson and Molno's paper describes Deep Q-Learning: a combination of the Q-Learning algorithm and deep neural networks, in which the network approximates the Q-value function and, through it, the policy. Deep Q-Learning is the training algorithm we will apply to the Snake game. The RL agent improves its moves as it plays by repeatedly observing the state of the game, choosing an action, and receiving a reward for that action. The agent tries to maximize its reward as the game continues so that it makes better decisions over time. Its main goal is to find an optimal policy, one that maximizes the cumulative reward over a series of episodes. The Q-value function of a given policy π is

$$Q^{\pi}(s, a) = \mathbb{E}_{\pi}\left[ G_t \mid S_t = s, A_t = a \right]$$

where $G_t$ is the return (the discounted sum of future rewards) from time $t$. The paper explains that the agent seeks to maximize this function. The Q-values are learned with the update rule

$$Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma \max_{a' \in \mathcal{A}} Q(s_{t+1}, a') - Q(s_t, a_t) \right]$$

which the paper describes as computing the Q-value function by approximating the Bellman equation for Q-learning; here $\alpha$ is the learning rate and $\gamma$ is the discount factor. This lets the agent build on what it learned from earlier actions to make better decisions later, even in situations it has not seen before. Classic Q-learning stores the Q-values in a table; Deep Q-Learning replaces that table with a deep neural network that approximates the Q-value function, from which the agent’s policy is computed. For Snake, the agent starts with no prior knowledge of the optimal policy, so the network learns through repeated training on the states the agent visits.

To go into specifics, the paper summarizes the DQN Snake agent as follows. First, the Q-values are randomly initialized. Then, for each epoch and each visited state, the agent chooses an action “by using the exploration-exploitation trade-off” and receives a reward, which can be positive or negative. The agent records each visited state, the action it took, and the resulting reward until the game ends. After a certain (likely large) number of epochs, the neural network is trained. The resulting network allows our trained bot to play optimized games of Snake.
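The update rule above can be made concrete with a tabular sketch. This is an illustration of the equation, not our final implementation: it assumes a Python dictionary as the Q-table, relative moves named "straight", "left", and "right", and placeholder values for `alpha` ($\alpha$) and `gamma` ($\gamma$). All names are hypothetical.

```python
from collections import defaultdict


def q_learning_update(q, state, action, reward, next_state, actions,
                      alpha=0.1, gamma=0.9, done=False):
    """Apply one tabular Q-learning update in place and return the new Q(s, a).

    q maps (state, action) pairs to values; unseen pairs default to 0.0.
    """
    # A terminal transition (the snake died) has no future value to bootstrap from.
    best_next = 0.0 if done else max(q[(next_state, a)] for a in actions)
    # TD target: r_{t+1} + gamma * max_a' Q(s_{t+1}, a')
    td_target = reward + gamma * best_next
    # Move Q(s_t, a_t) a fraction alpha of the way toward the target.
    q[(state, action)] += alpha * (td_target - q[(state, action)])
    return q[(state, action)]


# Hypothetical relative moves for the snake.
actions = ["straight", "left", "right"]
q = defaultdict(float)
q[("s1", "left")] = 2.0
new_value = q_learning_update(q, "s0", "straight", 1.0, "s1", actions)
print(new_value)
```

In Deep Q-Learning, the lookup `q[(next_state, a)]` would instead be the network's output for `next_state`, and `td_target` would serve as the regression target when training the network.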

Data

This project does not require a dataset. Because we are using reinforcement learning, the training data is generated as the agent interacts with the environment and receives different rewards based on its performance.
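Since the data comes from play rather than from a fixed dataset, DQN implementations commonly keep a replay memory of recent transitions and train on random minibatches drawn from it. The sketch below shows that idea under our own assumptions: `ReplayMemory`, `Transition`, and the capacity and batch size are hypothetical placeholders, not a settled design.

```python
import random
from collections import deque, namedtuple

# One step of experience produced by the agent playing the game.
Transition = namedtuple(
    "Transition", ["state", "action", "reward", "next_state", "done"]
)


class ReplayMemory:
    """Fixed-size buffer of past transitions; the oldest are dropped first."""

    def __init__(self, capacity):
        self.buffer = deque(maxlen=capacity)

    def push(self, *args):
        """Store one transition (state, action, reward, next_state, done)."""
        self.buffer.append(Transition(*args))

    def sample(self, batch_size):
        # A uniform random minibatch breaks up correlations between
        # consecutive steps of the same game.
        return random.sample(list(self.buffer), batch_size)

    def __len__(self):
        return len(self.buffer)


memory = ReplayMemory(capacity=3)
for step in range(5):
    memory.push(step, 0, 0.0, step + 1, False)
batch = memory.sample(2)
print(len(memory), [t.state for t in batch])
```

With a capacity of 3, only the last three of the five pushed transitions remain, so the memory always reflects the agent's most recent behavior.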

Methodology

How are you training the model?

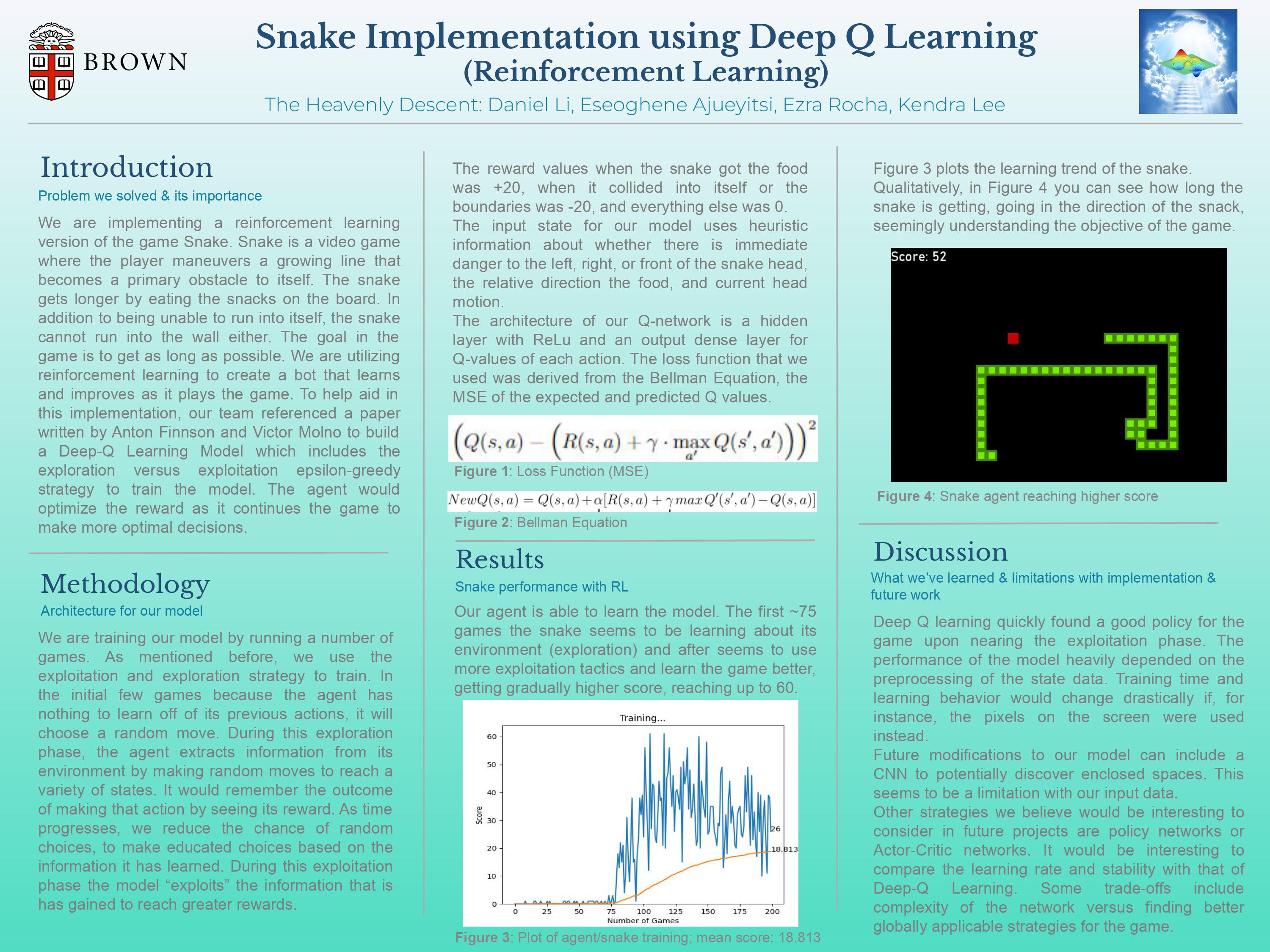

We are going to train the model by having it play a number of games, using an exploration versus exploitation strategy. In the first few games, the model will mostly choose random moves. This is the exploration phase of the algorithm: we want the model to gather as much information about the environment as possible. As training goes on, the model will make fewer random choices and will instead choose moves based on what it has learned from the environment. This is called exploitation, since the model “exploits” the information it has gained from moving around in the environment.
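A common way to implement this shift from exploration to exploitation is an epsilon-greedy policy whose epsilon shrinks as more games are played. The sketch below assumes a linear schedule; `eps_start`, `eps_end`, and `decay_games` are hypothetical values we would tune, and `q_values` stands in for the model's estimates for each available move.

```python
import random


def epsilon_by_game(game_index, eps_start=1.0, eps_end=0.05, decay_games=200):
    """Linearly anneal epsilon from eps_start to eps_end over decay_games games."""
    fraction = min(game_index / decay_games, 1.0)
    return eps_start + fraction * (eps_end - eps_start)


def choose_action(q_values, epsilon, rng=random):
    """Epsilon-greedy choice: explore with probability epsilon, else exploit."""
    if rng.random() < epsilon:
        # Exploration: pick a random move.
        return rng.randrange(len(q_values))
    # Exploitation: pick the move with the highest estimated Q-value.
    return max(range(len(q_values)), key=lambda i: q_values[i])


for game in (0, 100, 200, 400):
    print(game, round(epsilon_by_game(game), 3))
print(choose_action([0.1, 0.9, 0.3], epsilon=0.0))
```

A linear schedule is only one option; exponential decay of epsilon is another common choice, and which works better for Snake is something we would have to test.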

We expect to face several challenges while implementing this model. The first is reinforcement learning itself: since we are covering this topic late in the year, much of our knowledge of how reinforcement learning works will be self-taught from outside sources. Another likely challenge is finding the right architecture for our Deep Q model. Choosing the hidden layers that let the snake perform at its best will be difficult, because a careless choice can dramatically increase the time it takes to train the snake. This involves not only experimenting with the architecture but also tuning the hyperparameters, which will likely take some time.
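One quick way to see how architecture choices affect training cost is to count the trainable parameters of a fully connected Q-network. The sketch assumes, purely for illustration, a network with 11 input features and 3 outputs (one per relative move); these sizes are hypothetical, not our chosen design.

```python
def count_parameters(layer_sizes):
    """Number of weights plus biases in a fully connected network.

    layer_sizes lists the width of every layer, input first and output last.
    """
    return sum(
        (n_in + 1) * n_out  # n_in weights plus one bias per output unit
        for n_in, n_out in zip(layer_sizes, layer_sizes[1:])
    )


# Hypothetical Snake Q-network: 11 state features in, 3 actions out.
for hidden in ([64], [256], [256, 256]):
    sizes = [11, *hidden, 3]
    print(sizes, count_parameters(sizes))
```

Under these assumptions, adding a second 256-unit hidden layer grows the network from 3,843 to 69,635 parameters, roughly an eighteen-fold increase, which is one reason architecture experiments can quickly become expensive.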

Metrics

What constitutes “success?” What experiments do you plan to run? What are your base, target, and stretch goals?

For this project, we do not have a notion of accuracy, since we are training a bot within a game. Instead, we are going to use the game score to measure how well the snake (i.e., the bot) is performing. After a certain number of games, we hope the snake will develop a strategy that lets it survive as long as possible, moving around while avoiding the edges of the board and its own body. We will encourage this by rewarding the snake for earning a high score, which should improve its performance as we train the model. We are not sure how long training will take, but we hope the snake will eventually avoid frequent collisions and cover around half of the board. Even so, we expect the record score to plateau after a certain number of games, since at some point the model may be unable to improve further. A stretch goal is for our snake bot to reach a score at which the snake takes up at least 80% of the board. For our experiments, we are going to track how well the agent plays at the beginning and how much it improves over time. We plan to use matplotlib to plot this data so that we can see how the model is performing.
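The quantities we plan to track can be computed from a list of per-game scores. This sketch assumes scores are stored in a plain list and that board coverage is the snake's length divided by the number of cells; the helper names and the averaging window are hypothetical. Plotting uses standard matplotlib `pyplot` calls, imported inside `plot_scores` so the other helpers work without matplotlib installed.

```python
def moving_average(scores, window=10):
    """Mean of the last `window` scores at each game (shorter at the start)."""
    averages = []
    for i in range(len(scores)):
        recent = scores[max(0, i - window + 1): i + 1]
        averages.append(sum(recent) / len(recent))
    return averages


def record_scores(scores):
    """Best score seen so far after each game."""
    best, records = float("-inf"), []
    for s in scores:
        best = max(best, s)
        records.append(best)
    return records


def board_coverage(snake_length, width, height):
    """Fraction of the board's cells occupied by the snake."""
    return snake_length / (width * height)


def plot_scores(scores, window=10):
    """Plot raw scores, a moving average, and the running record."""
    import matplotlib.pyplot as plt  # imported lazily; only needed for plotting

    plt.plot(scores, label="score")
    plt.plot(moving_average(scores, window), label=f"{window}-game mean")
    plt.plot(record_scores(scores), label="record")
    plt.xlabel("game")
    plt.ylabel("score")
    plt.legend()
    plt.show()


print(record_scores([1, 3, 2, 5]))
print(board_coverage(50, 10, 10))
```

The record curve is the one we expect to plateau, while the moving average shows whether typical games keep improving; the coverage helper ties a score to our 50% and 80% goals once we fix the board size and starting length.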

Ethics

Why is Deep Learning a good approach to this problem?

On its own, tabular reinforcement learning stores a value for every state-action pair it encounters in an environment (e.g., a game), so that we end up with a large table built from state, action, and reward tuples. However, the more possible states and actions an environment has, the more difficult and impractical it becomes to use standard tabular RL implementations. Adding neural networks to a foundational RL approach therefore lets our agent handle a much larger space of states and actions. Rather than store a value for every possible situation in memory, we train our model to estimate the best actions, a process that takes many iterations to refine. We also wanted to explore the benefits of combining neural networks with RL in an accessible game to gain an understanding of how larger-scale RL problems may be implemented in other contexts.
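To give a sense of scale, here is a rough upper bound on how many raw board layouts a lookup table might have to cover. It assumes each cell is one of three kinds (empty, snake, or food); most of these layouts can never occur in a real game, so this is only an illustration of growth, not an exact count of Snake states.

```python
def raw_grid_state_bound(width, height, cell_kinds=3):
    """Upper bound on distinct raw board layouts when every cell takes one
    of `cell_kinds` values (e.g. empty, snake, food)."""
    return cell_kinds ** (width * height)


for side in (5, 10, 20):
    bound = raw_grid_state_bound(side, side)
    print(f"{side}x{side} board: up to {bound:.3e} raw layouts")
```

Even a 10x10 board gives a bound of about 5e47 layouts, far too many to store one Q-value per layout, whereas a network's parameter count stays fixed no matter how many states it is asked to evaluate.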

How are you planning to quantify or measure error or success? What implications does your quantification have?

As with most RL problems, we refer to the quantification of success as "reward" (discussed in earlier sections of this outline). During the implementation of our project, we will experiment with the values that define our model's reward system, since they will ultimately affect the performance of our snake agent. At the scale of our project, the only implication of our reward system is that the snake bot should eventually make few mistakes, or at least far fewer than at the beginning, emulating a sophisticated AI bot that plays the game for as long as possible. In other applications of RL, reward systems may have greater consequences. For example, if we were creating a robot to assist humans in some industry, we would want to minimize the risk of the robot performing its tasks poorly; otherwise, we risk creating setbacks and/or hazardous situations for workers.
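Keeping the reward values in one configurable place makes the experiments described above easy to run. In this sketch, `RewardConfig`, `reward_for`, and the default values (+10 for food, -10 for dying, 0 per ordinary step) are hypothetical placeholders we would tune, not settled choices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RewardConfig:
    # Placeholder values to tune during experiments.
    food: float = 10.0
    death: float = -10.0
    step: float = 0.0


def reward_for(ate_food: bool, died: bool,
               config: RewardConfig = RewardConfig()) -> float:
    """Reward for a single move under the given configuration."""
    if died:
        return config.death
    if ate_food:
        return config.food
    return config.step


print(reward_for(ate_food=True, died=False))
print(reward_for(ate_food=False, died=False, config=RewardConfig(step=-0.1)))
```

A small negative `step` value is one possible way to discourage the snake from circling without eating; whether it actually helps in our game is one of the experiments we would run.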

Division of Labor

- Poster: everybody will contribute to this

- Ese: Game (implementing the interface of the snake game taking in both the Model and the Agent)

- Kendra: Model (essentially, the Q-learning model discussed in this outline)

- Ezra: Agent (the snake bot, whose behavior will both update the training model and will be changed by the model)

- Daniel: Model training/loss function/hyperparameters

Mid-Way Reflection

DL Reflection - Our Progress So Far

Poster

DL Final Poster Google Slide Version

Recording

DL Final Recording Video - Teaser Presentation